Validation Lab Innovations

Accelerate Lab Validation for Semiconductor and Electronics

With the increasing need to be “first,” technology companies are forced to accelerate every phase of product development. Inefficiencies in design and test can have a significant impact on release dates—which makes streamlining the engineering workflow more important than ever. Taking the steps to elevate tools, people, and processes is the most reliable way to keep up with the pace of change in computing, wireless technology, and other areas of innovation.

Semiconductor Validation and Test Best Practices

Explore best practices to accelerate product development and enable smart analytics in and beyond the lab.

NI Commitment

Streamline Validation Lab Workflows and Accelerate Time to Market

The rate of innovation is increasing faster than ever before. NI is committed to providing the technical expertise to help streamline engineering and validation workflows. From software that enables automation and data analytics to scalable hardware solutions, NI is here to help meet aggressive market schedules.

We repeatedly observe that customers who adopt our software-centric, automated approach to test and measurement improve product quality and accelerate time to market while reducing cost.

Ritu Favre

Executive Vice President and General Manager, Business Units

NI

Modernizing the Lab is a Journey You Can Start Anywhere

Now more than ever, the boundaries of measurement science are being pushed. Keeping up with evolving industry needs is not a simple feat. Not only does each new technology create business opportunities for companies to take share in new markets, but it also changes the way we design and test products. From 5G to mobility to digital transformation, these rapid technological advances ultimately drive faster development timelines and increasingly motivate a software-centric approach to product design.

As the pace of technical innovation accelerates, every phase of product development becomes more critical. Inefficiencies in design and test can significantly impact release dates. In parallel with the intense pressure to be “first,” organizations must also cope with the fact that each new design is more complex than the one before it. This added complexity often drives the need for higher volume characterization, which makes being first even more challenging.

Increasing Design Complexity Demands Increased Validation Lab Efficiency

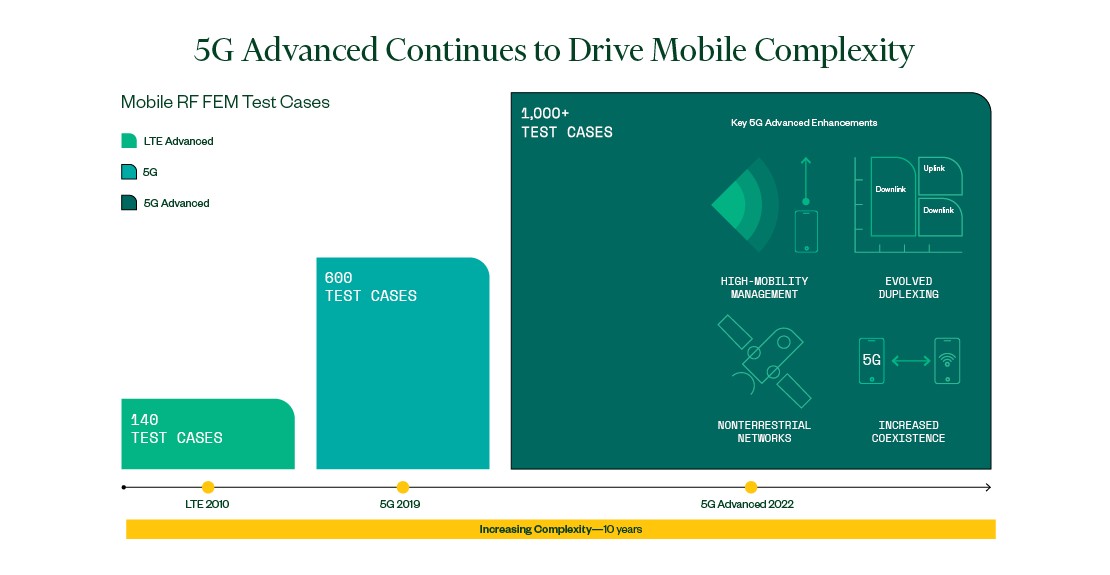

Consider the progression in complexity of a relatively “simple” RF front-end module. When characterizing a 4G RF front-end module 10 years ago, one might have to deal with fewer than 100 test cases—each with a different combination of radio band, carrier bandwidth, and waveform type. Moving to a 5G front-end module, the number of test cases increased by almost 10x. However, product schedules don’t allow for a 10x increase in validation or characterization time. If anything, the expectation is even faster development.
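To make the growth in test cases concrete, the sketch below enumerates a characterization matrix as the Cartesian product of radio band, carrier bandwidth, and waveform type. The band lists, bandwidths, and waveform names are illustrative assumptions rather than values from any real test plan, and `build_test_matrix` is a hypothetical helper. It only shows how each added option multiplies the total.

```python
from itertools import product

# Illustrative sweep values only (assumptions, not a real test plan).
BANDS_4G = ["B1", "B3", "B7", "B20"]
BANDWIDTHS_4G_MHZ = [5, 10, 20]
WAVEFORMS_4G = ["SC-FDMA QPSK", "SC-FDMA 16QAM", "SC-FDMA 64QAM"]

BANDS_5G = ["n1", "n3", "n7", "n28", "n41", "n77", "n78", "n79"]
BANDWIDTHS_5G_MHZ = [10, 20, 40, 60, 100]
WAVEFORMS_5G = [
    "DFT-s-OFDM QPSK", "DFT-s-OFDM 16QAM", "DFT-s-OFDM 64QAM",
    "CP-OFDM QPSK", "CP-OFDM 16QAM", "CP-OFDM 64QAM", "CP-OFDM 256QAM",
]


def build_test_matrix(bands, bandwidths_mhz, waveforms):
    """Return one test case per (band, bandwidth, waveform) combination."""
    return [
        {"band": band, "bandwidth_mhz": bw, "waveform": wf}
        for band, bw, wf in product(bands, bandwidths_mhz, waveforms)
    ]


matrix_4g = build_test_matrix(BANDS_4G, BANDWIDTHS_4G_MHZ, WAVEFORMS_4G)
matrix_5g = build_test_matrix(BANDS_5G, BANDWIDTHS_5G_MHZ, WAVEFORMS_5G)

# The count is the product of the list lengths, so a few extra options
# per dimension multiply the total number of test cases.
print(len(matrix_4g), len(matrix_5g))
```

Because the count is a product rather than a sum, modest growth in each dimension is enough to produce the order-of-magnitude jump described above, which is why manual execution stops scaling.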

In addition to the need to do more measurements, the measurements themselves are becoming more complex. For 5G mmWave devices, the adoption of antenna-in-package technology means that there isn’t a physical connection to access the mmWave signal. As a result, many 5G mmWave devices require over-the-air testing—a completely new test methodology.

The bottom line is that engineers are being tasked with developing and validating more complex devices in less time and at lower cost than ever before. In many ways, the design, validation, and test engineers that we work with are caught in the middle of the competing priorities of their organization. Since doubling or tripling test capacity is often not a viable choice, maximizing resource efficiency is the only way to meet market schedules for chipsets, components, and new features. This reality elevates the importance of automation software in the lab. These tools are among the most effective ways to stretch test equipment budgets as far as possible.

While software automation tools help maximize resources, they also create an opportunity for organizations to tackle inefficiencies at a larger scale. For instance, natural silos between R&D teams and sites often limit collaboration and lead to duplicated effort, especially in large companies. Not sharing best practices or communicating across teams represents a significant missed opportunity.

The Power of Standardizing Test Software

While some duplication will always happen, the most successful organizations in the world have driven efficiency by standardizing test and measurement software globally. In our interactions with engineering labs across the world, we’ve seen that technology companies that adopt a common software framework ultimately reduce their engineering costs and accelerate design schedules. For example, global semiconductor leader NXP leveraged advanced automation and standardization techniques in semiconductor validation, boosting efficiency and reducing time to market with NI’s robust tools. Time and time again, organizations like NXP that first automate measurements and then standardize on test automation software in the lab are significantly more efficient. Software can improve productivity, but to do so, it must scale.

Implementing a standardized measurement framework helps engineers maximize measurement IP reuse and reduce setup time. Simply put, it is one of the most impactful approaches to help streamline engineering workflows in the validation lab. One of the things best-in-class organizations do effectively is centralize the development, maintenance, and distribution of automated test software. Standardized software enables quicker device characterization and often makes it possible to expand measurement coverage, regardless of which software the engineering teams adopt. Many of our customers have successfully standardized software using a wide range of languages, from Python and C+ to LabVIEW.
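One way to picture centralized measurement IP is a shared registry that publishes each measurement routine under a stable name, so every team calls the same implementation instead of rewriting it. The following is a minimal sketch under that assumption. The `MEASUREMENTS` registry, the `measurement` decorator, and both example routines are hypothetical and do not represent any NI product API.

```python
import math
from typing import Callable, Dict, List

# Hypothetical shared registry: the single place where routines are published.
MEASUREMENTS: Dict[str, Callable[[List[float]], float]] = {}


def measurement(name: str):
    """Register a measurement routine under a stable, shared name."""
    def decorator(func):
        if name in MEASUREMENTS:
            raise ValueError(f"measurement {name!r} is already registered")
        MEASUREMENTS[name] = func
        return func
    return decorator


@measurement("mean_power_dbm")
def mean_power_dbm(samples_mw: List[float]) -> float:
    """Average power of linear samples given in mW, reported in dBm."""
    mean_mw = sum(samples_mw) / len(samples_mw)
    return 10.0 * math.log10(mean_mw)


@measurement("peak_to_average_db")
def peak_to_average_db(samples_mw: List[float]) -> float:
    """Ratio of peak power to mean power, reported in dB."""
    mean_mw = sum(samples_mw) / len(samples_mw)
    return 10.0 * math.log10(max(samples_mw) / mean_mw)


def run(name: str, samples_mw: List[float]) -> float:
    """Look up a routine by name and apply it to the captured samples."""
    return MEASUREMENTS[name](samples_mw)
```

A test script at any site could then call `run("mean_power_dbm", samples)` and get the same calculation as every other team. Rejecting duplicate names is a deliberate choice in this sketch: it forces a fix to land in the shared routine rather than in a local copy.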

What’s more, standardized software also enables consistency in data capture. This consistency helps lay the foundation for smarter data analytics. Within the next decade, data analytics software will play an increasing role in product development workflows. Today, we already observe the challenges associated with correlating simulation results from the design process to the measurement results in the characterization lab. Effective use of data management and analytics tools is the next step in product life cycle management.
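As an illustration of consistent data capture, the sketch below defines a single record shape that every bench would write, serialized as one JSON object per line. The `MeasurementRecord` class and its field names are assumptions chosen for this example, not a schema from NI or any specific tool.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class MeasurementRecord:
    """Hypothetical common record: the same fields for every result."""
    dut_id: str          # identifier of the device under test
    measurement: str     # shared measurement name
    value: float         # numeric result
    unit: str            # unit of the result, always stored explicitly
    timestamp_utc: str   # ISO 8601 timestamp supplied by the caller


def to_json_line(record: MeasurementRecord) -> str:
    """Serialize a record to one JSON line with a stable key order."""
    return json.dumps(asdict(record), sort_keys=True)


def from_json_line(line: str) -> MeasurementRecord:
    """Rebuild a record from a JSON line; missing or extra keys raise TypeError."""
    return MeasurementRecord(**json.loads(line))


record = MeasurementRecord(
    dut_id="DUT-0001",
    measurement="mean_power_dbm",
    value=-3.2,
    unit="dBm",
    timestamp_utc="2024-01-01T00:00:00Z",
)
line = to_json_line(record)
```

When every site emits the same fields and units, results from simulation and from the characterization lab can be joined on shared keys such as `dut_id` and `measurement` without per-team cleanup scripts.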

A Focus on Data

Consistent with the need to accelerate product development, data management and analytics tools provide a unique opportunity to improve productivity. Today, each stage of design produces immense amounts of data. That data is often poorly managed and insufficiently leveraged throughout the design process. As product complexity escalates with each generation, data management and analytics tools will become an increasingly critical aspect of the design workflow. Fortunately, we continue to see rapid innovations in analytics technologies like artificial intelligence and machine learning. As a result, organizations around the world will increasingly rely on these tools to accelerate product development.

However, this ideal end state of smart product management doesn’t happen overnight. Modernizing the validation lab is an evolving journey, one with many opportunities and pathways. Whether it’s by starting to automate measurements or implementing adaptable software and hardware solutions, every lab has an opportunity to become the next center of operational excellence in its organization. While there is inherent risk associated with any change, the payoffs can be monumental. Rising to the technological challenges of tomorrow starts with considering what modernizing the lab could mean for your team today. Elevating tools, people, and processes is the only surefire way of keeping up with the pace of change.

Keeping Up with Change

Evolving validation requirements and increasing DUT complexity make it harder for engineering teams to meet aggressive market schedules. Implementing modern approaches in the lab, such as automation and standardization, helps streamline workflows, scale validation, and accelerate time to market for new components, devices, and technologies.

A Modernized Lab Approach That Accelerates Post-Silicon Validation

Faced with increasing device complexity and time-to-market pressure, companies are seeking ways to maximize the efficiency of their validation teams. Read about the approaches they are adopting to modernize their labs.