Logging Data with NI Citadel

Overview

This article presents a technical overview of the NI Citadel 5 database and describes how you can use the Citadel database to log measurement data. This white paper is useful to anyone interested in using Citadel for permanent or temporary storage of historical data related to industrial monitoring or to test and measurement applications.

Contents

- Introduction to the Citadel Database

- Citadel Database Structure

- Citadel Operations

- Logging Data to a Citadel Database

- Retrieving Data from a Citadel Database

- Networking with Citadel

- Citadel Security and Robustness

Introduction to the Citadel Database

Citadel is an integral component of many NI software products, including:

· LabVIEW Datalogging and Supervisory Control (DSC) Module

· Lookout

· DIAdem

· VI Logger

As a common data storage mechanism, Citadel allows these software products to exchange industrial monitoring or test and measurement data. For example, a researcher can use DIAdem to analyze data generated by the DSC Module or Lookout without modifying, converting, or reprocessing the original data.

Citadel offers the following benefits that improve productivity while saving time and money.

· Citadel is optimized for real-time logging and historical data retrieval. This behavior increases application performance while saving valuable system resources, especially in large applications.

· Citadel includes advanced data visualization and management components. You do not need to develop custom data visualization or management tools for an application.

· You do not need programming experience or prior knowledge of database systems to use Citadel.

· Citadel is inherently network-aware, allowing you to share data seamlessly among team members or between data terminals.

· Citadel does not require initial configuration, which reduces start-up and system familiarization time.

Citadel Database Structure

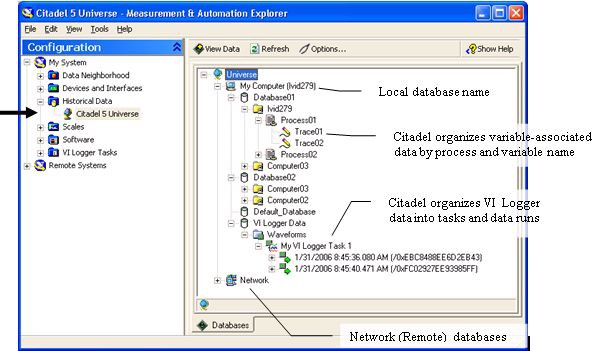

Use the Historical Data Viewer to view the data stored in any Citadel database. Select My System»Historical Data in the Configuration tree of NI Measurement & Automation Explorer (MAX) to open the Historical Data Viewer.

Citadel organizes data into a three-tiered hierarchy containing the originating computer name, the process name, and the trace name. Figure 1 displays a graphical overview of a typical Citadel database in the Historical Data Viewer.

Figure 1: Citadel Database Structure

The Historical Data Viewer also provides access to multiple databases on the same computer and to remote databases.

In addition to trace views, Citadel provides waveform hierarchies containing data logged from VI Logger and dataset hierarchies created using the DataSet Marking I/O Server in the DSC Module.

Traces and Subtraces

Citadel organizes databases into traces or datasets. Traces are uniquely named and identify a group of subtraces that together form a historical record of trace values. Subtraces are composed of data runs with constant resolution and logging parameters.

A subtrace is an arbitrary data stream containing a single type of data with associated metadata. Subtraces are indexed by time, so you can locate a time position within a subtrace without reading through the entire stream. Because data points are indexed by time, all data in a subtrace must have non-decreasing timestamps. If a back-in-time event occurs or the data type changes, Citadel must create a new subtrace. A data type change includes changing the base data type, such as double or Boolean, and changing the format in which data is serialized to disk. Serialization changes include changing the compression by changing the value or time resolution, changing the type of the variable logged to a variant subtrace, and even changing the attributes set on the variant. NI recommends that you do not set attributes on variants logged to Citadel.

Though Citadel provides no direct interface for working with individual subtraces, knowledge of subtraces is important because subtraces can have a significant impact on database performance. In particular, a trace that contains many subtraces performs worse than a trace with fewer subtraces. In typical use cases, Citadel does not need to create multiple subtraces.

If the Citadel service or any local Citadel client, reader, or writer fails to shut down cleanly while accessing a database, Citadel re-indexes the database upon restart. When Citadel re-indexes a database, a subtrace may be damaged by the failure that triggered the re-indexing. Therefore, when Citadel re-indexes a database, all existing subtraces are closed permanently and new data is logged into a fresh subtrace.

The following events can trigger the creation of a new subtrace:

· The trace data type changed.

· You log a “back-in-time” value.

· The logging properties, such as logging resolution, changed.

· The Citadel service terminated abnormally, such as during a power loss or system crash.
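The rules above can be modeled in a few lines. The following is a minimal Python sketch, not the Citadel API: the `Trace` and `Subtrace` classes and the `log` and `reindex` methods are hypothetical, and the model assumes a new subtrace starts whenever the data type or logging resolution changes, a timestamp goes backward, or the database has been re-indexed after an unclean shutdown.

```python
from dataclasses import dataclass, field

# Hypothetical model of Citadel's subtrace rules; not part of any NI API.

@dataclass
class Subtrace:
    data_type: str
    resolution: float
    points: list = field(default_factory=list)  # (timestamp, value), non-decreasing time

@dataclass
class Trace:
    name: str
    subtraces: list = field(default_factory=list)
    force_new: bool = False  # set by reindex() to mimic an unclean shutdown

    def log(self, timestamp: float, value, data_type: str, resolution: float) -> None:
        current = self.subtraces[-1] if self.subtraces else None
        back_in_time = bool(current and current.points and timestamp < current.points[-1][0])
        if (
            current is None
            or self.force_new                      # re-index closed all subtraces
            or current.data_type != data_type      # trace data type changed
            or current.resolution != resolution    # logging properties changed
            or back_in_time                        # "back-in-time" value
        ):
            current = Subtrace(data_type, resolution)
            self.subtraces.append(current)
            self.force_new = False
        current.points.append((timestamp, value))

    def reindex(self) -> None:
        # After re-indexing, existing subtraces are closed permanently.
        self.force_new = True
```

Equal timestamps are accepted in this model, because only non-decreasing timestamps are required within a subtrace.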

Subtraces consist of data runs of up to 100 points. For example, data in analog traces is compressed based on delta values and the logging resolution. After every 100 points, Citadel logs an absolute measurement value, which resets the compression algorithm and starts a new data run. This behavior prevents the accumulation of errors and reinforces data integrity within the database.

Datasets are an alternative grouping of data that associate a “run” with a group of traces and time ranges. Datasets can be created using VI Logger and the DataSet Marking I/O Server in the LabVIEW DSC Module.

Trace Properties

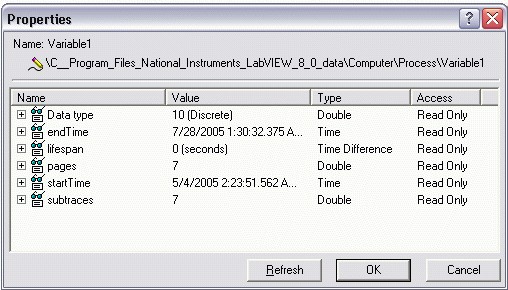

Citadel associates a variety of properties with each trace in the database. Use the Historical Data Viewer to review these properties. Right-click a trace in the Historical Data Viewer and select Properties from the shortcut menu to display properties for the trace. Figure 2 displays an example of a trace Properties dialog box.

Figure 2: Trace Properties Dialog Box

The Data type property indicates the most generic type associated with a trace. The most generic type determines the reader to use to retrieve the majority of the trace contents.

The data types that Citadel supports, from most to least generic, are:

- Binary (LabVIEW String)

- Variant

- Unicode String

- ANSI String

- Double

- BitArray

- Logical
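Because the Data type property reports the most generic type associated with a trace, it can be thought of as the highest-ranked type in the list above among the trace's subtraces. The sketch below assumes exactly that; `GENERALITY` and `most_generic` are hypothetical names, and the type labels are plain strings rather than Citadel identifiers.

```python
# Citadel data types ordered from most to least generic, as listed above.
GENERALITY = [
    "Binary", "Variant", "Unicode String", "ANSI String", "Double", "BitArray", "Logical",
]

def most_generic(subtrace_types):
    """Return the most generic type among a trace's subtrace types."""
    if not subtrace_types:
        raise ValueError("a trace needs at least one subtrace type")
    # A lower index in GENERALITY means a more generic type.
    return min(subtrace_types, key=GENERALITY.index)
```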

The startTime and endTime properties of a trace indicate the actual start and end times of the trace in Coordinated Universal Time (UTC). UTC avoids all conflicts associated with daylight saving time or varying time zones. Although Citadel stores timestamps in UTC, the Historical Data Viewer displays times in the local time zone and appends the current time zone name to the end of the time string in the Value column of the Properties dialog box.
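The advantage of storing UTC shows up around a daylight saving time transition, when the local wall clock repeats an hour. In the following Python sketch, the fixed-offset `CDT` and `CST` zones are stand-ins chosen for illustration (a real viewer would use a full time zone database), and `to_display` is a hypothetical helper that mimics appending the zone name to the displayed time.

```python
from datetime import datetime, timedelta, timezone, tzinfo

# Illustrative fixed-offset zones; real applications should use a tz database.
CDT = timezone(timedelta(hours=-5), "CDT")
CST = timezone(timedelta(hours=-6), "CST")

def to_display(utc_time: datetime, local_zone: tzinfo) -> str:
    """Render an aware UTC timestamp in a local zone, with the zone name appended."""
    if utc_time.tzinfo is None:
        raise ValueError("expected an aware UTC datetime")
    local = utc_time.astimezone(local_zone)
    return f"{local:%Y-%m-%d %H:%M:%S} {local.tzname()}"
```

Two UTC instants an hour apart can map to the same local wall-clock time, yet they remain distinct and correctly ordered when stored in UTC.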

The lifespan property indicates how long trace data remains in the Citadel database before Citadel reuses the storage space taken up by old trace data. For instance, if you configure a trace with a lifespan of 10 days, only the ten most recent days' worth of data remain in the database. After ten days, Citadel overwrites the oldest data. The lifespan of a trace is defined by the application that writes data to the database and can be configured only for each process, not for each trace. A lifespan of 0 indicates an infinite lifespan.

Setting a lifespan specifies that Citadel can delete data older than the lifespan in order to save space. However, the database does not guarantee the deletion of expired data on any schedule. Lifespanning occurs only when the process that originally logged the data actively logs new data to the database. For example, if you copy data to a CD and, 15 years later, reattach the database to retrieve the data, the data does not disappear due to the lifespanner.
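The lifespan rules above can be summarized as a predicate. This is a simplified Python sketch under stated assumptions: lifespan is measured in days, 0 means infinite, and expired data becomes eligible for reuse only while the originating process is logging. The function names are hypothetical, and the sketch says nothing about when Citadel actually reclaims pages.

```python
from datetime import datetime, timedelta
from typing import Optional

def lifespan_cutoff(now: datetime, lifespan_days: int) -> Optional[datetime]:
    """Data older than the returned time is expired; None means an infinite lifespan."""
    if lifespan_days == 0:
        return None
    return now - timedelta(days=lifespan_days)

def eligible_for_reuse(point_time: datetime, now: datetime,
                       lifespan_days: int, process_is_logging: bool) -> bool:
    """True if storage holding point_time may be reused (not that it will be)."""
    cutoff = lifespan_cutoff(now, lifespan_days)
    if cutoff is None or not process_is_logging:
        # Infinite lifespan, or a database that no process is logging to.
        return False
    return point_time < cutoff
```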

The pages property indicates the number of pages the trace is using and provides a rough estimate of how much disk space the trace uses. Each page takes up 4KB of space.

The subtraces property indicates how many subtraces are in the trace.

VI Logger Data

VI Logger stores waveform data to Citadel in groups of runs that belong to VI Logger tasks. Each run corresponds to a single acquisition of data. A VI Logger run consists of between one and three subtraces: a waveform subtrace containing data from hardware, a waveform subtrace containing calculated data that the VI Logger application generates, and a subtrace containing VI Logger event data. VI Logger subtraces contain raw waveform data stored as arrays of uncompressed values. Waveform subtraces do not contain serialized timestamps because the waveform time stamp can be calculated from its startTime and deltaT attributes. VI Logger uses uncompressed subtraces to maximize throughput to disk, at the cost of greatly increased disk and network usage. The VI Logger event subtrace uses the same value or time stamp format as single-point traces to log arbitrary-length Boolean arrays with compression.
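Because waveform subtraces do not serialize timestamps, a reader reconstructs them from the waveform's startTime and deltaT attributes as startTime + i * deltaT for sample i. The Python sketch below shows that reconstruction; `expand_waveform` is a hypothetical helper, and times are plain seconds rather than LabVIEW timestamps.

```python
def expand_waveform(start_time: float, delta_t: float, samples: list) -> list:
    """Pair each raw waveform sample with its computed timestamp."""
    if delta_t <= 0:
        raise ValueError("deltaT must be positive")
    # Only start_time and delta_t are stored; each sample's time is derived.
    return [(start_time + i * delta_t, y) for i, y in enumerate(samples)]
```

Storing two attributes per waveform instead of one timestamp per sample is what keeps the uncompressed format compact with respect to time information.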

Supported Data Types

Though optimized for numerical data logging, Citadel supports the following data types:

· Analog—Citadel compresses analog values based on the specified logging resolution.

· Integer and bit-array—Citadel does not compress discrete integers. BitArray subtraces are stored as 32-bit (DWORD) unsigned integers.

· String—Citadel supports three string types: LabVIEW strings, null-terminated strings, and Unicode strings.

· Variant—Citadel stores raw variant data but optimizes storage by storing the metadata associated with a variant only once instead of with each logged value.

· Waveform—You can use VI Logger and DIAdem to interact with waveform data.
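The BitArray storage format described above, one 32-bit unsigned integer per value, can be illustrated with simple bit packing. This Python sketch assumes element 0 maps to the least significant bit; the actual bit order Citadel uses is not stated here, and `pack_bits` and `unpack_bits` are hypothetical names.

```python
def pack_bits(bits):
    """Pack up to 32 Booleans into one unsigned 32-bit integer (DWORD)."""
    if len(bits) > 32:
        raise ValueError("a DWORD holds at most 32 bits")
    word = 0
    for i, bit in enumerate(bits):
        if bit:
            word |= 1 << i   # assumed order: element 0 -> least significant bit
    return word

def unpack_bits(word, count):
    """Recover `count` Booleans from a packed DWORD."""
    if not 0 <= word <= 0xFFFFFFFF:
        raise ValueError("not a 32-bit unsigned value")
    return [bool((word >> i) & 1) for i in range(count)]
```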

Database Size Considerations

Citadel does not impose a size limitation on databases. However, NI recommends you take the following performance considerations into account when planning a data logging system.

· Citadel database performance degrades slightly as the database grows. This behavior is due primarily to the physical limitations of hard disks and to the limitations inherent in the NTFS and FAT32 file systems.

· Archiving large databases takes a significantly longer time than archiving smaller databases.

· Database integrity is not related to database size.

The size of a large database typically falls in the range of 5GB to 10GB. The size of a medium-sized database typically is 2GB to 5GB, and the size of a small database is less than 2GB. In many applications, database lifespans range from 1 to 15 years.

Properly managing database size can improve your ability to maintain a database over its expected lifetime. NI suggests you consider the following tips to plan a healthy database system.

· Use an appropriate logging resolution and logging deadband.

· Use an appropriate lifespan setting when configuring a process.

· Plan for regular archiving operations to reduce the amount of data stored in the operational database. To achieve maximum performance, you might want to store only the last 1GB worth of data in the operational database. The actual amount of data you decide to keep in your operational database depends on the application.

Historical Alarm and Event Data

Each distinct piece of alarm and event data is composed of several fields of data of varying types. Although Citadel can store numeric data efficiently, Citadel is not optimized for storing record-based data. Storing alarm and event data in the primary Citadel format would use storage less efficiently and provide a less-than-optimal mechanism for returning alarm data for viewing.

NI stores alarm and event data in an attached Microsoft SQL Server 2005 Express Edition (SQL Server Express or SSE) relational database. SSE is freely redistributable and therefore does not impact the pricing of the DSC Module. SSE also can be upgraded to Microsoft SQL Server if necessary. NI recommends that you treat the attached SSE database as a part of Citadel and access it via the API provided with the DSC Module.

The alarms and events portion of a Citadel database is limited to 4GB. The SQL Server Express database imposes this restriction. If you require more than 4GB of alarm and event data in a single Citadel database, you can purchase SQL Server 2005 Workgroup Edition from Microsoft, which removes the 4GB restriction.

Database Files

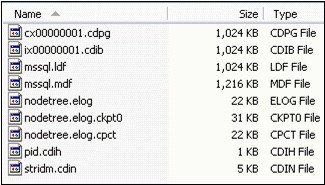

Citadel stores databases in a designated group of files on the hard drive. A Citadel database typically resides in a folder unique to that database. You can store only one Citadel database in a folder. NI recommends that you avoid placing non-database related files in a folder containing a Citadel database. A typical database consists of a set of files similar to those in Figure 3.

Figure 3: Typical Citadel Database Files

The number of .cdpg and .cdib files varies with the amount of data in a database. The nodetree.*, pid.cdih, and stridm.cdin files contain important information about the structure of the database. The mssql.* files contain historical alarm information. Citadel creates the mssql.* files the first time alarm or event data is written to the database.

Caution: Do not modify, move, or delete a database file while the database is attached. Doing so corrupts the database. If you modify or delete a database file while the database is detached, you might not be able to reattach the database, and you might lose some or all of the data in the database. If you move or copy a detached database, move or copy all database files.

The .cdpg files contain trace data. Citadel stores data in a compressed format; therefore, you cannot read and extract data from these files directly. You must use the Citadel API in the DSC Module or the Historical Data Viewer to access trace data. Refer to the Citadel Operations section for more information about retrieving data from a Citadel database.

Each of the 1,024KB .cdpg files contains a set of 4KB pages. Each page contains data for a single subtrace. Citadel attempts to use all 4KB of space in a page before opening a new page. If a subtrace is terminated or a new subtrace is started before the end of a page, the remainder of the space in the page goes unused. Citadel reclaims the remaining unused space only if all trace data in that page is removed or deleted.
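The arithmetic behind pages and files is straightforward: a 1,024KB .cdpg file holds 256 pages of 4KB, and because each page belongs to a single subtrace, every subtrace rounds up to whole pages. The following Python sketch estimates pages, unused slack, and file count from hypothetical per-subtrace payload sizes in bytes; it ignores any file or page headers Citadel might keep, and the function names are illustrative only.

```python
import math

PAGE_BYTES = 4 * 1024                       # 4KB page
FILE_BYTES = 1024 * 1024                    # 1,024KB .cdpg file
PAGES_PER_FILE = FILE_BYTES // PAGE_BYTES   # 256

def pages_for_subtrace(payload_bytes: int) -> int:
    """A subtrace never shares a page, so its data rounds up to whole pages."""
    return math.ceil(payload_bytes / PAGE_BYTES)

def page_usage(subtrace_payloads):
    """Return (pages used, unused bytes in partial pages, .cdpg files needed)."""
    pages = sum(pages_for_subtrace(b) for b in subtrace_payloads)
    slack = pages * PAGE_BYTES - sum(subtrace_payloads)
    files = math.ceil(pages / PAGES_PER_FILE)
    return pages, slack, files
```

Multiplying the page count by 4KB gives the same rough disk estimate as the pages property described earlier.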

Compression of Numeric Data

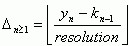

Citadel uses a complex compression algorithm to store numeric data. Equation 1 approximates this compression algorithm.

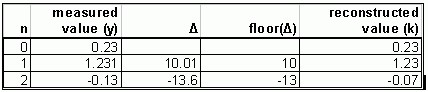

where $\Delta_{n \geq 1}$ is the Citadel delta value, $y$ is the set of recorded values, $k$ is the set of logged values, and $k_{n=0} = y_{n=0}$. The number of values, $n$, in a run is always less than or equal to 100.

Note: This section does not describe the full algorithm Citadel uses for compression. You do not need to understand this section to use or understand Citadel.

Data is typically acquired from a data source such as a data acquisition board, an analog input device, or a PLC. In most cases, measured values are passed through a series of deadbands before being sent to Citadel. Citadel then converts the values to deltas and compresses the values using the logging resolution you specify.

When queried for historical data, Citadel always returns a value within plus or minus the logging resolution of the measured value, using the logging resolution in effect when the data point was logged. The returned value might be closer to the measured value than the logging resolution requires, but it never deviates from the measured value by more than the logging resolution.

Table 1 provides sample delta calculations using Equation 1 and a logging resolution of 0.1, so every retrieved value lies within ±0.1 of the actual recorded value.

Table 1: Example Citadel Delta Calculation
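Equation 1 itself is not reproduced here, but the idea of logging integer deltas relative to a logging resolution, with an absolute value at the start of each run of at most 100 points, can be sketched. The Python code below is a generic quantized delta encoder built only from the definitions above; it is not Citadel's algorithm, and `encode` and `decode` are hypothetical names. It measures each delta from the previously reconstructed value so that rounding errors cannot accumulate within a run.

```python
def encode(values, resolution, run_length=100):
    """Split values into runs of (absolute start value, integer deltas)."""
    if resolution <= 0:
        raise ValueError("this sketch needs a positive logging resolution")
    runs = []
    for start in range(0, len(values), run_length):
        chunk = values[start:start + run_length]
        base = chunk[0]          # k_0 = y_0: an absolute value resets the encoder
        last = base              # value a reader will have reconstructed so far
        deltas = []
        for y in chunk[1:]:
            d = round((y - last) / resolution)   # whole resolution steps
            last += d * resolution               # track the reconstructed value
            deltas.append(d)
        runs.append((base, deltas))
    return runs

def decode(runs, resolution):
    """Rebuild the logged values k from the runs."""
    out = []
    for base, deltas in runs:
        k = base
        out.append(k)
        for d in deltas:
            k += d * resolution
            out.append(k)
    return out
```

Because each delta is rounded to the nearest step, every decoded value in this sketch lands within roughly half a resolution step of its recorded value, comfortably inside the ±0.1 bound for a resolution of 0.1.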

The logging resolution is a key parameter in how Citadel compresses numeric data. The logging resolution can be any real number but must be chosen in concert with the logging deadband. For example, if you specify a logging resolution of 10 units and a logging deadband of 1 unit, Citadel logs any change of at least 1 unit. However, due to compression and the logging resolution you set, Citadel cannot discern any value change under 10 in data retrieved from the database. To avoid this situation, always specify a logging resolution less than or equal to the logging deadband. Also specify the logging deadband as a power of ten unless you have an application-specific reason to do otherwise. Specifying a logging resolution of 0 always logs data with full resolution.
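The guidance above can be turned into a quick configuration check. In this Python sketch, `check_logging_settings`, `is_power_of_ten`, and `apply_deadband` are hypothetical helpers, and the deadband filter assumes a value is logged only when it differs from the last logged value by at least the deadband; the actual filtering in the DSC Module or Lookout may differ.

```python
import math

def is_power_of_ten(x: float) -> bool:
    if x <= 0:
        return False
    exponent = math.log10(x)
    return abs(exponent - round(exponent)) < 1e-9

def check_logging_settings(resolution: float, deadband: float) -> list:
    """Return warnings for a resolution/deadband pair, following the rules above."""
    warnings = []
    if resolution > deadband:  # a resolution of 0 (full resolution) never triggers this
        warnings.append("resolution exceeds deadband: some logged changes cannot be told apart on retrieval")
    if not is_power_of_ten(deadband):
        warnings.append("deadband is not a power of ten")
    return warnings

def apply_deadband(samples, deadband):
    """Keep a sample only when it moves at least `deadband` from the last kept sample."""
    kept = []
    for s in samples:
        if not kept or abs(s - kept[-1]) >= deadband:
            kept.append(s)
    return kept
```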

Citadel Operations

This section describes the various operations you can perform with Citadel.

Installing Citadel

When you install Citadel, the following components also are installed:

· NI Logos, which uses the NI-PSP networking protocol

· SSE 2005

The following NI products automatically install Citadel and its components.

· DSC Module

· Lookout

· VI Logger (SSE 2005 component is not installed)

· DIAdem (optional component)

You cannot install Citadel as an independent software component. If you need to use Citadel on a remote terminal, NI recommends that you purchase a DSC Module Run-Time System license for that terminal. The DSC Module Run-Time System includes the Citadel database drivers.

If you want to use the Historical Data Viewer to view Citadel trace data, you also must install MAX. MAX is automatically installed with most NI software.

The Citadel installer configures SSE 2005 with a mixed security mode. The default password for the SQL Server administrator sa is the computer ID. NI strongly recommends that you assign a strong password to the sa user.

Use the following command to set the SQL Server administrator password:

osql -U"sa" -P"" -Q"sp_password NULL, 'new_password', 'sa'"

Use the following command to change an existing password:

osql -U"sa" -P"old_password" -Q"sp_password 'old_password', 'new_password', 'sa'"

Creating, Attaching, and Detaching Databases

Citadel databases are managed primarily through the Historical Data Viewer component in MAX. You also can use the DSC Module to manage Citadel databases programmatically.

Creating a Database

To create a Citadel database, you must specify a database name and a path to a folder on a local hard drive. Citadel uses the path as the default name for the database if none is specified. You also can create Citadel databases on remote PCs. Refer to the Networking with Citadel section for more information about using Citadel across a network. If you attempt to create a database in a folder that already contains an unattached Citadel database, the existing database becomes attached. You can store only one Citadel database in a folder.

Attaching a Database

Attaching a database opens a connection to database files on disk. You then can review data that was previously stored on backup media for archival purposes. You can attach only databases located on a local hard disk. You cannot create or attach a Citadel database on a mapped network drive.

Detaching a Database

You can detach a database if you do not need immediate access to historical data or want to back up database files to a permanent storage medium such as a tape drive, DVD, or CD. You can detach Citadel databases manually using the Historical Data Viewer or programmatically using the DSC Module Historical API. Citadel releases control of the database files in a database directory once you detach the database. You then can manually move or delete database files safely. You must detach a database before you manually copy the database files or write them to permanent storage. If you fail to detach a database before manually copying database files, you might not get an accurate snapshot of database files, and the copied database might be corrupt when you try to attach it.

When detaching a database, you have the option to delete the database files. This option is useful if you no longer need the data in the database. You can detach or delete only local databases from a system. Citadel does not allow these operations for networked databases.

Archiving Data

Archiving data allows you to copy trace data from one location to another. You can perform archives of trace data within a database or to another local or remote database.

An archive operation requires four pieces of information: the source trace(s), the destination database, and the start and end times for the archive operation. Citadel does not archive data that has already been archived for a particular trace. For example, if you perform a daily archive operation for a time range from 1/1/2005 to now, Citadel archives the initial range of data once and then archives only the latest day's worth of data on subsequent archive requests.
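One way to picture the rule that Citadel does not re-archive data is a per-trace high-water mark. The Python sketch below is a simplified model under that assumption, not how Citadel tracks archived ranges; `Archiver` and its fields are hypothetical, time values are plain numbers (for example, day indexes), and each archive range is treated as half-open, [start, end).

```python
from dataclasses import dataclass, field

@dataclass
class Archiver:
    # Hypothetical bookkeeping: per trace, the end of the range already archived.
    archived_until: dict = field(default_factory=dict)

    def archive(self, trace, points, start, end):
        """Copy (time, value) points of `trace` in [start, end) not archived before."""
        lower = max(start, self.archived_until.get(trace, start))
        copied = [(t, v) for t, v in points if lower <= t < end]
        self.archived_until[trace] = max(self.archived_until.get(trace, end), end)
        return copied
```

A single high-water mark assumes archive ranges only grow forward in time; it would not notice a later request that reaches further into the past than earlier ones.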

The lifespan of all archived trace data is automatically set to 0, which specifies an infinite lifespan. Unless you reset the lifespan property by logging data to the archived trace, the data is not affected by a lifespan.

Citadel archives data by physically copying the trace pages from one database to another. Because Citadel must reconstruct a database page by page, performing an archive operation takes significantly longer than copying a raw file.

Two common use cases for archiving include destructive archiving and trace merging. You can use destructive archiving to move data from one database to another. Destructive archiving is a common process when backing up older data to a more permanent or offline storage database.

Trace merging allows you to take information from one trace and merge it into a trace with the same name. For example, assume that for redundancy purposes you have two computers, A and B, each logging the same data source to a local database. All client applications reference the database on computer A. If computer A goes down, client computers switch to computer B. However, you need a way to merge the redundant data from computer B into the same trace on computer A when A is rebooted. You can perform this operation by completing the following steps:

1. Create a temporary database on computer A.

2. Archive the backup trace from computer B to the temporary database on computer A. The trace has the same name and path as on computer B.

3. Rename the archived trace in the temporary database on computer A to have the same computer name as computer A.

4. Archive the data from the temporary database to the master database on computer A. This step creates a new subtrace in the original trace that contains the redundant data.

Compacting a Database

You can use Citadel to compact any attached database. The purpose of database compaction is to recover unused space in the database, but performing a database compaction does not guarantee that the database will be reduced in size. For example, suppose you have a database that contains two traces, each of which has one subtrace. Both traces are logged simultaneously, so a given .cdpg file contains 4KB pages for each trace interleaved together. After logging enough data to create at least two .cdpg files, you delete one of the traces. The data for the remaining trace still spans two .cdpg files, so the database still takes up approximately 2MB of disk space. If you then trigger a compaction, the Citadel engine might calculate that it can eliminate the second .cdpg file by compacting the data into the first .cdpg file. After the compaction is complete, Citadel deletes the empty .cdpg file. If the Citadel engine determines that it cannot remove at least one .cdpg file, the compaction has no effect on the database. Citadel then uses any empty space in an existing .cdpg file before creating a new one.
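The compaction decision in that example comes down to counting pages. This Python sketch assumes 256 pages per 1,024KB .cdpg file and that compaction is worthwhile only if the live pages fit into fewer files than currently exist; `compaction_would_help` is a hypothetical helper, not the Citadel engine's actual calculation.

```python
import math

PAGES_PER_FILE = (1024 * 1024) // (4 * 1024)  # 256 pages of 4KB per 1,024KB .cdpg file

def compaction_would_help(live_pages_per_file):
    """True if the live pages would fit into fewer .cdpg files than exist now."""
    files_now = len(live_pages_per_file)
    files_needed = math.ceil(sum(live_pages_per_file) / PAGES_PER_FILE)
    return files_needed < files_now
```

In the two-trace example, deleting one trace leaves roughly half of each file's pages live, for instance `[128, 128]`, which fits in one file, so the sketch reports that one file can be removed.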

Backing Up and Restoring a Database

You have several options for backing up Citadel databases. You must choose the method that best meets your system specifications.

The most basic option for backing up a Citadel database is to perform a regular archive operation from a local database to another database on a separate hard disk or on a networked Citadel database. You can perform the archive operation manually using the Historical Data Viewer, or you can write a utility application using the DSC Module to perform the archive automatically and on a regular basis.

Some applications require that you make a physical hardcopy backup of data at regular intervals. In this scenario, the preferred option is to plan a manual or automated archive process that archives data on a daily or hourly basis to a secure location. When the database grows to an adequate size, you can detach the archive database. For a CD, 650MB is an adequate size; for a DVD, 4GB is an adequate size. After you detach the archive database, write the database files to permanent storage. Using this method, each CD or DVD contains data for a specified time period.

To restore the backup database, copy the database files from each CD back to a hard drive and reattach each set of files as a separate database. You then can reconstruct the entire master database by archiving all databases together.

Citadel does not contain any built-in mechanisms for automated archiving or database restoration.

Logging Data to a Citadel Database

The DSC Module, Lookout, and VI Logger can log data to a Citadel database.

Logging Data with the DSC Module

Shared Variable Logging

After installing the DSC Module, you have the option to configure automatic logging of network-published shared variables. LabVIEW stores general logging configuration options such as the database path in the library configuration. The Shared Variable Properties dialog box contains logging configuration options for each shared variable.

Configuring Datasets

The DSC Module also includes a DataSet Marking I/O Server. Datasets are database organization features that help you organize runs of data that include multiple shared variables. When you log a dataset, the dataset appears as a special object in the Citadel database. You can retrieve data programmatically from a particular dataset or interactively view and export dataset runs in the Historical Data Viewer.

Citadel Writing API

The DSC Module 8.0 and later include an API for writing data directly to a Citadel trace. This API is useful to perform the following operations:

· Implement a data redundancy system for LabVIEW Real-Time targets.

· Record data in a Citadel trace faster than can be achieved with a shared variable.

· Write trace data using custom time stamps.

The Citadel writing API inserts trace data point-by-point with either user-specified or server-generated time stamps. You can write numeric, logical, string, bit-array, and variant data using this method. Benchmarks have demonstrated that you can write single-point data to a Citadel trace at approximately 80,000 points per second on a 3GHz Pentium IV.

Logging Data with Lookout

Lookout is an easy-to-use, Web-enabled, human machine interface (HMI) and supervisory control and data acquisition (SCADA) software system for demanding manufacturing and process control applications.

You can configure any I/O point exposed in a Lookout process for data and alarm or event logging. Lookout configures database properties on a per-process basis, much like in LabVIEW, so you can log to multiple databases simultaneously. Refer to the Lookout documentation for more information about configuring a Lookout process for data logging.

Logging Data with VI Logger

VI Logger is an easy-to-use data logging application for use with NI DAQ and FieldPoint modules. VI Logger organizes data by task and run. VI Logger is unique because it is the only application that allows you to log high-speed waveform data to Citadel.

Time Groups and Ghost Points

Time groups and ghost points ensure that Citadel has an accurate real-time record of trace values without logging redundant data.

Time Groups

Time groups are a set of traces or values that update at the same rate. Applications that use Citadel must configure a time group for every I/O device to which they connect. For example, if an application implements a connection to two different I/O modules respectively polling at rates A and B, that application configures two time groups with equivalent rates. If no hardware is associated with a variable, Citadel assigns a default time group to the trace that updates at about five times a second.
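Time group assignment amounts to bucketing traces by the rate of the device that updates them. In this Python sketch, the input maps hypothetical trace names to a device polling rate in hertz (or None when no hardware is associated), and `build_time_groups` is an illustrative helper, not a Citadel or DSC Module function. Traces without hardware go into a separate default group rather than being merged with a device that happens to poll at the same rate.

```python
from collections import defaultdict

DEFAULT_GROUP_RATE_HZ = 5.0  # "about five times a second" for traces without hardware

def build_time_groups(trace_rates):
    """Map ("device", rate) or ("default", rate) to the trace names in that group."""
    groups = defaultdict(list)
    for name, rate_hz in trace_rates.items():
        if rate_hz is None:
            key = ("default", DEFAULT_GROUP_RATE_HZ)
        else:
            key = ("device", rate_hz)
        groups[key].append(name)
    return dict(groups)
```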

Ghost Points

Citadel uses ghost points to maintain real-time awareness of traces that do not change frequently.

For example, suppose you configure a thermocouple measurement for logging with a deadband of 1°F. The thermocouple is in an environment that remains at a constant temperature most of the time, but you need to know when any major changes occur. If the temperature remains within 1°F for one hour, Citadel does not log redundant points, even if the temperature is sampled once every five seconds. However, if the logging system experiences a failure at some point during the sampling, you want to know the temperature at the time of the system failure. You also want to know the approximate time of the failure. Citadel handles this situation by logging a single ghost point for each trace. The ghost point in each trace updates constantly at a rate based on the time group specified for the trace. The ghost point always reflects the most recent time and value of a trace.

Citadel stores ghost points in a .tgpf file that updates once every ten seconds. This file ensures that Citadel has an accurate record of the value for every trace at the time of a system failure.
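The thermocouple example can be simulated to show how ghost points complement deadband logging. This Python sketch is a hypothetical model, not Citadel's implementation: `GhostPointTracker` logs a real point only on a change of at least the deadband, always keeps the newest sample as the ghost point, and copies the ghost to `persisted_ghost` at most once every ten seconds as a stand-in for updating the .tgpf file.

```python
from dataclasses import dataclass, field
from typing import Optional, Tuple

@dataclass
class GhostPointTracker:
    deadband: float
    persist_interval_s: float = 10.0                     # ghost file update period from the text
    logged: list = field(default_factory=list)           # real points (time, value)
    ghost: Optional[Tuple[float, float]] = None          # newest sample, always current
    persisted_ghost: Optional[Tuple[float, float]] = None
    last_persist: Optional[float] = None

    def sample(self, t: float, value: float) -> None:
        # Real points: only changes of at least one deadband.
        if not self.logged or abs(value - self.logged[-1][1]) >= self.deadband:
            self.logged.append((t, value))
        # The ghost point tracks every sample, so the latest time and value are known.
        self.ghost = (t, value)
        # Periodically persist the ghost (stand-in for the .tgpf file update).
        if self.last_persist is None or t - self.last_persist >= self.persist_interval_s:
            self.persisted_ghost = self.ghost
            self.last_persist = t
```

If the system failed after an hour of five-second samples that stayed within the deadband, only one real point would exist, but the persisted ghost would still record roughly when sampling stopped and what the temperature was.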

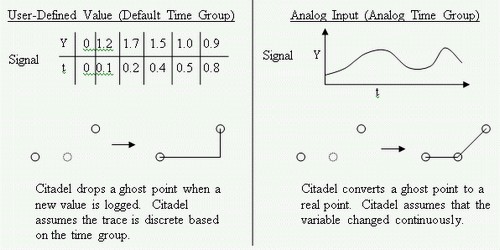

When Citadel detects a value change on a given input, it converts the ghost point to a real point in one of two ways. If a trace is configured to use the analog time group, Citadel assumes that the data is a continuously changing variable. If a trace is configured to use the default time group, Citadel assumes that the change is discrete. All user-defined values use the default time group. Figure 4 illustrates the default and analog time groups.

Figure 4: Default and Analog Time Groups

Logging Back-in-Time

Most data logging systems generate ever-increasing time stamps. However, if you manually set the system clock back-in-time, or if an automatic time synchronization service resets the system clock during logging, a back-in-time data point might be logged. Citadel handles this case in two ways.

When a point is logged back-in-time, Citadel checks whether the difference between the point time stamp and the last time stamp in the trace is less than the larger of the global back-in-time tolerance and the time precision of the subtrace. If the difference is within the tolerance, Citadel ignores it and logs the point using the last time stamp in the trace. For example, the Shared Variable Engine in LabVIEW 8.0 and later uses a tolerance of 10 seconds. Thus, if the system clock is set backward by up to 10 seconds from the previous time stamp, a value is logged in the database on a data change, but its time stamp is set equal to that of the previous logged point. If the clock is set back more than 10 seconds, Citadel creates a new subtrace and begins logging from that time stamp.

Beginning with LabVIEW DSC 8.0, you can define a global back-in-time tolerance in the system registry. Earlier versions of DSC and Lookout always log back-in-time points. Set the backInTimeToleranceMS value under the HKLM\SOFTWARE\National Instruments\Citadel\5.0 registry key. Specify this value in milliseconds. The default value is 0, which indicates no global tolerance.
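The back-in-time decision can be written as a small function. This Python sketch follows the rule described above under the assumption that all times are in seconds (so a backInTimeToleranceMS registry value would be divided by 1000 first); `resolve_timestamp` is a hypothetical name, not a Citadel call.

```python
from typing import Optional, Tuple

def resolve_timestamp(new_ts: float, last_ts: Optional[float],
                      global_tolerance_s: float,
                      subtrace_precision_s: float) -> Tuple[float, bool]:
    """Return (timestamp to log, whether a new subtrace is needed)."""
    if last_ts is None or new_ts >= last_ts:
        return new_ts, False                  # normal, non-decreasing case
    tolerance = max(global_tolerance_s, subtrace_precision_s)
    if last_ts - new_ts < tolerance:
        return last_ts, False                 # within tolerance: reuse last timestamp
    return new_ts, True                       # too far back: start a new subtrace
```

With a 10-second tolerance, as in the Shared Variable Engine example, a clock that jumps back 5 seconds produces a point stamped at the previous time, while a 20-second jump starts a new subtrace.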

Retrieving Data from a Citadel Database

NI provides numerous options for accessing Citadel. You can access Citadel programmatically using APIs in the DSC Module, view data interactively using the Historical Data Viewer component in MAX, or retrieve data using SQL queries with the Citadel ODBC interface. C, C++, and Visual Basic APIs do not exist for Citadel.

Retrieving Data with LabVIEW

Using the APIs in the DSC Module is the most efficient way to retrieve data from Citadel programmatically. The DSC Module includes an advanced API for retrieving historical trace and alarm or event data. You also can request this data in interpolated or raw form. Raw data contains the actual values recorded in the database and the time stamps associated with those values. Refer to Table 1 for an overview of raw data. A request for interpolated data requires you to specify a time range and time interval. The returned data is an interpolated approximation of the trace values at a given time. You can specify either linear or stair-step approximation when requesting interpolated data.
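The difference between linear and stair-step interpolation is easiest to see on a few raw points. The Python sketch below is illustrative only: `interpolate` and `resample` are hypothetical helpers, the raw data is a list of (time, value) pairs sorted by time, and values after the last raw point are held constant, which may not match the DSC Module's behavior.

```python
from bisect import bisect_right

def interpolate(raw, t, mode="linear"):
    """Estimate the value at time t from raw (time, value) pairs sorted by time.

    mode="linear" draws a straight line between neighboring points;
    mode="step" holds the previous value until the next point (stair-step).
    """
    if mode not in ("linear", "step"):
        raise ValueError("mode must be 'linear' or 'step'")
    times = [p[0] for p in raw]
    i = bisect_right(times, t) - 1          # last raw point at or before t
    if i < 0:
        raise ValueError("t is before the first raw point")
    t0, v0 = raw[i]
    if mode == "step" or i == len(raw) - 1:
        return v0                           # hold the last known value
    t1, v1 = raw[i + 1]
    return v0 + (v1 - v0) * (t - t0) / (t1 - t0)

def resample(raw, start, end, interval, mode="linear"):
    """Return interpolated (time, value) pairs every `interval` from start to end."""
    count = int((end - start) // interval) + 1
    return [(start + k * interval, interpolate(raw, start + k * interval, mode))
            for k in range(count)]
```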

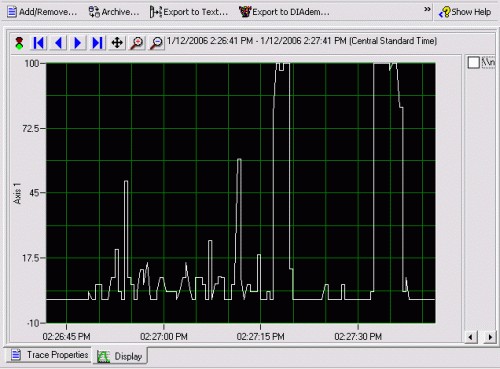
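To make the difference between raw and interpolated data concrete, the following Python sketch resamples a list of raw (time, value) points over a time range at a fixed interval, using either linear or stair-step approximation. It only illustrates the concept; it is not the DSC Module API, and the `resample` function and its parameters are hypothetical. Two behaviors are assumptions of this sketch: times before the first raw point yield `None`, and linear mode holds the last value after the final raw point instead of extrapolating.

```python
from bisect import bisect_right


def resample(raw, start, stop, interval, mode="linear"):
    """Approximate a trace at start, start + interval, ..., up to stop.

    raw  -- list of (time, value) pairs sorted by time (the "raw" data)
    mode -- "linear" interpolates between neighbors; "stair" holds the last value
    """
    if mode not in ("linear", "stair"):
        raise ValueError("mode must be 'linear' or 'stair'")
    if interval <= 0:
        raise ValueError("interval must be positive")
    times = [t for t, _ in raw]
    steps = int((stop - start) // interval)
    result = []
    for k in range(steps + 1):
        t = start + k * interval
        i = bisect_right(times, t)      # number of raw points at or before t
        if i == 0:
            result.append((t, None))    # no data logged yet at this time
        elif mode == "stair" or i == len(raw):
            result.append((t, raw[i - 1][1]))  # hold the most recent value
        else:
            (t0, v0), (t1, v1) = raw[i - 1], raw[i]
            result.append((t, v0 + (v1 - v0) * (t - t0) / (t1 - t0)))
    return result
```

For raw points `[(0, 0.0), (10, 10.0), (20, 0.0)]` sampled every 5 time units, linear mode yields values 0, 5, 10, 5, 0, while stair-step mode yields 0, 0, 10, 10, 0. Stair-step approximation suits values that hold until the next logged change; linear approximation suits continuously varying signals.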

Retrieving Data with the Historical Data Viewer

The Historical Data Viewer component in MAX contains a basic interface for viewing and exporting Citadel trace data. You can use this interface to export historical data to a text file and use cursors to perform a basic analysis of the trace data, as shown in Figure 5.

Figure 5: Using the Historical Data Viewer to View Citadel Data

You can configure trace views in the Historical Data Viewer to show multiple overlapping traces. You also can configure trace views in trace groups.

Retrieving Data with Lookout

Lookout supports historical data charting with the NI HyperTrend control. The NI HyperTrend control provides a real-time chart view that you can scroll, zoom, or pan. Lookout also provides an SQLExec Object with which you can calculate and display summary statistics for a set of Citadel traces.

Retrieving Data with ODBC and ADO.NET

The Citadel database includes an ODBC driver that enables you to retrieve data directly from third-party applications. The Citadel 5 ODBC driver complies with the SQL-92 and ODBC 2.5 standards, so any client that follows these standards can retrieve data from a Citadel 5 database.

ADO clients can use the ODBC driver through the Microsoft OLE DB Provider for ODBC Drivers to access Citadel 5.2 and later databases.

Retrieving Data with DIAdem

DIAdem provides a direct interface to Citadel with which you can perform advanced analysis and reporting directly on Citadel data. Refer to the NI Web site at ni.com/diadem for more information about the DIAdem analysis package.

Networking with Citadel

The Citadel 5 database is fully network-aware and contains a host of built-in networking tools and enhancements. Citadel inherently supports remote database logging. When logging remotely, Citadel keeps a local cache of all data intended for permanent storage in the remote database. In the case of a network failure, Citadel sends all data cached locally to the destination database when the network connection is restored.

You also can perform most other database functions described in the Citadel Operations section of this white paper across a network. However, you cannot perform operations that would delete or remove data across a network. For example, you cannot remotely detach or delete databases, and you cannot remotely perform destructive archiving.

The Citadel 5 database relies on Logos for proper networking operation. Specifically, Citadel uses the NI PSP Service Locator service to communicate with a remote instance of Citadel. The Logos services are configured automatically when you install Citadel. Refer to the NI Web site at ni.com for information about configuring Logos to work with a firewall.

Note: Prior to LabVIEW 2012, NI PSP Service Locator was listed as NI Service Locator in Windows Services.

Database Caching

To achieve optimal performance, Citadel manages a local cache of remote databases. Citadel configures and manages this cache automatically, and the cache is completely transparent to the user. Citadel uses caching in two primary scenarios: logging to a remote database from the local computer and viewing real-time data from a remote server.

When logging data directly to a remote database, Citadel guarantees data integrity by logging data to a local cache of the remote database. Citadel sends a signal to the remote database indicating that cached data is waiting to be transferred. The remote database then schedules a data transfer. If the network connection is lost or bandwidth is limited, the remote database retries the transfer until all data is copied. Citadel clients connected to the remote server cannot see the data until it has been transferred from the local cache to the remote database.
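The store-and-forward sequence above (log locally, signal the remote side, let the remote side pull the data and retry until done) can be modeled in a few lines of Python. This is a hypothetical sketch of the pattern, not Citadel's protocol: the class names, the `link_up` flag that simulates a network outage, and the `batch_size` parameter that simulates limited bandwidth are all illustrative assumptions.

```python
from collections import deque


class LocalCache:
    """Hypothetical local cache holding points destined for a remote database."""

    def __init__(self):
        self.pending = deque()
        self.notify = None  # callback used to signal the remote side

    def log(self, point):
        self.pending.append(point)  # data is stored safely on the local side first
        if self.notify:
            self.notify()           # tell the remote database that data is waiting


class RemoteDatabase:
    """Hypothetical remote database that pulls cached data when it can."""

    def __init__(self):
        self.committed = []         # only data here is visible to remote clients
        self.transfer_requested = False

    def on_data_waiting(self):
        self.transfer_requested = True  # schedule a transfer

    def try_transfer(self, cache, link_up, batch_size=2):
        """Make one transfer attempt; return True once the cache is drained."""
        if not link_up:
            return False            # network down: data stays cached, retry later
        for _ in range(min(batch_size, len(cache.pending))):
            self.committed.append(cache.pending[0])  # copy first...
            cache.pending.popleft()                  # ...then remove from the cache
        done = not cache.pending
        if done:
            self.transfer_requested = False
        return done
```

In this model, remote clients see nothing until `try_transfer` succeeds, and a failed attempt loses no data because points leave the local cache only after they have been copied, which mirrors the integrity guarantee described above.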

When viewing data from a remote database, Citadel maintains a local cache of the remote data. This local cache decreases network bandwidth usage and improves performance when multiple clients are connected to a central server. Citadel deletes local cache data if the data is not accessed for an extended period of time. If you view or query large volumes of data from a remote Citadel database, the local cache might grow as large as the original database itself. The cache therefore might place an additional disk resource requirement on PCs used as Citadel clients. Consider this potential requirement when planning the database architecture.
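The viewing cache described above can be pictured as a read-through cache with idle-time eviction. The Python sketch below is a hypothetical model, not Citadel's caching code: the `ViewCache` class, the `fetch` callback standing in for a remote read, and the `max_idle_s` threshold standing in for "an extended period of time" are all assumptions. Time is passed in explicitly to keep the example deterministic.

```python
class ViewCache:
    """Hypothetical client-side cache of remote trace blocks with idle eviction."""

    def __init__(self, fetch, max_idle_s):
        self.fetch = fetch            # reads one block from the remote server
        self.max_idle_s = max_idle_s  # evict blocks idle longer than this
        self.blocks = {}              # key -> (data, last_access_time)
        self.remote_reads = 0

    def get(self, key, now):
        if key in self.blocks:
            data, _ = self.blocks[key]  # served locally: no network traffic
        else:
            data = self.fetch(key)      # cache miss: read from the remote server
            self.remote_reads += 1
        self.blocks[key] = (data, now)  # record the most recent access
        return data

    def evict_idle(self, now):
        """Delete blocks not accessed within max_idle_s; return their keys."""
        stale = [k for k, (_, t) in self.blocks.items() if now - t > self.max_idle_s]
        for k in stale:
            del self.blocks[k]
        return stale
```

Repeated reads of the same block cost only one remote read, which is where the bandwidth savings come from. The same model also shows the disk-space caveat: every block a client views stays in `blocks` until it goes idle, so viewing a large share of a remote database grows the cache toward the size of that database.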

Citadel Security and Robustness

This section describes ways to maintain a secure and robust Citadel database.

Security

Citadel does not implement any built-in security features. You must secure data by placing all PCs on the data logging network on an isolated subnet or an isolated network. Otherwise, any user with access to Citadel on the network can view, modify, or copy potentially sensitive Citadel data. NI also recommends that you password-protect all Citadel terminals to prevent unauthorized access to data.

Database Robustness

The Citadel 5 database is generally highly reliable. NI routinely tests database reliability for every major release in at least the following areas:

· Disk overflow—Citadel does not attempt to log more data than the available disk space can hold. If Citadel predicts a disk overflow error, it generates an alarm and stops logging data.

· Alarm database overflow—Citadel does not fail if the SSE portion of the database exceeds the 4GB limit. Alarms are cached in the Citadel portion of the database until space in the associated SSE database is available.

· Manual corruption—During testing, Citadel databases are corrupted manually by using a hex editor to modify data files at random locations. Citadel attempts to recover as much data as possible from the database. Citadel recovers data in 4 KB blocks.

· Abrupt power failure—During testing, computers logging to Citadel databases are subjected to abrupt power loss scenarios. Citadel attempts to recover from all known corruptions that this procedure causes. However, when power is terminated unexpectedly, an unpredictable amount of data might be lost from any point in a Citadel database. The amount of lost data can vary greatly depending on the rate at which data is being logged and the number of traces being logged.

Note: NI recommends that you use a certified, uninterruptible power supply (UPS) with any computer used to log Citadel data.

· Network outage—During testing, Citadel operations such as remote logging, remote archiving, and remote data viewing are interrupted during periods of data transmission. Citadel should recover all remaining data when the network connection is restored.
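The disk overflow behavior listed above (predict an overflow, raise an alarm, stop logging) can be sketched as follows. The prediction method Citadel actually uses is not described in this paper, so the projection below (logging rate multiplied by a look-ahead horizon, compared against free space minus a safety reserve) is an assumption, and `predict_disk_overflow`, `GuardedLogger`, `horizon_s`, and `reserve_bytes` are hypothetical names. Free space is read with the standard library's `shutil.disk_usage` by default, or injected for testing.

```python
import shutil


def predict_disk_overflow(free_bytes, log_rate_bytes_per_s, horizon_s, reserve_bytes=0):
    """Return True if logging at this rate for horizon_s would eat into the reserve."""
    projected_bytes = log_rate_bytes_per_s * horizon_s
    return projected_bytes > free_bytes - reserve_bytes


class GuardedLogger:
    """Hypothetical logger that alarms and stops before the disk fills up."""

    def __init__(self, free_bytes_fn=None, horizon_s=60, reserve_bytes=100 * 1024**2):
        # Default: query free space on the current directory's volume.
        self.free_bytes_fn = free_bytes_fn or (lambda: shutil.disk_usage(".").free)
        self.horizon_s = horizon_s
        self.reserve_bytes = reserve_bytes
        self.logging = True
        self.alarms = []

    def check(self, log_rate_bytes_per_s):
        if self.logging and predict_disk_overflow(
            self.free_bytes_fn(), log_rate_bytes_per_s, self.horizon_s, self.reserve_bytes
        ):
            self.alarms.append("disk overflow predicted")  # raise an alarm...
            self.logging = False                           # ...and stop logging
```

Checking ahead of time, rather than reacting to a failed write, is what lets a logger stop cleanly instead of leaving a partially written file on a full disk.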