Transform Your Processes and Workflows: Change the Value of Test

Overview

These days, it’s impossible to escape digital transformation. From moving to a model-based enterprise to implementing an Industry 4.0 approach, transformation means different things to different people. But it’s needed to keep pace with advancements in technology, and it enables us to bring new features and products to market faster.

Verification and validation, or V&V, teams can—and should—play a central role in their organization’s digital transformation. They are the ones to provide product performance insights that both ensure quality and enable quicker decision making. The real question is, how can V&V test teams do this without a budget or resource increase? How can these teams have time to reach these essential insights with the continual added complexity of devices that need more tests done in a shorter amount of time?

Let’s discuss how assessing your V&V workflows and processes can identify where small changes could have a large impact. We’ll also look at how V&V teams can become more efficient so that they make fewer tradeoffs in the V&V phase, which reduces risk. Also, consider taking our short quiz to identify your test strategy profile and get customized recommendations for processes, systems, and data that align with your team’s goals.

Identifying Limitations in Your Current Workflow

To be able to understand limitations, we need to start at a high level and break the entire workflow down into pieces. This lets us understand how it fits into the overall process of developing, testing, manufacturing, and shipping products to customers.

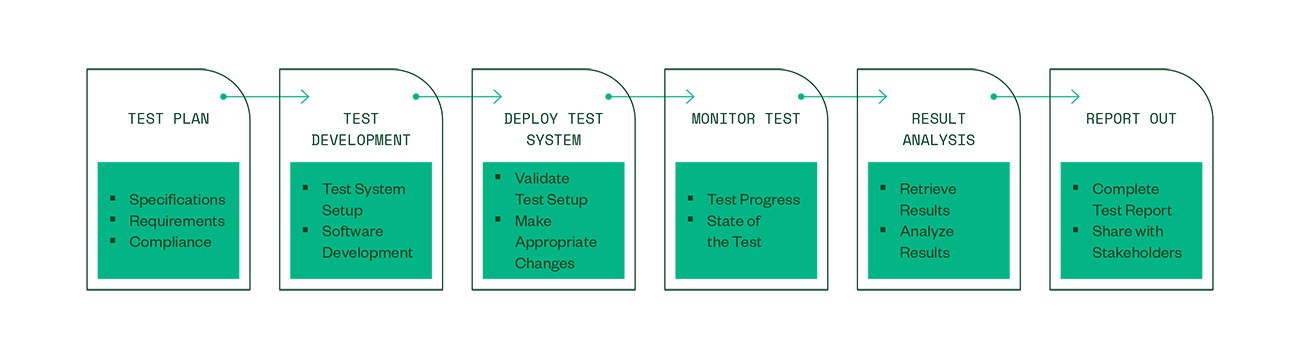

In a typical workflow, V&V engineers get involved and start discussing specifications and requirements with design engineers as the Engineering Validation Test (EVT) stage approaches. Let’s review the high-level steps.

- Create the Test Plan—Gather requirements to see what equipment is needed and which software test routines must be developed. Sign off on test plan and cases.

- Build the Test System—Build systems and develop software.

- Deploy the Test System—Hand off to a technician tasked to deploy and run the test—or the V&V engineer walks over to the tester, deploys the software, and starts the test.

- Monitor Test—Monitor test execution to enable teams to react quickly to an unexpected failure. Since it’s rare to have the resources available to watch the entire progress in V&V, it’s more likely that engineers or technicians will walk over to check as they have availability.

- Analyze Results—Manually transfer results from the tester to the appropriate team for analyzing.

- Report Results—Share outcome with design engineers and other stakeholders.

Depending on whether the product passed or failed, the next step could be either another design iteration and test or a handoff to manufacturing for production.

Figure 1. Typical V&V Test Engineering Workflow

How to Improve Inefficiencies

If we step back and assess this typical workflow, we’ll notice there are many steps that require manual interaction. These unintentional inefficiencies may not seem like much at first, but when you add up all the walking around and data transfer steps, the impact is significant. Products might not get to market on time or, worse, we end up accepting risk over quality because there isn’t time to rerun all the tests.

While the steps themselves often have areas we can improve on, a common thing to overlook is how teams transition between these steps. If you are inefficient in the way you move from one step to the next, you lose not only productivity but also traceability since it’s a manual process to document when changes are made. Let’s look at some areas that, if optimized and automated, can add a lot of efficiency to your workflows.

The Test Plan

Companies that see strong results from their digital transformation efforts often succeed in breaking down the silos between departments. For V&V teams specifically, this means having a process where the team is involved and aware of which products are in the pipeline and what features and functionality are intended to be built in. The earlier V&V is involved, the better they can plan.

Being involved doesn’t just mean being part of an endless stream of meetings. It also means having access to the data generated by the design teams, especially simulation data [1]. The greater the understanding V&V teams have of a product, the better the test plans will be.

It is important to remember that while V&V test engineers may want to work closer and earlier with design engineering, we also need to involve our production test team earlier. Production should understand what we are testing early on, which test methodologies we use, and ultimately in which areas of the test plan we found issues. Then, they can be better prepared to start manufacturing and testing the final product once it is released from V&V.

Test Development

V&V test teams often need a variety of equipment available to be able to perform tests across a spectrum of technologies. They also need to make sure they have the test coverage in their system to test against corner-case scenarios. Needless to say, they need a spectrum of expensive equipment just to do V&V.

Hardware

We talk about reuse and repurposing, but finding out what equipment can be repurposed for a test can be a significant time sink. We may need to walk around to the different testers we have, looking for a piece of equipment that fits our needs, seeing if it’s currently being used, and tracking down who is responsible for that test and whether they will be done with it by the time we need it—all of this takes time!

If we manage to find the right equipment, there is an additional dimension to consider. How close is the equipment to needing calibration? Can we complete our test without compromising quality by using uncalibrated equipment? With all these complications, we often see the default action is initiating procurement of new equipment. New equipment is not only expensive, but the procurement process consumes more precious time.

Instead of running this routine every time we need a piece of equipment, we need to automate the tracking of devices within systems. That way we can see what equipment is in which tester, whether a test is running, and the utilization rate of the equipment in that specific tester, all at a quick glance. Having this capability eliminates the long, tedious search for test equipment. When you have these insights, you enable your teams to make data-driven equipment investments and only procure equipment when there truly is a need for it. This frees up the budget to do other things. What started as a way to improve efficiency in one part of your process now also helps you better control cost.
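As a rough illustration of what automated equipment tracking could answer, the sketch below models a small inventory and looks up idle instruments whose calibration stays valid for the duration of a planned test. The `Instrument` fields, the `find_available` helper, and the sample data are all hypothetical; they stand in for whatever asset management system you actually use.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Instrument:
    """Hypothetical inventory record for one piece of test equipment."""
    name: str
    tester: str            # tester the instrument is installed in
    in_use: bool           # True while a test is running on that tester
    busy_hours: float      # hours in use during the reporting window
    window_hours: float    # length of the reporting window in hours
    calibration_due: date

    @property
    def utilization(self) -> float:
        """Fraction of the reporting window the instrument was busy."""
        return self.busy_hours / self.window_hours if self.window_hours else 0.0


def find_available(inventory, name, needed_until):
    """Return idle instruments of the requested type whose calibration
    remains valid past `needed_until`, least utilized first."""
    candidates = [
        item for item in inventory
        if item.name == name
        and not item.in_use
        and item.calibration_due > needed_until
    ]
    return sorted(candidates, key=lambda item: item.utilization)


# Illustrative data only.
inventory = [
    Instrument("oscilloscope", "tester-A", True, 150.0, 168.0, date(2025, 6, 1)),
    Instrument("oscilloscope", "tester-B", False, 20.0, 168.0, date(2025, 1, 15)),
    Instrument("oscilloscope", "tester-C", False, 40.0, 168.0, date(2025, 9, 30)),
]

matches = find_available(inventory, "oscilloscope", date(2025, 3, 1))
for item in matches:
    print(item.tester, f"{item.utilization:.0%}")
```

Here the instrument in tester-A is excluded because it is in use, and the one in tester-B because its calibration lapses before the test would finish. The same utilization figures could inform whether a new purchase is really needed.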

Software

Developing software for test systems is one of the most time-consuming tasks V&V test engineers need to do, but it is also one of the most important tasks. Because we have limited time and deadlines to meet, it might seem like a good idea to have V&V test engineers each choose the language they want for developing software. The assumption is that they will pick whatever they are proficient in using. But what happens when you end up with a dozen different programming languages across your software system? Without a common framework and set of coding rules, you may build testers that are so customized that they are impossible to maintain and their code is hard to reuse. It can be even harder when the engineer who created the code is no longer with the company or has moved on to a different role. After you’ve built the same type of tester two or three times, you really feel the impact of this inefficiency.

When considering how we can improve this situation, start with the broad understanding that we may run many different types of tests in our V&V labs. Some may do simple control based on simple user inputs, whereas others may need sophisticated test routines that are run while adhering to strict timing requirements. This spectrum matters when we are looking for efficiency gains: we can use no-code/low-code options for simple tests and reserve software development time for the tests that truly need it.

For the more complex tests, we can gain efficiency with a standardized, open framework. This framework can call code modules developed in different languages and has the flexibility to be customized to your needs. Getting the right foundation in place increases efficiency and reduces risk as the common components you need—data collection, pass/fail evaluation, integration with other backend systems, and more—are developed once and reused across every tester. V&V test engineers can then focus on building the test routines and not the whole framework, ultimately standing up testers faster. But to truly take advantage of this, you must put in place processes that define when and where to use the framework over a no-code option. Getting this step right means everyone has a common understanding of how to build testers and follows the same set of rules, simplifying development and maintenance.
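To make the idea of shared components concrete, here is a minimal sketch of a runner that owns data collection and pass/fail evaluation, so that each tester only supplies its measurement routines. The names `SequenceRunner`, `Limit`, and `StepResult` are invented for this illustration and do not refer to any particular product or framework.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Limit:
    """Inclusive pass/fail limits for one measurement."""
    low: float
    high: float


@dataclass
class StepResult:
    step: str
    value: float
    passed: bool


class SequenceRunner:
    """Hypothetical shared runner: written once, reused on every tester."""

    def __init__(self) -> None:
        self._steps: List[Tuple[str, Callable[[], float], Limit]] = []

    def add_step(self, name: str, measure: Callable[[], float], limit: Limit) -> None:
        """Register a measurement routine and the limits it is judged against."""
        self._steps.append((name, measure, limit))

    def run(self) -> List[StepResult]:
        """Execute every step in order and evaluate it against its limits."""
        results = []
        for name, measure, limit in self._steps:
            value = measure()
            results.append(StepResult(name, value, limit.low <= value <= limit.high))
        return results


# The lambdas stand in for real instrument calls.
runner = SequenceRunner()
runner.add_step("supply_voltage_V", lambda: 3.31, Limit(3.2, 3.4))
runner.add_step("idle_current_mA", lambda: 12.5, Limit(0.0, 10.0))

results = runner.run()
for result in results:
    print(result.step, result.value, "PASS" if result.passed else "FAIL")
```

Because the measurement routines are plain callables, they could wrap code written in other languages, while limit checking and result collection stay in one place.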

Deploy Test System

For many companies, deploying a test system is a manual process that involves moving the test software from a development machine onto the tester and making sure everything works as expected. Often, this step spawns small tweaks and changes in the code, typically made directly on the tester. Because of the manual process, V&V test engineers must later remember to move the final version back to their development machine and update the documentation, version history, and so on, for traceability and compliance purposes. Each manual process takes time and increases the likelihood of errors.

Manual processes do not take advantage of connected systems and require that the V&V engineer is present in the laboratory when the system is deployed. The distance between the engineer’s desk and the lab can add considerable overhead just in the time it takes that person to walk back and forth. Also, consider something as simple as what would happen if the USB stick used to transfer the test program became corrupted, or perhaps after the engineer deployed the test program to the testers, they discovered they needed to make a significant change that required them to work from their development machine at their desk. A whole spectrum of things could require that engineer to go back and forth to the lab, increasing the time it takes to deploy the system.

To increase efficiency in this part of the process, you need connected and accessible systems that can be viewed and managed from a remote location at your company. Your teams must be able to view what assets and software are in the system to ensure system readiness. Deployment then happens remotely, so there is full version history and traceability to what was deployed and who deployed it. Automation and remote management of your systems not only increase your operational efficiency but also ensure consistent traceability to each system.
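One way to picture the traceability described above is a deployment log that records what was deployed, where, by whom, and when, together with a checksum of the exact package. The sketch below is only an assumption about how such a record might look; `DeploymentRecord`, `record_deployment`, and `current_version` are hypothetical names, and the actual transfer to the tester is left out.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import List, Optional


@dataclass(frozen=True)
class DeploymentRecord:
    """Hypothetical audit entry for one deployment to one tester."""
    tester: str
    package_name: str
    version: str
    sha256: str        # checksum of the exact bytes that were deployed
    deployed_by: str
    deployed_at: str   # UTC timestamp in ISO 8601 format


def record_deployment(log: List[DeploymentRecord], tester: str, package_name: str,
                      version: str, package_bytes: bytes,
                      deployed_by: str) -> DeploymentRecord:
    """Append an audit entry describing what was deployed and who deployed it."""
    record = DeploymentRecord(
        tester=tester,
        package_name=package_name,
        version=version,
        sha256=hashlib.sha256(package_bytes).hexdigest(),
        deployed_by=deployed_by,
        deployed_at=datetime.now(timezone.utc).isoformat(),
    )
    log.append(record)
    return record


def current_version(log: List[DeploymentRecord], tester: str) -> Optional[str]:
    """Return the most recently deployed version on a tester, if any."""
    for record in reversed(log):
        if record.tester == tester:
            return record.version
    return None


# Illustrative usage with placeholder package contents.
log: List[DeploymentRecord] = []
record_deployment(log, "tester-A", "thermal_cycle", "1.0.0", b"package v1", "engineer-1")
record_deployment(log, "tester-A", "thermal_cycle", "1.0.1", b"package v2", "engineer-2")
print(current_version(log, "tester-A"))
```

With a log like this, a tweak made during deployment becomes a new recorded version instead of an undocumented change on the tester.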

Monitor Test

This part of the process is often overlooked when you are trying to increase efficiency. For tests that are somewhat automated, it can feel like after we get the test running, we can just stop by the tester once in a while to take a look at the status of the test and get a feel for when it will complete. There are better ways to handle this:

- Reduce the Steps—Though it might be great for their fitness trackers, the time it takes for your team to go back and forth between their desk and the lab adds up very quickly.

- Get Exact Timing—It’s essential to know exactly when the test finishes. If it failed, has it just been sitting and doing nothing for three hours before someone walked over to check?

- Remote Access—Your team needs remote access to systems so that they can check how a test is progressing at any time. This monitoring should include alarm functionality to notify them precisely when something goes wrong so that they can take the appropriate action early and avoid time-consuming, costly reruns.
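The alarm behavior in the points above can be sketched as a simple check on the latest status message from a tester: raise an alarm as soon as a test reports failure, or when a running test has gone quiet for too long. The `Heartbeat` structure, the status strings, and the 300-second default timeout are assumptions made for illustration, not part of any specific monitoring product.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Heartbeat:
    """Hypothetical status message sent periodically by a tester."""
    tester: str
    timestamp_s: float   # time the message was sent, in seconds
    status: str          # "running", "failed", or "done"


def check_alarm(latest: Heartbeat, now_s: float,
                stall_timeout_s: float = 300.0) -> Optional[str]:
    """Return an alarm message if the test failed or stopped reporting,
    otherwise None."""
    if latest.status == "failed":
        return f"{latest.tester}: test failed"
    silence_s = now_s - latest.timestamp_s
    if latest.status == "running" and silence_s > stall_timeout_s:
        return f"{latest.tester}: no heartbeat for {silence_s:.0f} s"
    return None


# Illustrative checks with made-up timestamps.
print(check_alarm(Heartbeat("tester-A", 1000.0, "running"), now_s=1100.0))
print(check_alarm(Heartbeat("tester-A", 1000.0, "running"), now_s=1400.0))
print(check_alarm(Heartbeat("tester-B", 1000.0, "failed"), now_s=1001.0))
```

In practice the returned message would be routed to email, chat, or a dashboard so that a failed test does not sit idle until someone walks over to check.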

Results Analysis and Reporting

This part of the process is critical and notorious for taking a long time. Often, we talk about automation and automated test systems, but we tend to forget to look at this part of the process.

When a test is complete, test engineers need to understand why a product performed the way it did. Did we get the outcomes we expected from the test scenarios? The results are often stored on the tester itself, resulting in a manual process for engineers to retrieve them. Then reviewing, extracting, transforming, and analyzing the data makes for a lengthy process—especially if you aren’t doing all this automatically.

Chances are that your company drives efficiency in other areas, so it would make sense to standardize your analysis process across all products. Running standard analysis has proven to reduce test costs and increase both the amount of data analyzed and the insights gained from it [2]. While automation is important, it is equally important to be able to do quick ad-hoc analyses that can help your team do root-cause analysis. With a centralized place to store your test data and predefined routines that can be run on test data as it comes in, you can save a lot of time in this phase. If you also standardize reporting and autogenerate reports you can easily share with relevant stakeholders, you can get the right information to the right teams faster, which means iterating faster on your product design and getting to market sooner.
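A predefined routine of the kind mentioned above could be as small as the following sketch, which summarizes incoming results per measurement so that every product gets the same baseline statistics. The record layout (`measurement`, `value`, `passed`) and the `summarize` function are assumptions for this example; a real data store would define its own schema.

```python
from collections import defaultdict
from statistics import mean


def summarize(records):
    """Group result records by measurement name and compute the count,
    mean, minimum, maximum, and pass rate for each group."""
    grouped = defaultdict(list)
    for record in records:
        grouped[record["measurement"]].append(record)

    summary = {}
    for name, rows in grouped.items():
        values = [row["value"] for row in rows]
        summary[name] = {
            "count": len(rows),
            "mean": mean(values),
            "min": min(values),
            "max": max(values),
            "pass_rate": sum(row["passed"] for row in rows) / len(rows),
        }
    return summary


# Illustrative records, as they might arrive from several testers.
records = [
    {"measurement": "supply_voltage_V", "value": 3.30, "passed": True},
    {"measurement": "supply_voltage_V", "value": 3.32, "passed": True},
    {"measurement": "supply_voltage_V", "value": 3.50, "passed": False},
    {"measurement": "idle_current_mA", "value": 9.0, "passed": True},
]

summary = summarize(records)
for name, stats in summary.items():
    print(name, stats)
```

Running the same routine on every data set makes results comparable across products and gives autogenerated reports a consistent starting point, while the raw records remain available for ad-hoc root-cause analysis.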

What to Do Next

Taking a step back and looking at the overall workflow can help identify which areas of your process you need to focus on. Every company is different—you may have some of the challenges discussed previously. Maybe you have all of them. With all the initiatives that span organizations today and the focus on moving faster, increasing efficiency, and creating competitive advantages, V&V teams have an opportunity to change how the organization views and values test.

Here are some ways to move forward:

- Consider how the bottlenecks you have can be solved with automation that can improve the efficiency in the overall process.

- Make changes to your teams’ processes to gain access to relevant information in real time.

- Evaluate if you have the right data foundation with software-connected systems that drive the results you’re looking for.

Process optimizations will benefit your company overall. While it is not an easy task to start, it is well worth the effort—and you don’t have to do it alone. NI has worked with multiple companies on standardization initiatives that can drive efficiency. It comes down to more than workflows and process.

Let’s talk today about what return you are looking for and explore areas where you have bottlenecks so we can discuss best practices. Learn how NI can help focus your efforts to enable you to gain the most efficiency and/or reduce the most risk.

Next Steps

- Drive Efficiency and Reduce Risk in Verification and Validation with a Solid Data Foundation

- Standardize Your Approach to Building Validation Test Systems for Increased Efficiency and Reduced Risk

- Discover Systems and Data Management Solutions

- Learn How NI Services Can Help You Determine ROI and Accelerate Your Standardization

References

[1] Laurence Goasduff, “Data Sharing Is a Key Digital Transformation Capability,” Gartner, May 20, 2021, https://www.gartner.com/smarterwithgartner/data-sharing-is-a-business-necessity-to-accelerate-digital-business.

[2] Simon Foster, “Creation of an Infrastructure for Test Data Management,” NI, accessed November 8, 2021, https://www.ni.com/en-us/innovations/case-studies/19/creation-of-an-infrastructure-for-test-data-management.html.