Drive Efficiency and Reduce Risk in Validation with a Solid Data Foundation

Overview

You’ve been generating data for decades. It’s how you gain insight into your product’s performance and quality, and whether it meets requirements, before producing at scale.

Meanwhile, the world around us continues to use “Digital Transformation” and “Digitization” as buzzwords. While digitization is the process of converting analog signals and general information into digital formats, digital transformation means different things to different people [1]. So, what does it mean for all this data you’ve been producing? It’s not enough just to digitize it. We must use the data to continuously add value and improve the overall business. To do that, you must have a data foundation in place that gives you the insights you need to act and make changes that drive efficiency and reduce risk.

Let’s discuss how using data can not only help ensure quality, control cost, and meet timelines but also provide insights to other parts of the organization, and how to get those insights in a matter of minutes rather than days or, even worse, weeks.

Contents

- Building a Foundation on Existing Data

- Data Transparency with R&D Design Teams and Manufacturing

- Where to Begin

- Why Does This All Matter?

- Using Data to Increase Efficiency in Validation

- Controlling Costs

- How NI can Help

Building a Foundation on Existing Data

Verification and validation (V&V) tests can generate a lot of data. Getting more value out of it doesn’t necessarily mean generating more data. Instead, we need to use the right information: data that describes the test system state, the assets used, and the test routines being run. To deliver additional value, we need to know not only whether the product passed or failed a test but why. Connecting the test and system data can give you insights into more than how the product performs; it can also show you whether you need to change your process.

For example, you might find that some tests fail because the instruments weren’t calibrated in time. Or the product being tested might be a newer revision of an existing product that requires an updated software version that was never deployed to the test system. If you design your validation process to account for these circumstances, you can increase efficiency, eliminate test reruns, and release the product to production sooner, getting it to market faster.
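To make this concrete, here is a minimal Python sketch of how linking a failed test to the system state at run time lets you flag process-related causes before anyone starts a design investigation. The `RunRecord` fields, the calibration rule, and the version tuples are all hypothetical; in practice this metadata would come from your own asset and test management records.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class RunRecord:
    """Hypothetical record joining a test outcome with the tester's state at run time."""
    test_name: str
    passed: bool
    run_date: date
    instrument_cal_due: date          # calibration due date of the instrument used
    installed_sw: tuple[int, ...]     # software version deployed on the tester
    required_sw: tuple[int, ...]      # minimum version this product revision needs


def process_causes(result: RunRecord) -> list[str]:
    """Return likely process (system) causes for a failure; empty means look at the design."""
    if result.passed:
        return []
    causes = []
    if result.run_date > result.instrument_cal_due:
        causes.append("instrument calibration expired before the run")
    if result.installed_sw < result.required_sw:  # tuples compare element by element
        causes.append("tester software older than the product revision requires")
    return causes


if __name__ == "__main__":
    r = RunRecord("ripple_check", False, date(2024, 3, 10), date(2024, 3, 1), (2, 1), (2, 3))
    print(process_causes(r))
    # ['instrument calibration expired before the run', 'tester software older than the product revision requires']
```

An empty result does not prove a design issue, but it tells the team that the two most common process causes have already been ruled out.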

Data Transparency with R&D Design Teams and Manufacturing

Sharing data across teams and functions is more important than ever [2]. In R&D, most industries use simulations to predict how a product will react in different use cases and when exposed to different environmental scenarios. This process generates a lot of data that can give insights into how the product might function.

As testing moves from unit to integration and finally to system test before acceptance testing, access to the data generated during simulation becomes extremely valuable. Correlating the two data sets can take time, but looking at simulation data alongside test data can reveal how a product behaves across scenarios and make problems easier to identify. We can then feed what we learn from the physical tests back to R&D, who can refine the models for higher fidelity.

Overall, these data insights can shorten the time it takes to go from design validation test (DVT) to working with manufacturing and preparing for production validation test (PVT) to building a manufacturing test plan. Some of these things, of course, require alignment and discussion across functions, but don’t overlook the fact that it can all be enabled when you build the right data foundation.

Where to Begin

To get started, let’s consider what we need to build a data foundation:

- Understand the data you’re currently collecting. Evaluate how complete it is and what insights you can gain from it.

- Determine what additional data you need.

- Create a data strategy. With the amount of data available, make sure to assess what you need today versus what you need in the future.

- Take your data needs and strategy, then coordinate with design and manufacturing engineering teams to gain efficiency across the product development cycle.
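The first step, evaluating how complete your existing data is, can start as simply as measuring how often each field you care about is actually populated. The sketch below assumes your records are already loaded as Python dictionaries; the field names are hypothetical examples.

```python
def completeness(records: list[dict], required: list[str]) -> dict[str, float]:
    """Fraction of records in which each required field is present and non-empty."""
    if not records:
        return {field: 0.0 for field in required}
    return {
        field: sum(1 for r in records if r.get(field) not in (None, "")) / len(records)
        for field in required
    }


if __name__ == "__main__":
    records = [
        {"serial": "A100", "status": "PASS", "cal_due": "2024-05-01"},
        {"serial": "A101", "status": "FAIL"},
        {"serial": "A102", "status": "PASS", "cal_due": ""},
        {"serial": "A103", "status": "PASS", "sw_version": "2.3"},
    ]
    print(completeness(records, ["serial", "status", "cal_due", "sw_version"]))
    # {'serial': 1.0, 'status': 1.0, 'cal_due': 0.25, 'sw_version': 0.25}
```

Low scores on system fields such as calibration dates or software versions point directly at the additional data you may need to start collecting.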

As you expand the scope, you might also want to provide relevant data to other stakeholders, such as managers and executives. They may be looking for high-level information that gives them insight into the entire value chain, such as when their new top-performing product will begin to ship.

Why Does This All Matter?

It can be hard to manage accelerated timelines and increased product complexity with limited resources. But if your V&V test team can provide additional business value beyond meeting deadlines while validating functionality and ensuring quality, it’s easier to demonstrate your team is worth supplemental resources. Let’s break down how we can drive efficiency while reducing risk so you have the bandwidth to go the extra mile.

We all know development schedules might slip as design engineers work through the issues they find. When the product gets to V&V, the time crunch is intensely felt because of scenarios like these:

- Manufacturing expects the product to start being produced on a specific date.

- Sales and Marketing have built a plan around when lead users can expect to get the product, but V&V finds an issue that requires another design iteration.

- V&V encounters an issue with uncalibrated test equipment and has to do a rerun.

- Analyzing the data and performing root-cause analysis takes so long that V&V has to accept risk instead of confirming quality.

Now, some of these things are out of our control. But how can the right data help?

Using Data to Increase Efficiency in Validation

Since we can’t compromise on deadlines, one way to increase efficiency is to get data that can limit the number of test reruns we have to do. To reduce the risk of having to do reruns, consider two key needs:

- The ability to see information about the overall system: this data helps us determine the state of the tester before a validation test starts. Having it available and accessible in a central location enables your V&V teams to quickly review a tester and confirm it is ready before the test begins.

- The use of data in real time: setting thresholds on both test data and system data means you catch errors earlier. This won’t eliminate a rerun of the test, but it will increase efficiency by alerting your team as soon as a problem occurs, rather than leaving the incident undiscovered until someone reviews the data after the test is complete.
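A minimal Python sketch of the real-time idea: each reading is checked against its limits as it arrives, so an alert fires mid-run instead of during post-test review. The channel names, limit values, and the `(time, channel, value)` stream format are hypothetical; a real system would read from its own acquisition or messaging layer.

```python
from collections.abc import Iterable, Iterator
from dataclasses import dataclass


@dataclass(frozen=True)
class Limit:
    """Hypothetical acceptable range for one channel; real limits come from your test spec."""
    low: float
    high: float


def watch(readings: Iterable[tuple[float, str, float]],
          limits: dict[str, Limit]) -> Iterator[str]:
    """Yield an alert as soon as a reading leaves its range, rather than after the run."""
    for t, channel, value in readings:
        limit = limits.get(channel)
        if limit is None:
            continue  # channel is not monitored
        if not limit.low <= value <= limit.high:
            yield f"t={t:.1f}s {channel}={value} outside [{limit.low}, {limit.high}]"


if __name__ == "__main__":
    limits = {
        "dut_voltage_V": Limit(4.75, 5.25),    # test data from the product
        "chamber_temp_C": Limit(20.0, 30.0),   # system data from the test stand
    }
    stream = [(0.0, "dut_voltage_V", 5.01), (0.5, "chamber_temp_C", 31.2),
              (1.0, "dut_voltage_V", 4.6)]
    for alert in watch(stream, limits):
        print(alert)
    # t=0.5s chamber_temp_C=31.2 outside [20.0, 30.0]
    # t=1.0s dut_voltage_V=4.6 outside [4.75, 5.25]
```

Monitoring a system channel (chamber temperature) alongside a product channel (DUT voltage) is what lets the team tell a stand problem from a product problem as it happens.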

Giving teams visibility into system history and performance while they review test results usually brings efficiency gains. Teams can accelerate root-cause analysis because they can quickly determine whether a failure is a system (process) error or whether the product being tested isn’t performing as expected (design issue). Having the right tools in place drives efficiency in many ways:

- Optimizing workflow by automating more parts of the process

- Eliminating bottlenecks

- Creating traceability from test orchestration to execution and through to data management

- Reducing the time it takes to give valuable insights back to design engineers

The right data foundation lets you iterate on designs faster and get to market sooner.

Controlling Costs

Cost is critically important to any organization, so it would be an oversight not to consider how data and the insights it provides can help control costs. For V&V teams to perform quality tests, they need reliable, high-caliber equipment, and that can be expensive.

You should have a good handle on what equipment you have and how it is being used. Otherwise, you may end up investing in new equipment for a test when you could have used equipment you already have that is not currently being utilized. Not only is this costly, but the procurement process can also be long—and could, in the worst case, affect your timeline.

The same is true at the system level, whether your system is pure data logging on a test rig or a large-scale hardware-in-the-loop setup. The ability to determine when equipment is not being used across all of your systems gives you the opportunity to repurpose it for other tests instead of procuring more. Conversely, it also enables you to make strategic, data-driven investments when you do need more equipment, because you can easily show that you are at capacity. All this is enabled by having the right data foundation.

How NI can Help

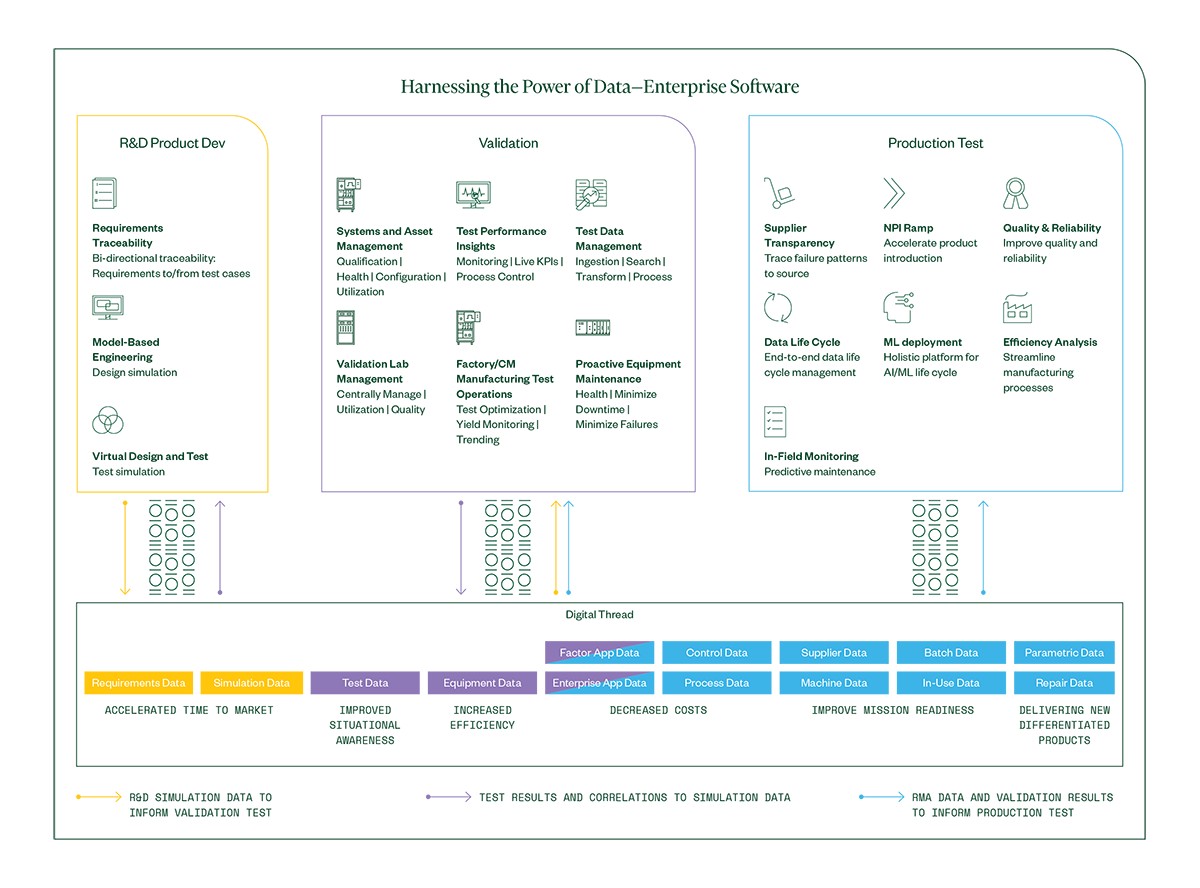

We have discussed how building a solid data foundation can drive efficiency, reduce risk, and help you control cost. You don’t have to start over, but if you can assess the data you have available today and leverage the right data to gain the insights you need for your teams, you can provide more value to the overall business.

While we have discussed what data can enable, we have not discussed how to architect and integrate this within your teams. Knowing what data you want is only half the battle. You also need a data pipeline from your systems: the data must be ingested and transformed so that it can be indexed and made searchable. Perhaps your company has been working through a digital transformation. If so, you’ll need to ensure you can connect to a larger data lake where data from many other sources, such as ERP systems, RMA tracking, and CRM systems, is also stored. Business analytics teams can then use all of this data to gain business insights that drive change across the organization.
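The ingest, transform, and index steps can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not how any particular product implements its pipeline: `FIELD_MAP`, the source names, and the field names are hypothetical, and a production system would use a real database or search engine instead of an in-memory dictionary.

```python
from collections import defaultdict

# Hypothetical mapping from each source's raw field names to one shared schema.
FIELD_MAP = {
    "rig_logger": {"SN": "serial", "Result": "status", "Station": "system"},
    "hil_host": {"serial_no": "serial", "outcome": "status", "host": "system"},
}


def transform(source: str, raw: dict) -> dict:
    """Rename source-specific fields to the shared schema and normalize the status text."""
    mapping = FIELD_MAP[source]
    record = {mapping[key]: value for key, value in raw.items() if key in mapping}
    record["status"] = str(record.get("status", "")).strip().upper()
    record["source"] = source
    return record


def build_index(records: list[dict]) -> dict[tuple[str, str], list[int]]:
    """Inverted index mapping (field, value) to the positions of matching records."""
    index: dict[tuple[str, str], list[int]] = defaultdict(list)
    for position, record in enumerate(records):
        for field, value in record.items():
            index[(field, str(value))].append(position)
    return dict(index)


if __name__ == "__main__":
    raw = [
        ("rig_logger", {"SN": "A100", "Result": "pass", "Station": "rig-3"}),
        ("hil_host", {"serial_no": "A101", "outcome": " Fail", "host": "hil-1"}),
        ("rig_logger", {"SN": "A102", "Result": "FAIL", "Station": "rig-3"}),
    ]
    records = [transform(source, fields) for source, fields in raw]
    index = build_index(records)
    failed = [records[i]["serial"] for i in index.get(("status", "FAIL"), [])]
    print(failed)  # ['A101', 'A102']
```

The transform step is what makes the index useful: without a shared schema, "pass", " Fail", and "FAIL" from different testers would never match the same query.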

You don't have to do this alone. At NI, we have worked with multiple test teams through the assessment, architecture design, and implementation as our customers have built these capabilities using SystemLink™ Software for systems and data management. Let’s have an initial discussion on where you are on your journey, and what you are trying to drive so we can rightsize and provide an overview of what you need to get started.

References

1. Liu, Shanhong. “Digital Transformation - Statistics & Facts.” Statista, August 18, 2021. https://www.statista.com/topics/6778/digital-transformation/

2. Goasduff, Laurence. “Data Sharing Is a Key Digital Transformation Capability.” Data Sharing Is a Business Necessity to Accelerate Digital Business. Gartner, May 20, 2020. https://www.gartner.com/smarterwithgartner/data-sharing-is-a-business-necessity-to-accelerate-digital-business/