Connecting Design and Test Workflows through Model-Based Engineering

Overview

The interlock between design and test teams remains a friction point and an area of inefficiency in the product development process. NI and MathWorks® recognize this and are collaborating to improve the design-test connection using model-based engineering. We aim to connect design and test teams with a digital thread to speed the development process, increase design and test iteration opportunities, and move test earlier in the development process.

Contents

- Roadblocks and Friction Points Between Design and Test Teams

- Improving Design and Test Efficiencies

- Digital Thread

- Proposed Connected Design and Test Workflow

- Next Steps

Roadblocks and Friction Points Between Design and Test Teams

An effective interlock may be obstructed by the following roadblocks:

- Adapting the algorithm for real-time execution—a model often must be compiled from the design software running on a development machine before being imported and used on a real-time controller running test software

- Determining the best way to interact with design models

- Instrumenting the code to get meaningful results

- Accessing the hardware or the lab

Consider the work of Dan, the design engineer, and Tessa, the test engineer. Dan writes algorithms for hybrid electric vehicle (HEV) system control. He spends his day in MathWorks MATLAB® and Simulink® software and doesn’t know that much about real-time implementation. He’s currently working to update controller code to incorporate a new sensor input.

Dan gives his design to Tessa without much collaboration (“throws it over the wall”). Tessa tests ECU control software and I/O using hardware-in-the-loop (HIL) testing methodology and tools. She spends her day with NI hardware and software test systems. She doesn’t know that much about control algorithm implementation. She’s currently tasked with testing the new system for HEV control that Dan’s been working on. Lacking integrated tools, Tessa can’t easily get Dan’s new update to run in the test system.

Does Dan and Tessa’s interaction sound familiar? It’s an illustration of all-too-common barriers to effective collaboration between design and test teams.

Many of our customers face similar problems arising from these friction points between design and test teams:

- Exchanges between teams without collaboration (“throwing things over the wall”)

- Version compatibility

- Poorly documented or undocumented workflows

- Platform issues between design tools and test tools (Windows/Linux, desktop/real-time, 32-bit/64-bit, compiler differences)

These challenges prevent organizations from achieving the goal of full-coverage testing with best-in-class methods.
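One way to surface the platform issues listed above before a handoff is to compare a description of the build environment with a description of the test target. The following is a minimal sketch, not part of any NI or MathWorks product: the `Platform` record, its fields, and the example values are all hypothetical and only mirror the categories named in the list (Windows/Linux, desktop/real-time, 32-bit/64-bit, compiler).

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Platform:
    """Hypothetical description of where a model was built or will run."""
    os: str         # e.g. "windows" or "linux"
    bits: int       # 32 or 64
    realtime: bool  # False for a desktop machine, True for a real-time target
    compiler: str   # illustrative compiler label, e.g. "gcc"


def platform_mismatches(built_for: Platform, target: Platform) -> List[str]:
    """Return the names of the fields that differ between build and target."""
    fields = ("os", "bits", "realtime", "compiler")
    return [f for f in fields if getattr(built_for, f) != getattr(target, f)]


# Illustrative values only: Dan's desktop build vs. Tessa's real-time system.
design_build = Platform(os="windows", bits=64, realtime=False, compiler="msvc")
test_target = Platform(os="linux", bits=64, realtime=True, compiler="gcc")

print(platform_mismatches(design_build, test_target))
```

A non-empty result tells both teams which differences to resolve before the model reaches the test system, rather than discovering them during import.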

Improving Design and Test Efficiencies

Most engineers want to test as much as possible because of the hidden costs and risks associated with less testing: the cost of rework and issues found in the field that lead to liability concerns, recalls, and damage to brand image and market share. But resources such as time (schedule), money (budget), and people (expertise) are limited, so past a certain point, throwing more resources at the problem no longer buys more testing. Instead, test coverage grows by fundamentally changing test methodologies and processes to become more efficient within existing constraints. The ability to make this shift is a significant competitive advantage because it involves doing more with less, minimizing risk, and maximizing quality and performance during a program.

Connecting design and test through model-based engineering is one fundamental way to improve design and test efficiency, and it brings second-order benefits. It moves test earlier in the development process (from the track to the lab and from the lab to the desktop), so engineers can find errors earlier, debug algorithms faster, and iterate through the design/test cycle more quickly.

Digital Thread

Establishing this digital thread—this common language teams use to communicate—starts with making toolchains interoperable.

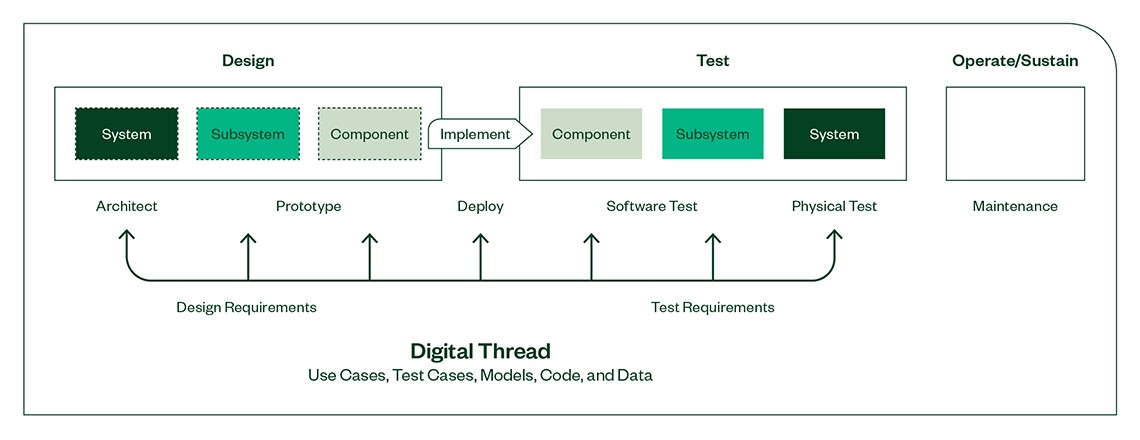

Figure 1: Design and test connected through a digital thread of data, code, and software components.

Teams need a translation layer to enable bidirectional communication of information, which reduces rework, troubleshooting, and reimplementation. MathWorks and NI are working on this because we recognize that models are a primary method of communication between the design and test worlds.

Models are rich in information. They describe the behavior of the system and provide the basis for building test cases and quantifying test requirements. Integrating the same models used for design into test allows for a common platform to evaluate performance and simulate/emulate the world around the devices and components under test. This frees test teams from tool-imposed requirements and enables them to speak the same language as the designers.

Establishing a digital thread between teams using models as a primary means of communication improves development efficiency. It connects design and test workflows with interoperable tools developed to work together. This solution helps Dan and Tessa work together more closely, test more often, and give their organization a competitive advantage.

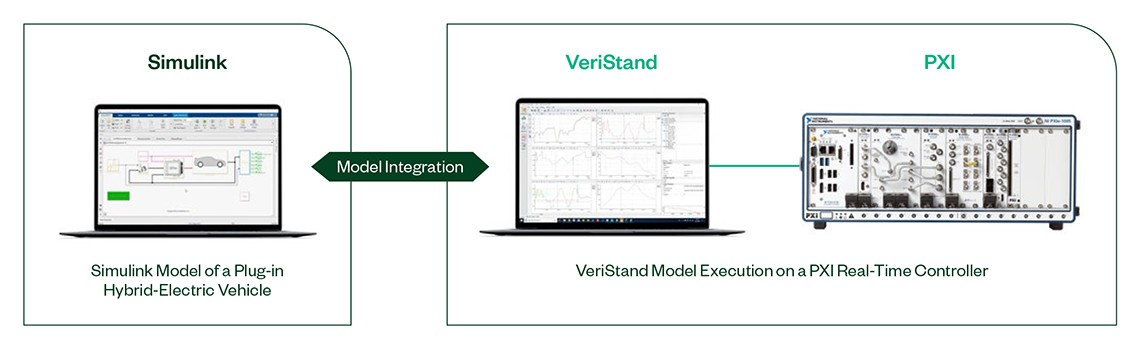

MathWorks and NI have improved compatibility between Simulink and VeriStand. We now have lock-step releases (for example, MATLAB R2020a release compatibility with VeriStand R2020). And we are collaborating on further improvements like automating more of the joint workflow that is manual today and providing deeper access to signals and parameters in the model hierarchy.

Figure 2: Mathworks Simulink model integration into real time test software.
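The lock-step release pairing mentioned in this section (MATLAB R2020a with VeriStand R2020) can be checked automatically before a model is handed over. The sketch below assumes, purely for illustration, that two releases pair up when their four-digit years match. That rule is inferred from the single example in the text and is not a published compatibility policy, and the function names are hypothetical; the vendors' compatibility tables remain the authority.

```python
import re


def release_year(release: str) -> int:
    """Extract the four-digit year from a label such as 'R2020a' or 'R2020'."""
    match = re.fullmatch(r"R(\d{4})[ab]?", release.strip())
    if match is None:
        raise ValueError(f"unrecognized release label: {release!r}")
    return int(match.group(1))


def is_lock_step(matlab_release: str, veristand_release: str) -> bool:
    """Assumed rule: two releases pair up when their years match."""
    return release_year(matlab_release) == release_year(veristand_release)


print(is_lock_step("R2020a", "R2020"))  # the pairing named in the text
print(is_lock_step("R2019b", "R2020"))  # a mismatched pair under the assumed rule
```

Running a check like this at handoff time addresses the version compatibility friction point without requiring either engineer to remember which releases belong together.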

Proposed Connected Design and Test Workflow

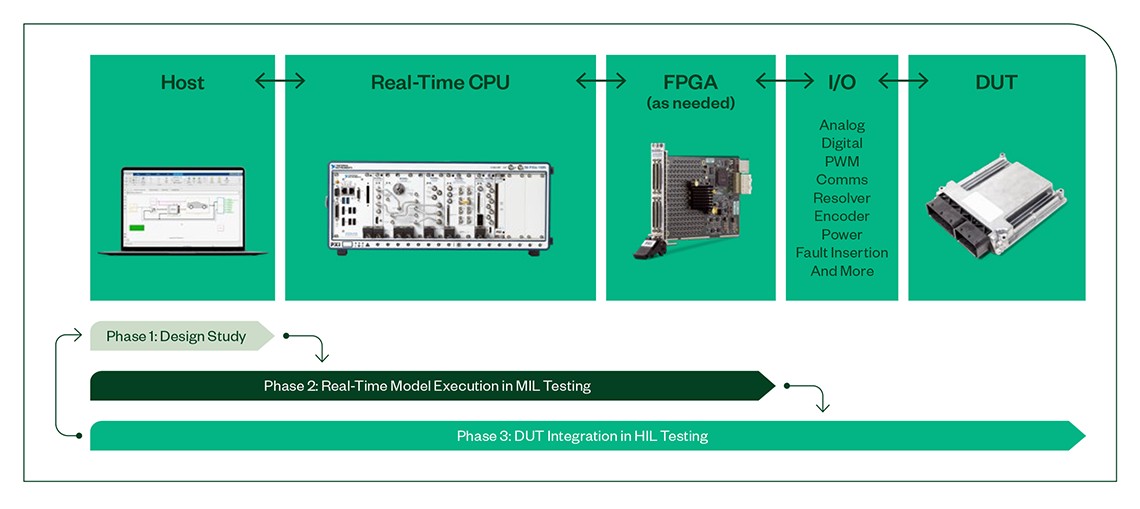

Figure 3: Integration of software and hardware tools from design to validation test for model-in-the-loop and hardware-in-the-loop testing.

Next Steps

- NI and MathWorks Product Interfaces

- Learn how to use models from Simulink software in VeriStand

- Learn how to create real-time test applications with VeriStand

- Learn more about how to optimize V&V test workflows

Some content is by Paul Barnard of MathWorks.

MATLAB® AND SIMULINK® ARE REGISTERED TRADEMARKS OF THE MATHWORKS, INC. LINUX® REGISTERED TRADEMARK IS USED UNDER A SUBLICENSE FROM LMI. LMI IS THE EXCLUSIVE LICENSEE OF LINUS TORVALDS, THE WORLDWIDE OWNER OF THE BRAND.