Avoid Test System Downtime With CableSense™ Technology

Overview

When intermittent failures start to increase, or test data suddenly becomes noisier than expected or offset by an odd amount, the main question on your mind is typically the same: What changed? Is your code, instrument, device under test (DUT), or physical setup to blame? Too often, the issue comes down to one faulty electrical connection. The key to mitigating this risk is to verify your system’s physical setup at the start of a test so that you detect common automated test equipment (ATE) connection issues early while remaining minimally disruptive to the test itself. To help you do this, NI introduced patent-pending CableSense technology to some of its PXI Oscilloscope models. Because the technology applies principles similar to a traditional time-domain reflectometer (TDR) on a real-time oscilloscope already within your test system, you can detect changes from a known, golden setup without having to alter the connections themselves.

Detecting Changes in Your Setup

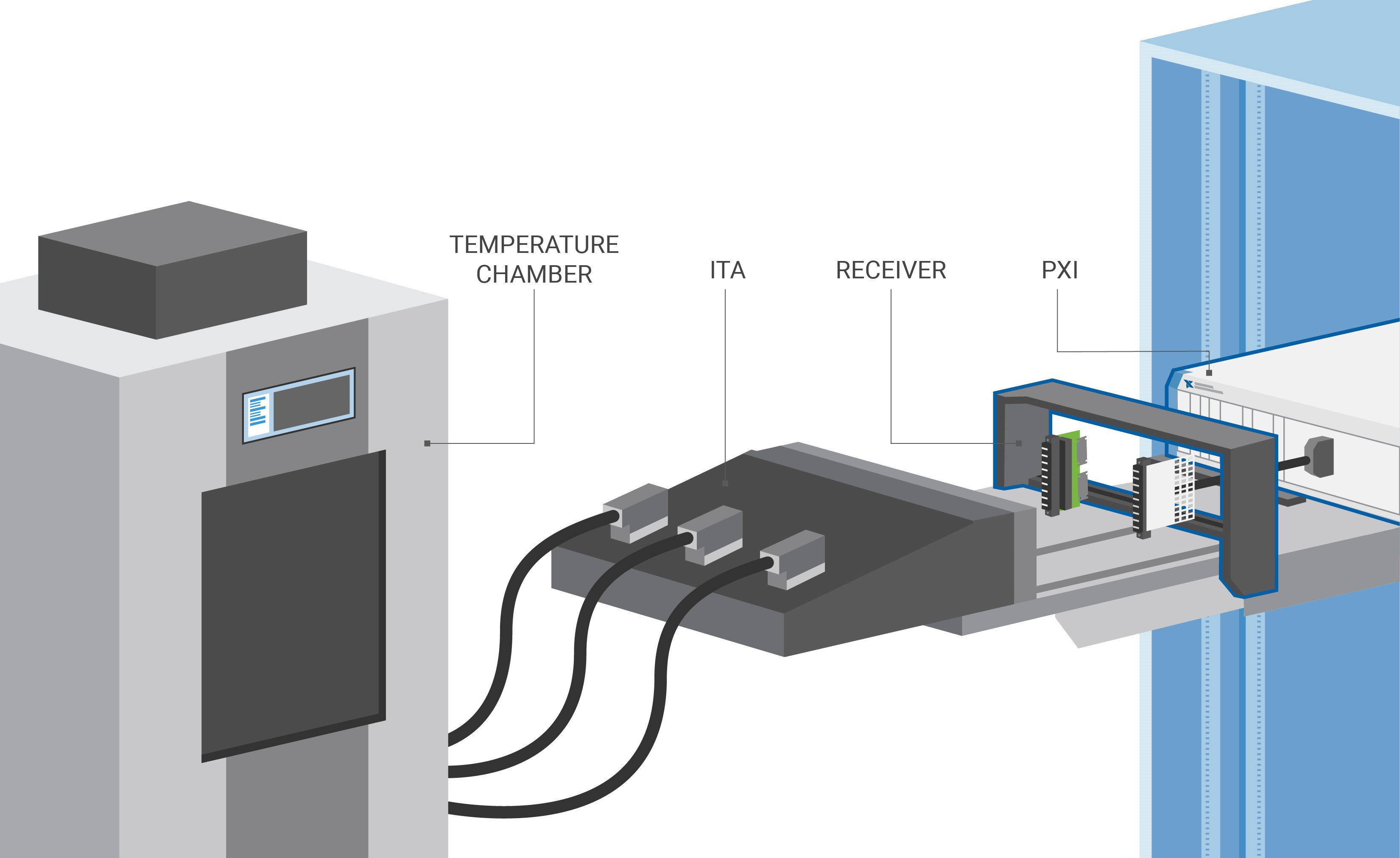

Automated test setups can range from simple, single coaxial cable connections to vastly complex mass interconnect systems involving adapters, switches, receivers, interface test adapters (ITAs), and/or temperature and vibration chambers. To ensure the quality of a test, you need to maintain secure connections between the instrumentation and the DUT. However, as the size and complexity of a mass interconnect system grows, the number of potential failure points grows as well.

Figure 1. Mass Interconnect Setup

Faults are increasingly likely to occur with connections closer to the DUT since those locations are the most exposed to insertions, changes, and potential human error. For instance, connections in a temperature or vibration chamber are swapped between every DUT, but the cables between a PXI chassis and receiver are unlikely to be touched frequently once they are situated.

Types of Connection Failures

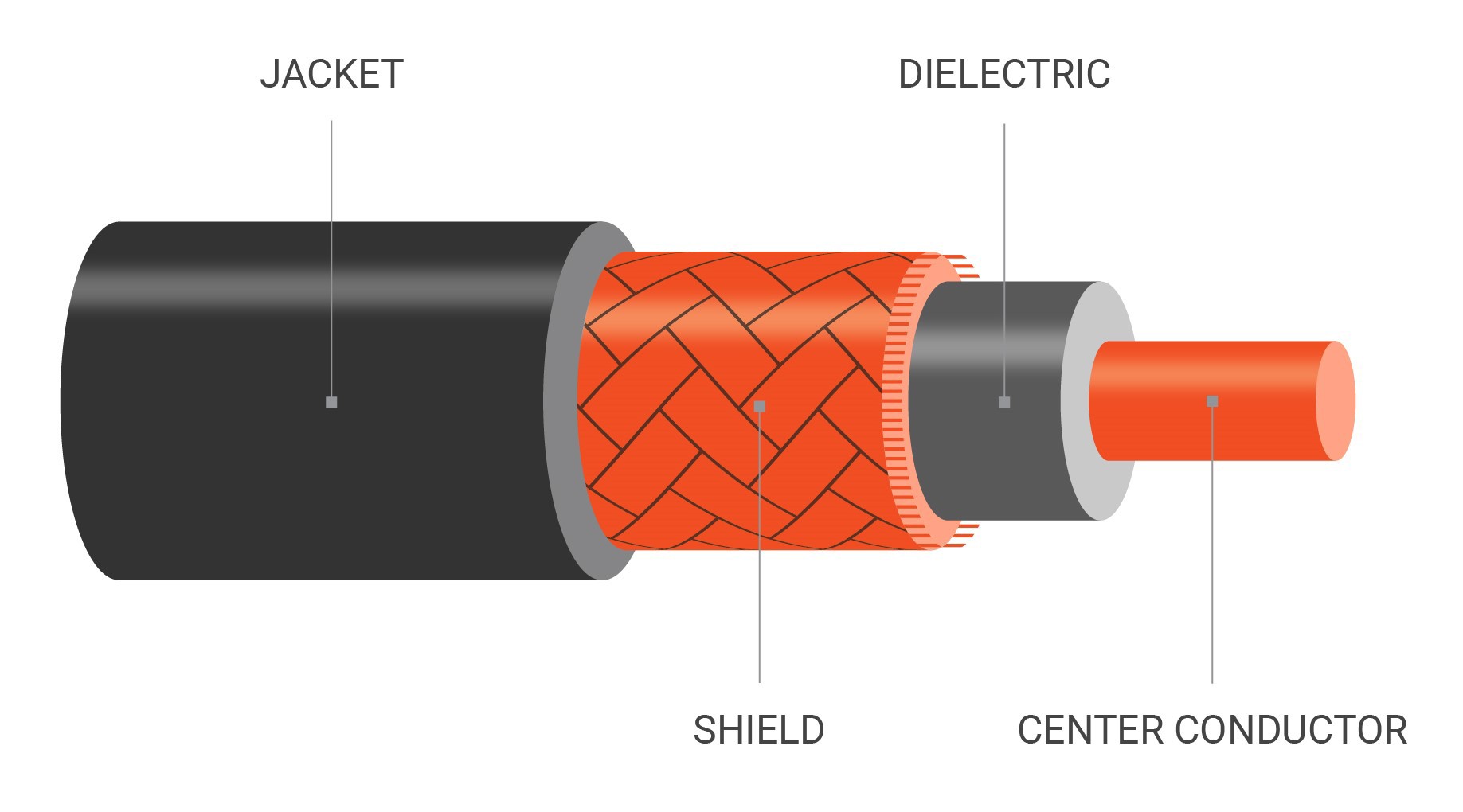

Cables, connectors, and relays unfortunately wear over time and are susceptible to operational misuse. Cables can become loose or frayed, pins can get bent from misguided insertions, and relays may fail earlier than expected. During tester replication, an incorrect type or length of cable may also be used by mistake, resulting in unnecessary and avoidable measurement variation.

Figure 2. On the left, a cable’s metallic shield starts to fray, which can be caused by mechanical stress. On the right, a high-quality cable’s center pin is missing, which is easy to overlook especially when the cable still mates.

Characterizing Your Setup Using CableSense Technology

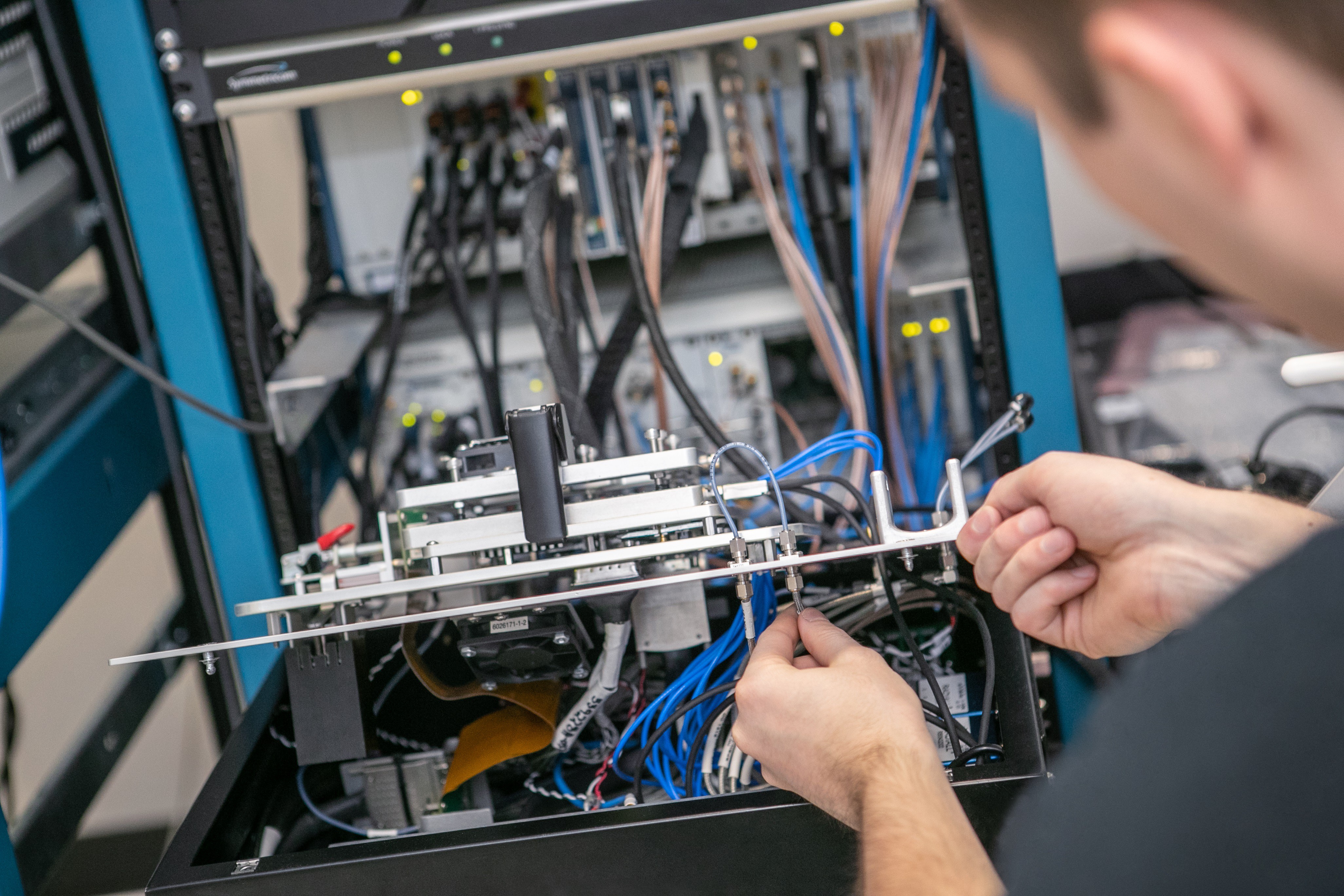

Lab experts may be able to visually inspect systems in a high-level attempt to detect changes, but bent pins or internal cable failures are still too difficult to find this way. And tampering with a test station that should already be working fine is nerve-racking because noble intentions could cause new problems, especially when insertion counts are limited.

Figure 3. “Best guess” troubleshooting not only wastes time but could inadvertently cause new issues in your setup.

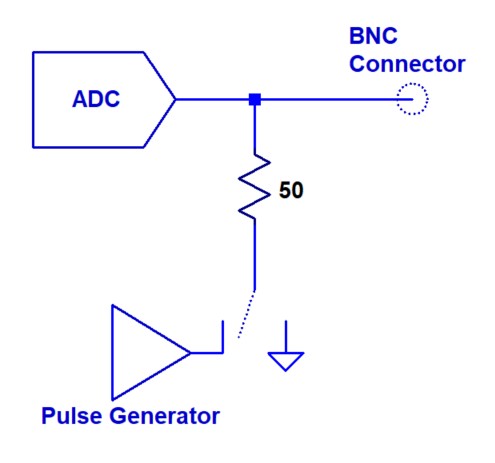

The key to mitigating risk here is to verify your system’s physical setup at the start of a test to detect common ATE connection issues early while remaining minimally disruptive to the test itself. To help you do this, NI introduced CableSense technology to some of its PXI Oscilloscope models by incorporating a pulse generator behind each oscilloscope channel’s 50 Ω path.

Figure 4. Block Diagram of CableSense Pulse Generator in a PXI Oscilloscope Front End

Similar to a traditional TDR, the NI PXI Oscilloscope sends a pulse along the entire passive electrical path from the oscilloscope channel all the way to the DUT. When the pulse reflects back toward the instrument, you can characterize impedance or reflection coefficient over time, correlate it with distance, and account for adapters, switches, receivers, or ITA connections in the signal path. Critically, since the real-time PXI Oscilloscope is already incorporated into the automated test system itself, you don’t need to alter any connections to perform this characterization. External TDRs or wire testers require a combination of disconnections, cable swaps, or equipment swaps for reactive debugging, which prevents them from being effective preventive solutions.
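The two conversions mentioned above, reflection coefficient to impedance and reflection delay to distance, follow standard transmission-line relations. The sketch below is illustrative only: the function names are hypothetical, it does not call the NI-SCOPE API, and it assumes a 50 Ω reference impedance and a known cable dielectric constant.

```python
import math

C = 299_792_458.0  # speed of light in vacuum, m/s


def rho_to_impedance(rho, z0=50.0):
    """Convert a reflection coefficient to impedance, relative to z0."""
    if rho >= 1.0:
        return math.inf  # ideal open circuit
    return z0 * (1.0 + rho) / (1.0 - rho)


def round_trip_time_to_distance(t_seconds, dielectric_constant=2.25):
    """Map a reflection's round-trip delay to one-way distance in meters."""
    vp = C / math.sqrt(dielectric_constant)  # velocity of propagation
    return vp * t_seconds / 2.0  # halve it: the pulse travels out and back


# A 50 ohm path joined to a 75 ohm cable reflects (75 - 50) / (75 + 50) = 0.2.
print(rho_to_impedance(0.2))
# A reflection arriving 10 ns after the pulse lies roughly 1 m down the cable.
print(round_trip_time_to_distance(10e-9))
```

The division by two in the distance conversion is the step most easily overlooked, since the oscilloscope observes the round-trip delay rather than the one-way delay.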

To apply this technology, you can create a limit mask from a known, golden setup to serve as the basis for future comparisons. The CableSense pulse is accessible through the NI-SCOPE API, so you can programmatically create these masks and then verify against them later in an automated fashion, perhaps right at the start of your test sequence. Comparison logic detects both major failures like loose cables or bad relays and minor failures like incorrect cable types or lengths. You can automate this check at your preferred frequency, for example, once every morning, or, for critical, longer-running tests, once per DUT. By tailoring the masks to your own tolerance for change, you ensure the repeatability you need in your specific application to prevent false failures.

CableSense Software

Unlike a traditional TDR, CableSense technology offers the added benefit of comparison logic. Automating the CableSense check against a mask lowers the barrier to entry for debugging with TDR principles, which can otherwise require advanced knowledge and experience to apply efficiently.

Mask Generation

Validating connectivity by monitoring cable impedances and length over time is a key application of CableSense technology. Whether the connection faults are due to mishandling, high insertion count, failing relays, or bad or incorrectly replaced cables, you can identify them by comparing the system’s response to that of a known good setup using a predefined mask. If at any point the response waveform meets or exceeds the limits set by the mask, an error is generated to alert the operator of a system connectivity problem and its location within the system’s electrical path.

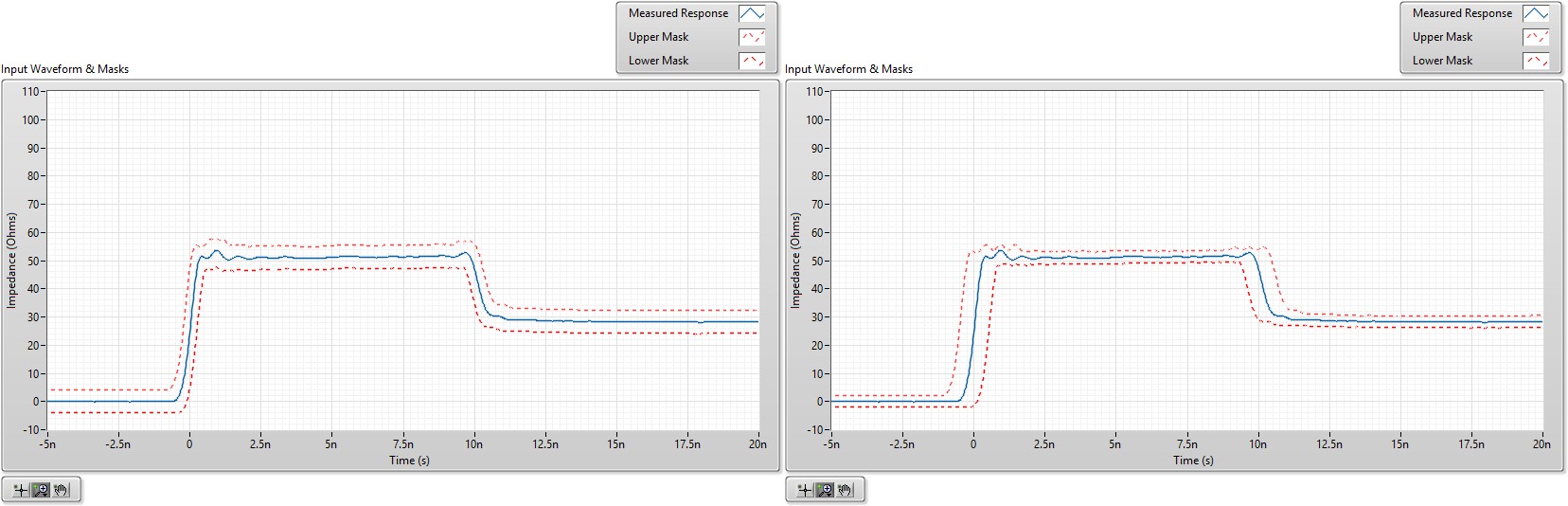

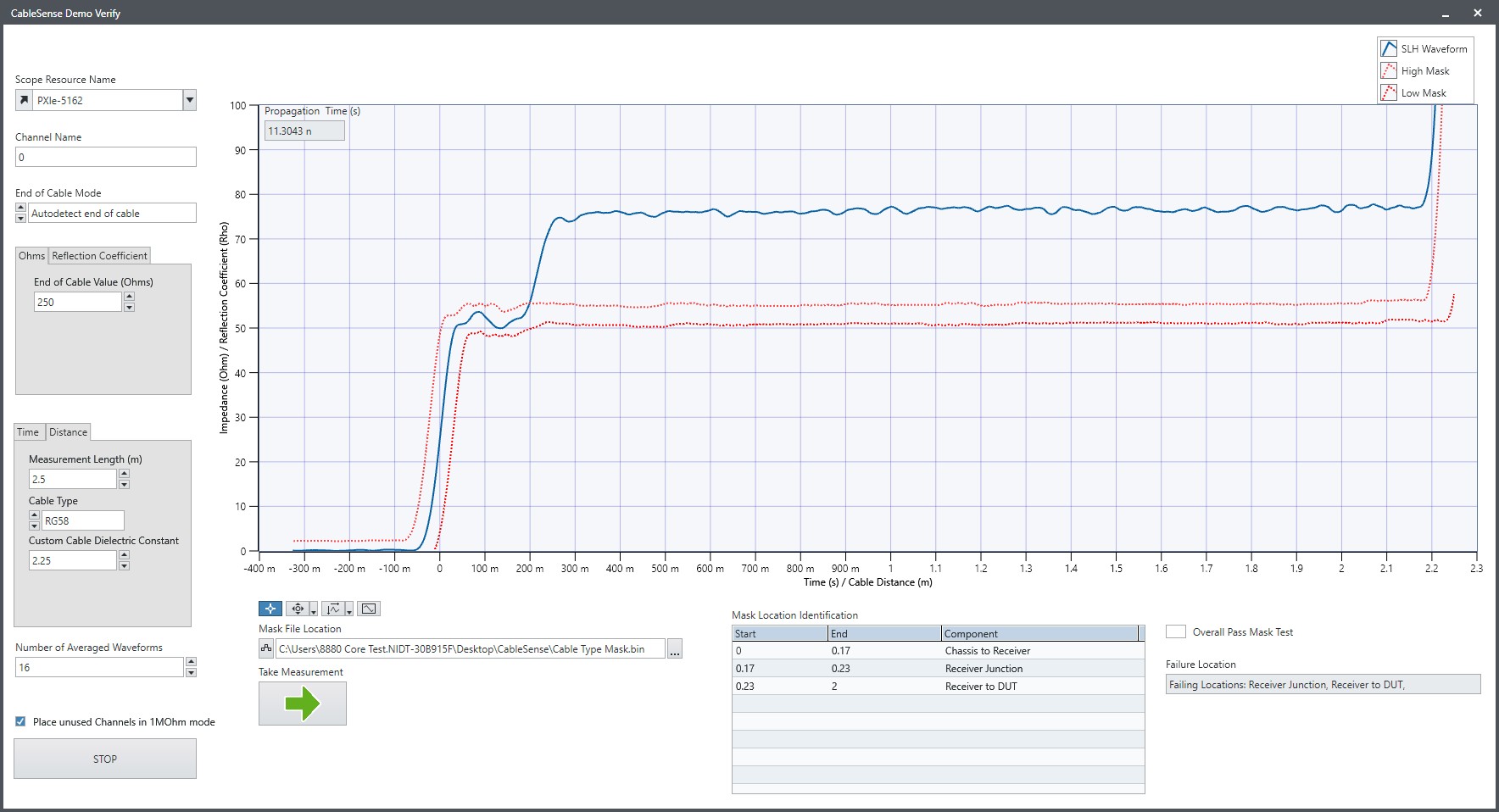

Figure 5 shows a system’s measured response along with its upper and lower limits. You can adjust the mask in both the time (x-axis) direction and the impedance or reflection coefficient (y-axis) direction. The plot on the left shows a ±4 Ω impedance offset and ±100 ps time offset. The plot on the right shows a ±2 Ω impedance offset and ±500 ps time offset.

Figure 5. A Measured CableSense Pulse (Blue) With Varying Levels of Mask Tolerance (Red)

In general, you should set these tolerance limits wide enough to allow for normal variation in your application but tight enough to detect undesired change. NI recommends characterizing your setup’s typical variation by first testing several candidate cables you would typically use in the application. This insight should help you determine the appropriate time and impedance margins to use.
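As a rough illustration of how such a mask could be built and checked, the sketch below widens a golden impedance trace by an impedance margin and a time margin expressed in samples. It assumes the traces have already been acquired and converted to plain lists of ohm values; `build_mask` and `failing_indices` are hypothetical names, not part of NI-SCOPE or the NI example code.

```python
def build_mask(golden, z_tol, t_tol_samples):
    """Return (lower, upper) limit lists around a golden impedance trace.

    z_tol widens the mask vertically (ohms). t_tol_samples widens it
    horizontally by taking the min/max of the golden trace over a
    sliding window of +/- t_tol_samples around each point.
    """
    n = len(golden)
    lower, upper = [], []
    for i in range(n):
        start = max(0, i - t_tol_samples)
        stop = min(n, i + t_tol_samples + 1)
        window = golden[start:stop]
        lower.append(min(window) - z_tol)
        upper.append(max(window) + z_tol)
    return lower, upper


def failing_indices(trace, lower, upper):
    """Indices where the trace meets or exceeds either mask limit."""
    return [
        i
        for i, (z, lo, hi) in enumerate(zip(trace, lower, upper))
        if z <= lo or z >= hi
    ]


# Golden setup: 50 ohm path stepping to 55 ohm halfway along the trace.
golden = [50.0] * 5 + [55.0] * 5
lower, upper = build_mask(golden, z_tol=2.0, t_tol_samples=1)

# Same path with an open circuit near the end of the trace.
open_trace = golden[:7] + [500.0] * 3
print(failing_indices(open_trace, lower, upper))
```

With a time margin of one sample, a step that arrives one sample late still passes, while one that arrives two samples late does not. That is the trade-off between tolerance and sensitivity described above.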

Major Failure Software Example: Open Circuit (Loose Cable, Bad Relay)

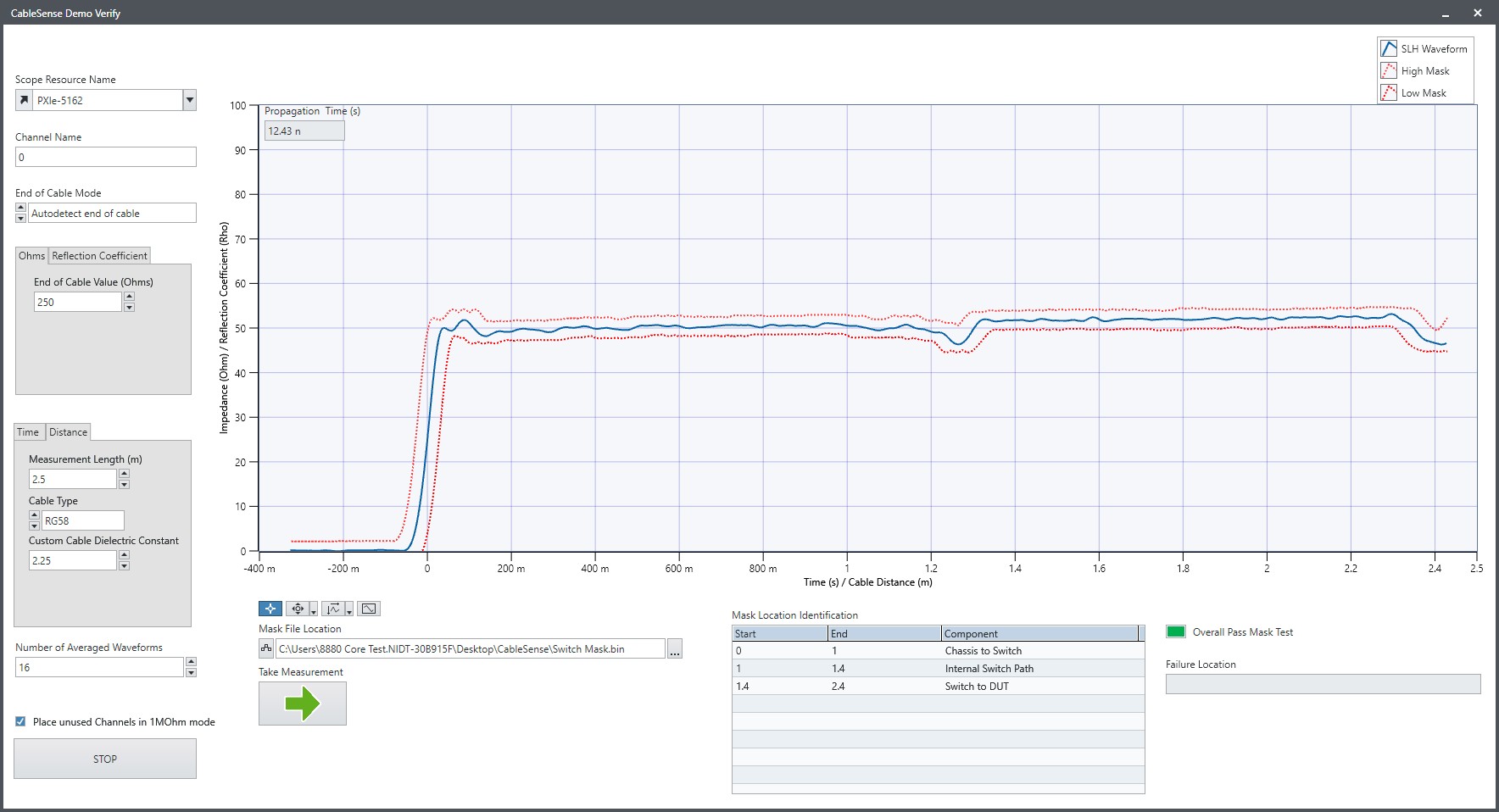

In the following setup, a PXI Oscilloscope is connected to a PXI switch through a 1 m cable, and the PXI switch is connected to a DUT through a second 1 m cable. The two cables are connected through the switch, whose internal path is about 40 cm in this case.

When initially creating a mask, you can increase its future relevance by segmenting the full electrical path into subregions based on your knowledge of your setup. To aid this functionality, you can use the x-axis to represent distance rather than time and correlate it based on the known dielectric of the cables you’re using. In this example:

- Distance 0 m–1 m = Chassis to Switch

- Distance 1 m–1.4 m = Internal Switch Path

- Distance 1.4 m–2.4 m = Switch to DUT

Then, if a failure is detected, your program can report the subregion(s) failing the mask limits to narrow the scope of your troubleshooting. In the first test below, nothing in the setup has been altered, so the mask verification passes.
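One way to report failing subregions is to tag each sample with its distance and test it against named distance ranges. The sketch below uses the three regions from this example; the function name, the data layout, and the flat ±4 Ω mask are illustrative assumptions rather than NI's implementation.

```python
REGIONS = [
    ("Chassis to Switch", 0.0, 1.0),
    ("Internal Switch Path", 1.0, 1.4),
    ("Switch to DUT", 1.4, 2.4),
]


def failing_regions(distances_m, trace, lower, upper, regions=REGIONS):
    """Return names of regions with any sample at or beyond a mask limit.

    Regions are reported in order of distance from the oscilloscope.
    """
    failed = []
    for name, start, stop in regions:
        for d, z, lo, hi in zip(distances_m, trace, lower, upper):
            if start <= d < stop and (z <= lo or z >= hi):
                failed.append(name)
                break  # one failing sample is enough to flag the region
    return failed


# One sample every 10 cm along the 2.4 m path, with a flat +/-4 ohm mask.
distances = [i / 10 for i in range(24)]
lower = [46.0] * 24
upper = [54.0] * 24

# Open circuit starting 1.1 m into the path (inside the switch).
trace = [50.0] * 11 + [1000.0] * 13
print(failing_regions(distances, trace, lower, upper))
```

After an open circuit, every region farther from the oscilloscope also falls outside the mask, so in this sketch the first reported region is the likely fault location.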

Figure 6. The CableSense pulse passes the mask check, meaning the setup has not varied from its known, good state.

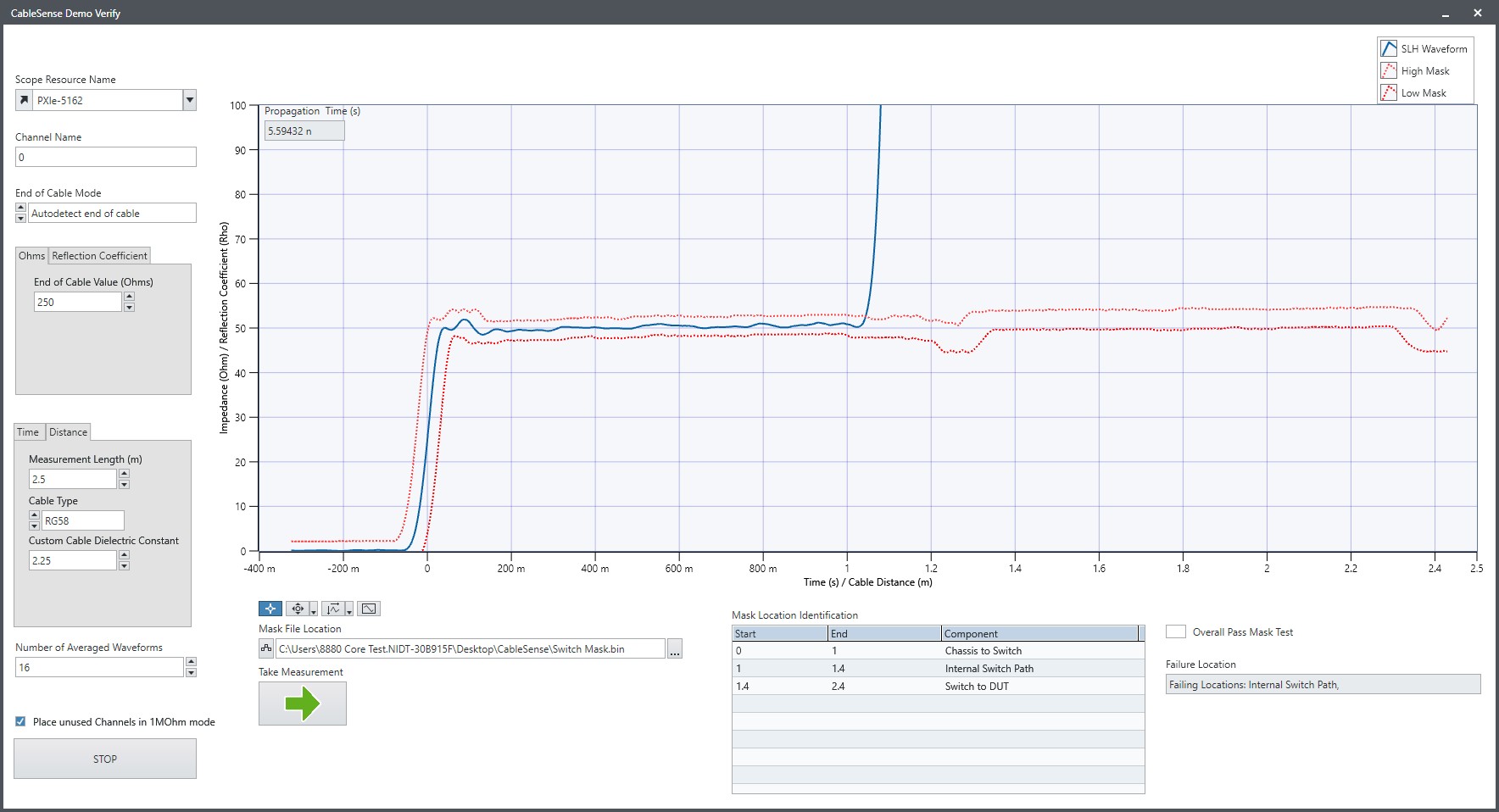

The same setup is used for Figure 7 except the internal switch path has intentionally been broken to represent a faulty relay or otherwise bad switch path. Slightly further than 1 m into the electrical path, the impedance waveform sharply rises, representing an open circuit at the problem site. The mask check fails, and Internal Switch Path is correctly reported as the failure location.

Figure 7. An open circuit is detected, and Internal Switch Path is reported as the failure location.

Minor Failure Software Example: Wrong Cable Impedance or Length

Beyond open or short circuits, you can use the CableSense pulse to identify more minor issues, like incorrect cable type or length. In the Figure 8 setup, a mask was created based on a short, approximately 20 cm BNC cable joining with a 2 m BNC cable, both of which are supposed to be 50 Ω.

During the test, a 75 Ω, 2 m cable was used instead, resulting in a mask failure. The plot clearly shows that the electrical path was within limits for the first 20 cm but then rose outside the limits of the mask to 75 Ω and stayed there for two additional meters. Cable misuse can be difficult to detect visually in a replicated test setup, so an electrical verification is more efficient.

Figure 8. An incorrect 75 Ω cable is easily detected against an expected 50 Ω cable mask.

In practice, if your test sequence reports a CableSense failure in its initialization section, you can use a UI like the one in Figure 8 to visually represent the electrical failure and narrow the scope of troubleshooting. For an example implementation of CableSense technology, see the demo LabVIEW code in the Related Links section.

What Can CableSense Technology Measure?

CableSense technology on real-time oscilloscopes is capable of measuring cable impedance and cable length, similar to a traditional TDR; however, there are some important differences. The CableSense feature is an add-on option for in situ measurement verification using a real-time oscilloscope within your test system, while a traditional TDR is an external, high-performance instrument with no built-in comparison logic.

Traditional TDRs have fast-edge pulse generators with rise times in the tens of picoseconds and sampling bandwidths in the tens of gigahertz. In contrast, the CableSense pulse rise time ranges from hundreds of picoseconds to several nanoseconds, and the sampling bandwidths range from 100 MHz to 1.5 GHz. CableSense sampling is conducted by the oscilloscope channel’s analog-to-digital converter (ADC) and input stage; thus, if a 100 MHz oscilloscope is used, the pulse is sampled at 100 MHz bandwidth. Conversely, if a 1.5 GHz oscilloscope is used, the CableSense pulse is sampled at 1.5 GHz bandwidth.

Supported Hardware and CableSense Specifications

Each oscilloscope model that supports CableSense technology is available with or without the technology enabled through separate part numbers. The voltage generated by the CableSense pulse generator is typically between 0.4 V and 0.5 V, depending on the oscilloscope model. For example, the PXIe-5162 pulse is nominally 0.5 V, and the PXIe-5113 is nominally 0.4 V. These voltages were measured at the BNC connector into a high-impedance load such as a digital multimeter.

Table 1. Hardware Models That Support CableSense Technology With Their Nominal Specifications

| Oscilloscope Model | Oscilloscope 50 Ω Path Bandwidth | CableSense Pulse Voltage | CableSense Pulse Rise Time |
| --- | --- | --- | --- |
| PXIe-5162 | 1.5 GHz | 0.5 V | 650 ps |
| PXIe-5160 | 500 MHz | 0.5 V | 950 ps |
| PXIe-5113 | 500 MHz | 0.4 V | 1.3 ns |
| PXIe-5111 | 350 MHz | 0.4 V | 1.6 ns |
| PXIe-5110 | 100 MHz | 0.4 V | 4 ns |

Spatial Resolution

The pulse generator and the acquisition engine together determine the fastest rising edge that can be sampled, and this rising edge determines the spatial resolution of the system. Higher bandwidth oscilloscope models enable shorter rise times and ultimately finer spatial resolution. Spatial resolution for a traditional TDR is specified as the minimum distance between impedance features that can be uniquely identified; therefore, a smaller spatial resolution value equates with more sensitivity to changes in a physical test setup. It is defined as:

$$l = \frac{T_r \, c}{2\sqrt{\varepsilon_r}}$$

where

Tr = rise time

l = spatial resolution

c = speed of light

εr = dielectric constant

Or, to simplify further, it is half the rise time (Tr) multiplied by the velocity of propagation:

$$l = \frac{T_r \, V_p}{2}$$

where Vp is the velocity of propagation:

$$V_p = \frac{c}{\sqrt{\varepsilon_r}}$$

Figure 9 shows an example where the spatial resolution could be important when different impedances are short and adjacent.

Figure 9. Transmission Line With Two Short Impedance Changes

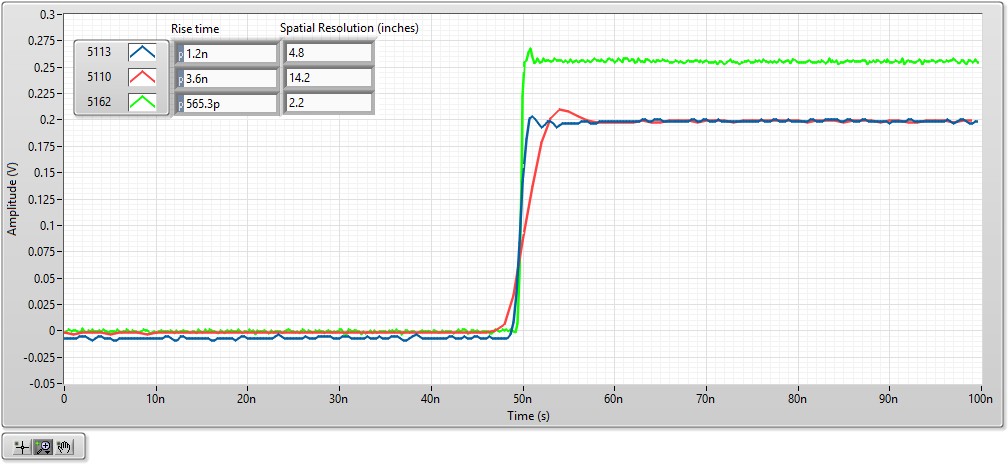

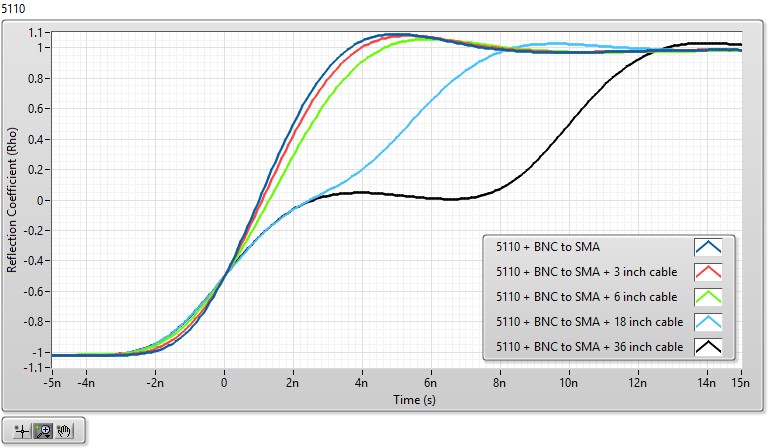

The plots in Figure 10 show the varying measured rise times for a PXIe-5162, PXIe-5113, and PXIe-5110; in this case, the measurements were slightly better than their respective nominal specifications. Based on this rise time, the spatial resolution ranges from 2.2 in. to 14.2 in., depending on the model’s bandwidth, for a standard RG223 cable with a relative dielectric constant of 2.25.

Figure 10. Rise Time and Spatial Resolution Estimates for the PXIe-5162, PXIe-5113, and PXIe-5110
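To see how rise time translates into distance, the sketch below takes spatial resolution as half the rise time multiplied by the velocity of propagation and evaluates it for the nominal rise times in Table 1 with a relative dielectric constant of 2.25. The function name is hypothetical. Because it uses nominal rather than measured rise times, the results come out slightly larger than the 2.2 in. to 14.2 in. range quoted above.

```python
import math

C = 299_792_458.0  # speed of light in vacuum, m/s
INCHES_PER_METER = 1.0 / 0.0254


def spatial_resolution_m(rise_time_s, dielectric_constant):
    """Half the rise time multiplied by the velocity of propagation."""
    vp = C / math.sqrt(dielectric_constant)
    return 0.5 * rise_time_s * vp


# Nominal CableSense pulse rise times from Table 1.
NOMINAL_RISE_TIMES_S = {
    "PXIe-5162": 650e-12,
    "PXIe-5160": 950e-12,
    "PXIe-5113": 1.3e-9,
    "PXIe-5111": 1.6e-9,
    "PXIe-5110": 4e-9,
}

for model, tr in NOMINAL_RISE_TIMES_S.items():
    res_in = spatial_resolution_m(tr, 2.25) * INCHES_PER_METER
    print(f"{model}: {res_in:.1f} in.")
```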

Spatial Resolution Example 1: Cable Length Verification

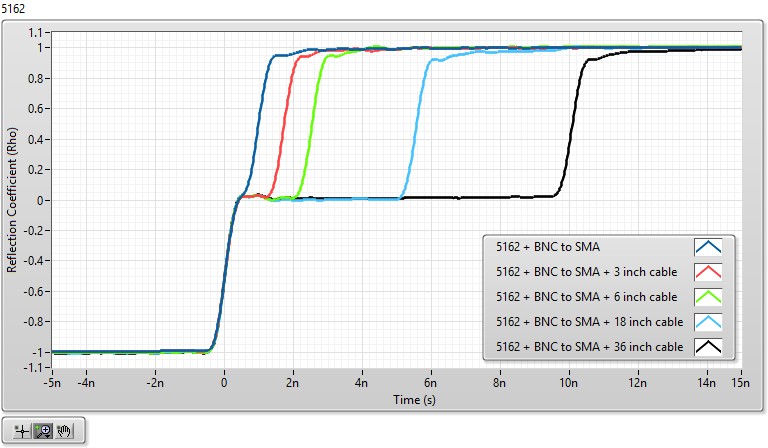

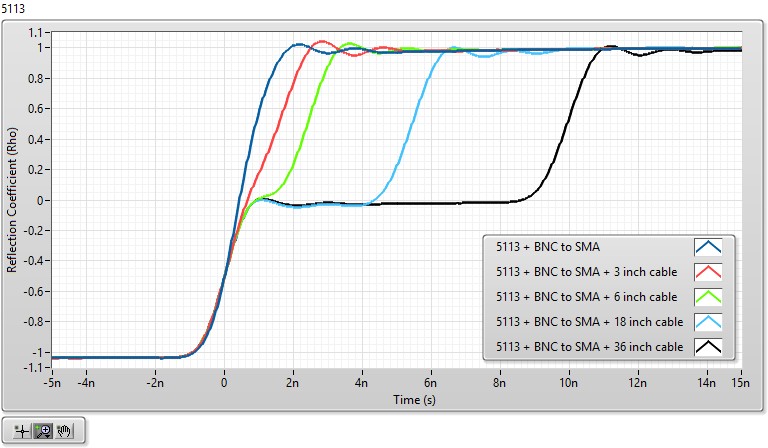

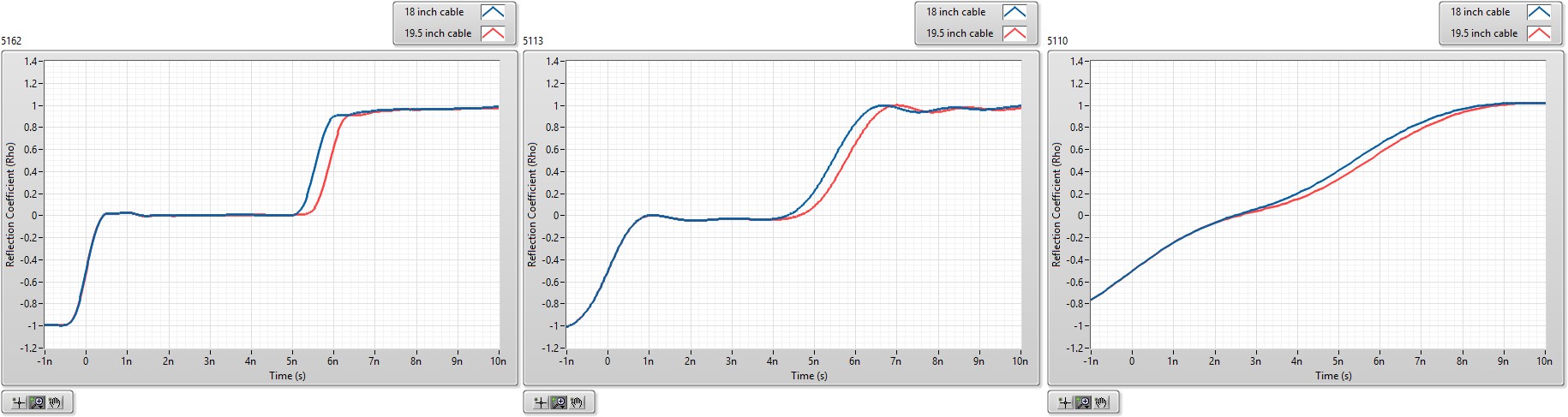

Using the same three oscilloscope models, a series of cable lengths was measured to demonstrate the different spatial resolution of the various models. The plots in Figure 11 reinforce the expectation that faster sample rates and bandwidths provide better spatial resolution. The PXIe-5162 has the fastest rise time of the three, which results in the best waveforms for measuring small cable lengths. This can be seen in Table 2 by the measured cable lengths’ proximity to actual values.

Table 2. Relative Effect of Spatial Resolution on Cable Length Measurement Accuracy

| Oscilloscope Model | BNC to SMA + 3.12 in. Cable: Measured (in.) | BNC to SMA + 3.12 in. Cable: % Error | BNC to SMA + 6.56 in. Cable: Measured (in.) | BNC to SMA + 6.56 in. Cable: % Error | BNC to SMA + 18 in. Cable: Measured (in.) | BNC to SMA + 18 in. Cable: % Error | BNC to SMA + 36 in. Cable: Measured (in.) | BNC to SMA + 36 in. Cable: % Error |
| --- | --- | --- | --- | --- | --- | --- | --- | --- |
| PXIe-5162 | 3.09 | 1% | 6.47 | 1.4% | 17.9 | 0.5% | 35.9 | 0.3% |
| PXIe-5113 | 2.77 | 11% | 6.23 | 5% | 17.6 | 2% | 35.2 | 2% |
| PXIe-5110 | 0.73 | 77% | 2.22 | 66% | 13.1 | 27% | 31.1 | 14% |

Figure 11. Varying spatial resolution affects each oscilloscope model’s ability to detect smaller cable length changes. The PXIe-5162 (first plot) has the highest bandwidth and lowest spatial resolution of the three models, so it distinguishes the short cable length differences the most clearly.

When monitoring a station’s connectivity, you should check for cable differences, which can be detected at distances smaller than the spatial resolution might suggest. In Figure 12, each plot shows an 18 in. cable length measurement contrasted with a 19.5 in. cable measurement for the PXIe-5162, PXIe-5113, and PXIe-5110 oscilloscope models. All three models can detect the change in the cable length; however, as the plot shows, the PXIe-5110 oscilloscope’s slower rise time could lead to errors in reliably detecting a 1.5 in. change in length.

Figure 12. Relative Effect of Spatial Resolution on a 1.5 in. Cable Length Change Verification for 1.5 GHz (Left), 500 MHz (Middle), and 100 MHz (Right) Oscilloscope Models

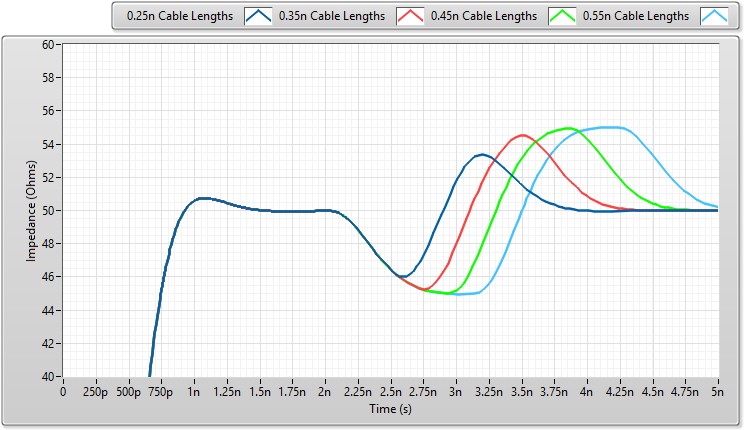

Spatial Resolution Example 2: Cable Impedance Verification

Impedance verification also relies on cable length, and spatial resolution still applies. To measure the impedances of two short cable segments, the cable segment lengths should be at least as large as the lengths defined by the spatial resolution equation. This can be seen in Figure 13, where two discontinuities are placed close together. When the discontinuities are roughly the same length as that defined by the spatial resolution equation, you can determine that two different discontinuities are present even though the measurement has not settled. The measurement doesn’t settle at 45 Ω and 55 Ω until the cable segment lengths approximately equal the rise-time length.

Figure 13. Measuring Impedance Discontinuities of Various Lengths With a PXIe-5162 (650 ps Rise Time)

Rise Time Versus Cable Length

The dependence on rise time for cable length and impedance measurements has been demonstrated above. However, as cable lengths increase, rise times slow down. For frequencies below 1 GHz, this is primarily due to loss caused by the skin effect of the cable.

The time it takes for a step response to transition from 0 V to half of its final value, To, is defined as:

$$T_o = 4.56 \times 10^{-16} \, A^2 L^2$$

where To is in seconds, A is the loss in dB/100 ft, and L is the cable length in feet.ⁱ

For an RG58 cable, A = 17.8 dB/100 ft, and for an RG223 cable, A = 16.7 dB/100 ft. The rise time of a cable is then calculated as RT = 28.83 × To. The calculated rise times for various cable types and lengths are provided in Table 3.

Table 3. Coaxial Cable Rise Time vs. Length

| Cable Length | RG223 Rise Time | RG58 Rise Time |
| --- | --- | --- |
| 1 m | 0.04 ns | 0.04 ns |
| 5 m | 0.99 ns | 1.12 ns |
| 10 m | 3.95 ns | 4.48 ns |
| 25 m | 24.67 ns | 28.02 ns |
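The values in Table 3 can be reproduced with a few lines of arithmetic. The sketch below assumes the half-amplitude time takes the form To = 4.56 × 10⁻¹⁶ · A² · L², with A in dB/100 ft and L in feet. That constant and the use of feet are assumptions, chosen because they reproduce the tabulated values. The cable rise time is then 28.83 × To, as stated above, and the function name is hypothetical.

```python
METERS_PER_FOOT = 0.3048


def cable_rise_time_s(loss_db_per_100ft, length_m):
    """Skin-effect-limited rise time of a coaxial cable, in seconds.

    Assumes To = 4.56e-16 * A^2 * L^2 (L in feet), then RT = 28.83 * To.
    """
    length_ft = length_m / METERS_PER_FOOT
    t0 = 4.56e-16 * loss_db_per_100ft ** 2 * length_ft ** 2
    return 28.83 * t0


LOSS_DB_PER_100FT = {"RG223": 16.7, "RG58": 17.8}

for length_m in (1, 5, 10, 25):
    row = ", ".join(
        f"{name}: {cable_rise_time_s(a, length_m) * 1e9:.2f} ns"
        for name, a in LOSS_DB_PER_100FT.items()
    )
    print(f"{length_m} m -> {row}")
```

Because the rise time scales with the square of the cable length, doubling the length from 5 m to 10 m quadruples the rise time.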

The rise time of the system then depends on both the rise time of the cable and the rise time of the CableSense pulse and channel.

For the PXIe-5162, the nominal CableSense pulse rise time from Table 1 is 650 ps, and from Table 3 the rise time of a 5 m RG223 cable is approximately 990 ps. Combining the two as the root sum of squares, the rise time to use in the spatial resolution equation at the end of a 5 m cable would be approximately 1.18 ns. Ultimately, this shows that the finest spatial resolution is achieved closest to the oscilloscope input.
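The 1.18 ns figure is consistent with combining the two rise times as the root sum of squares, the usual rule for cascaded stages. The sketch below assumes that rule and then feeds the result into the half-rise-time spatial resolution relation for a dielectric constant of 2.25. The function names are hypothetical.

```python
import math

C = 299_792_458.0  # speed of light in vacuum, m/s


def combined_rise_time_s(*rise_times_s):
    """Root-sum-of-squares combination of independent rise times."""
    return math.sqrt(sum(t * t for t in rise_times_s))


def spatial_resolution_m(rise_time_s, dielectric_constant=2.25):
    """Half the rise time multiplied by the velocity of propagation."""
    return 0.5 * rise_time_s * C / math.sqrt(dielectric_constant)


pulse_tr = 650e-12  # PXIe-5162 nominal pulse rise time (Table 1)
cable_tr = 990e-12  # 5 m RG223 cable rise time (Table 3)

system_tr = combined_rise_time_s(pulse_tr, cable_tr)
print(f"combined rise time: {system_tr * 1e9:.2f} ns")
print(f"resolution at scope input: {spatial_resolution_m(pulse_tr) * 100:.1f} cm")
print(f"resolution after 5 m cable: {spatial_resolution_m(system_tr) * 100:.1f} cm")
```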

Measurements With Attenuators

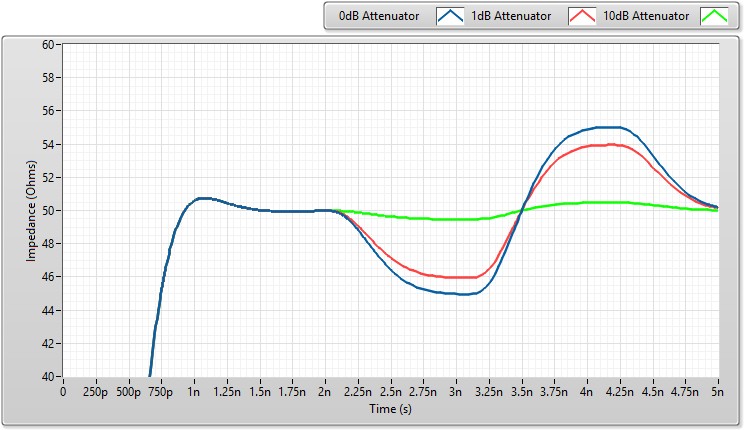

In many applications, attenuators are included in line with the cabling, as shown in the example in Figure 14.

Figure 14. Cabling Configuration With Inline Attenuator

In practice, reflected signals after an attenuator are reduced as they propagate back toward the oscilloscope channel, which makes impedance discontinuities less pronounced. Though this typically has a positive effect on measurement quality, it reduces the ability of the CableSense feature to detect cable variations. Figure 15 shows this result, comparing no attenuation with both a 1 dB and 10 dB inline attenuator.

Figure 15. Effect of Various Attenuators on Returned Results

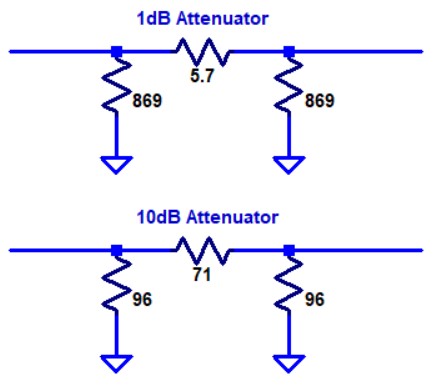

In addition, the impedance the oscilloscope detects varies depending on the attenuation level you use. For example, using the resistor values shown in Figure 16, a 1 dB attenuator has an open-cable impedance of approximately 436 Ω while a 10 dB attenuator has an open-cable impedance of approximately 61 Ω.

Figure 16. 1 dB and 10 dB 50 Ω Pi Attenuators
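The open-cable impedances quoted above can be checked with basic resistor arithmetic. The sketch below assumes ideal, symmetric 50 Ω pi attenuators whose resistor values come from the standard design equations, which may differ slightly from the values in Figure 16. With the far end open, the oscilloscope sees the near shunt resistor in parallel with the series resistor plus the far shunt resistor. The function names are hypothetical.

```python
def pi_attenuator_resistors(atten_db, z0=50.0):
    """Ideal symmetric pi attenuator: return (shunt, series) in ohms."""
    k = 10 ** (atten_db / 20.0)  # voltage attenuation ratio
    r_shunt = z0 * (k + 1) / (k - 1)
    r_series = z0 * (k * k - 1) / (2 * k)
    return r_shunt, r_series


def open_cable_impedance(atten_db, z0=50.0):
    """Settled impedance seen through the attenuator with an open far end."""
    r_shunt, r_series = pi_attenuator_resistors(atten_db, z0)
    far_branch = r_series + r_shunt  # series arm feeding the far shunt arm
    return r_shunt * far_branch / (r_shunt + far_branch)


for db in (1, 10):
    print(f"{db} dB: {open_cable_impedance(db):.0f} ohm")
```

The higher the attenuation, the closer the open-cable impedance sits to 50 Ω, which is why the end of the cable becomes harder to identify programmatically.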

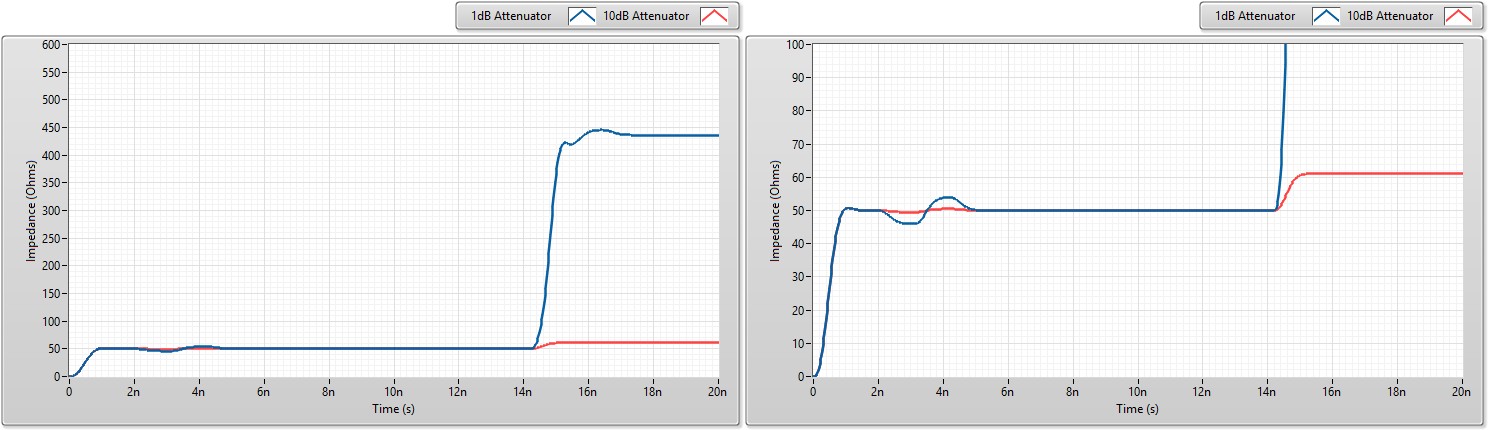

The impedances the CableSense pulse detects are shown in Figure 17 for the cable setup in Figure 14. The plot on the left shows that the 1 dB inline attenuator setup settles at the end of the cable to 436 Ω, while the 10 dB inline attenuator setup settles at 61 Ω. The plot on the right shows the same data zoomed in. You can use both cable setups to determine cable length; however, the higher the attenuation level, the more difficulty you may have programmatically calculating distance while ensuring that other impedance discontinuities do not lead to misidentifying the end of the cable.

Figure 17. End-of-Cable Detection for Cables With 1 dB and 10 dB Inline Attenuators

Mitigate Risk With CableSense Technology

Going down the wrong troubleshooting path is not only frustrating but costly; your whole team’s project can get pushed back another day. Every time you miss a deadline because of a last-minute issue, you tell yourself it’s the last time a frustrating, yet avoidable mistake slips through. But, until now, how could you really be sure?

The simplest, although least effective, route is to ignore the possibility of electrical path failures until necessary (or, until they’re already urgent). For additional peace of mind, some organizations may routinely replace cables before needed or schedule downtime for connection health verification using an external TDR. But frequent replacements require extra budget, and external TDRs take extra time given that each test point must be disconnected from the test station and reassembled after verification.

Given its automation capability, CableSense technology can help you mitigate your overall risk by catching setup changes early and qualifying the integrity of the measurements that follow, all without disrupting your typical workflow. Whether you’re trying to be first to market or just sticking to a strict schedule, chances are you don’t have time for downtime.

Next Steps

- Explore PXI Oscilloscopes

- Download LabVIEW code for an example CableSense implementation

i Pulse Response of Coax Cables, Lawrence Radiation Laboratory, University of California, Berkeley