Impact of Security on Automated Test Systems

Overview

As organizations look to combat increasing cyber-security threats, special-purpose test systems present unique challenges. In today’s digital age, a compromised test system can wreak havoc on an organization’s reputation and revenue. By recognizing the ways in which test systems differ from traditional IT systems, this paper examines the key cyber-security trends of our time, illustrates those trends with real-world examples, and offers practical steps for navigating a path forward.

Contents

- Trend 1: Applying IT Security to Test Systems

- Trend 2: Supply-Chain Compromise

- Trend 3: Growing Attention to the Insider Threat

- Where to Go From Here

- Next Steps

Trend 1: Applying IT Security to Test Systems

Imagine yourself in this all-too-familiar scenario for those in the manufacturing industry. Your phone rings at 2:15 a.m., jolting you from sleep. The subsequent conversation delivers news of an event that requires your urgent attention.

Production line C was halted in the middle of a run because two programmable logic controllers (PLCs) in the end-of-line production test system, which ensures product quality, failed. The manufacturing control center lost communication with the PLCs 30 minutes ago and can’t determine whether they are trustworthy enough to bring back online. Three of these incidents have already happened this month, and now a fourth? This time, however, your manufacturing team was prepared and is shifting production to an adjacent facility with spare capacity. With luck, this will reduce the net production losses.

As the team discovered days later, these failures were the result of a cyber-security incident. But rather than an external attack, it was a case of friendly fire. The IT department had recently implemented nightly scans of all networked devices to assess their security. Test equipment used to be exempt from most IT protocols, but executive leadership changed this policy because they could no longer tolerate the cyber-security risks of unmonitored network devices. The rudimentary software on the PLCs, likely developed decades before the security scanning software existed, could not cope: the nightly scans overwhelmed the two PLCs with more network packets than they could handle and triggered their failure response, shutdown.

The Key Issues

The trend of applying IT security practices to test systems makes sense for several reasons, most notably the rise in cyber-security incidents that exploit unmonitored network devices. No CEO wants to be in the position of the Target CEO, whose point-of-sale systems were compromised by an attack that originated through the heating and ventilation system controllers. Similarly, no executive can stomach the economic impact of large production losses should test equipment be attacked through the corporate IT systems.

Another reason this trend makes sense is that security practices and technology for general-purpose IT systems are more mature. To protect systems and detect compromise, IT security staff have a range of options, from network discovery scanners and intrusion detection technology to desktop antivirus and monitoring agents. The natural response is to extend these security best practices and technologies to special-purpose test systems and devices, especially when those practices are used to comply with a regulated standard such as NIST SP 800-171.

However, this trend does not apply categorically, for at least two reasons. First, IT-enabled test systems are less tolerant of even small configuration changes. Users of general IT systems can tolerate some downtime and may not even perceive differences in application performance, but special-purpose test systems (especially those used in production) often cannot. Even small changes in performance characteristics caused by a security patch or a new security feature can negatively affect test outcomes or the quality of the collected test data. Likewise, even brief downtime in a production test system can significantly affect an organization’s revenue.

Second, test systems often have unique security needs. They typically run specialized test software not used on other organization computers, and they are equipped with special-purpose peripherals that standard IT security technologies do not address. For example, test peripherals that require calibration to supply accurate measurements can degrade or even undermine test quality if their calibration data is altered, whether maliciously or inadvertently. Blindly applying IT security practices to these test systems can create a false sense of security, because those practices don’t address the cyber-security risks unique to test systems.

What You Can Do

The preferred approach for securing test equipment involves two key components. First, use data to decide which IT security measures to adopt for your test system and how extensively to apply them. This gives you the information you need to engage IT security staff in assessing and managing risk. Second, supplement those IT security measures with test-system-specific security features that address the unique risks. This fills the gaps that standard IT security practices can’t cover.

You can use the annual Verizon Data Breach Investigations Report (DBIR) as a source of data. In this report, Verizon analyzes data collected about the prior calendar year’s disclosed cyber-security breaches. A portion of the 2016 Verizon DBIR analyzes active cyber attacks that preyed on vulnerabilities patched by major software vendors. Hackers use a technique that exploits the lag between a vendor’s release of a patch and the installation of that patch on a computer. By reverse engineering the vendor patch to discover where the vulnerability lies in the unpatched software, the hacker develops an exploit that targets that vulnerability. Hackers actively begin exploitation within two to seven calendar days after a patch release, focusing heavily on major software vendors.

You can use this data to make more accurate risk decisions about patching your test systems. To reduce your risk, first install security patches within seven days of their release. This means you must monitor software vendor notifications, evaluate patch applicability, and rapidly requalify affected systems. Second, minimize the software installed on your test systems. The upfront time spent doing so quickly pays for itself in reduced patching and requalification costs. These steps are especially important for higher-risk test systems, such as those used in manufacturing or production.
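The seven-day guideline is easy to track mechanically. The following Python sketch flags patches that were installed, or are still pending, past the window. The `Patch` record, the `missed_window` function, and the sample patch names are hypothetical; in practice the release and install dates would come from your vendors’ notifications and your own change records.

```python
# Hypothetical sketch: names and sample data are illustrative only.
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional

# Window taken from the guidance above; tune it to your own risk assessment.
PATCH_WINDOW = timedelta(days=7)


@dataclass
class Patch:
    name: str                         # hypothetical patch identifier
    released: date                    # date the vendor released the patch
    installed: Optional[date] = None  # None while the patch is still pending


def missed_window(patches: List[Patch], today: date) -> List[str]:
    """Return the names of patches that were not installed within the window."""
    late = []
    for patch in patches:
        deadline = patch.released + PATCH_WINDOW
        # A pending patch is late once today is past the deadline;
        # an installed patch is late if it went in after the deadline.
        effective = patch.installed if patch.installed is not None else today
        if effective > deadline:
            late.append(patch.name)
    return late


if __name__ == "__main__":
    history = [
        Patch("os-update-A", date(2024, 3, 1), date(2024, 3, 5)),  # on time
        Patch("driver-B", date(2024, 3, 1)),                       # pending, late
        Patch("runtime-C", date(2024, 3, 10)),                     # pending, within window
        Patch("browser-D", date(2024, 3, 1), date(2024, 3, 9)),    # installed late
    ]
    print(missed_window(history, today=date(2024, 3, 12)))  # prints ['driver-B', 'browser-D']
```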

The second key component involves making use of vendor-specific security features. For example, given how crucial calibration data, test parameters, and test sequences are to maintaining test quality, you can use technologies such as file integrity monitoring and calibration integrity features that are specifically configured for your test system and its components. Similarly, you can refer to security documentation from your test system vendors to guide your test system purchase, design, and deployment decisions toward options that provide better security.
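At its core, file integrity monitoring records trusted hashes of critical files and later compares fresh hashes against them. The sketch below assumes the calibration files, test parameters, and test sequences live under one directory; it builds a SHA-256 baseline and reports modified, missing, and added files. The function names are hypothetical, and this is not a substitute for a commercial monitoring tool. In particular, store the baseline somewhere the test system cannot write to; otherwise an attacker who alters a file can simply update the baseline too.

```python
# Hypothetical sketch of a file integrity baseline; function names are illustrative.
import hashlib
import tempfile
from pathlib import Path
from typing import Dict, List


def sha256_file(path: Path) -> str:
    """Hash a file in chunks so large sequence or data files need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_baseline(root: Path) -> Dict[str, str]:
    """Map each file's path (relative to root, POSIX style) to its SHA-256 digest."""
    return {
        path.relative_to(root).as_posix(): sha256_file(path)
        for path in sorted(root.rglob("*"))
        if path.is_file()
    }


def compare(baseline: Dict[str, str], current: Dict[str, str]) -> Dict[str, List[str]]:
    """Classify differences between a trusted baseline and a fresh scan."""
    shared = baseline.keys() & current.keys()
    return {
        "modified": sorted(name for name in shared if baseline[name] != current[name]),
        "missing": sorted(baseline.keys() - current.keys()),
        "added": sorted(current.keys() - baseline.keys()),
    }


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        (root / "calibration.ini").write_text("gain=1.0002\n")
        trusted = build_baseline(root)
        (root / "calibration.ini").write_text("gain=1.0500\n")  # simulated tampering
        print(compare(trusted, build_baseline(root)))
```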

Questions to Ask Your Test System Supplier

How compatible is your software with Windows OS security features?

NI tests its software for compatibility with Windows security features so that you can enable them as needed in your environment. You can run all NI application software with standard user privileges.

Can I safely remove software component “X” to reduce my security risk?

With NI software installers, you can customize the installation so that you can install only the products you need while ensuring you have all the necessary dependencies. In addition, NI applications give you the ability to build your own custom installers so that you can deploy your application with the minimum runtime environment and dependencies.

What additional safeguards can I use to protect the test software and my test applications?

You can find file integrity monitoring tools on the market. NI is currently evaluating these types of tools to determine how they might be configured to work with NI software. Contact NI for details.

Where can I find security information for your software and hardware?

NI provides documentation about network ports and protocols, Windows services, memory sanitization, and critical security updates.

Trend 2: Supply-Chain Compromise

News of malicious software (malware) targeting industrial control systems brought a surprise in 2014. This was not the work of hackers remotely penetrating the defenses of a particular factory or of covert operatives installing malware at a refinery. Instead, the malware had been installed through vendor software that contained a trojan.

The campaign was originally dubbed “Energetic Bear” because it targeted electric power plants and was thought to have originated in Russia. One aspect of the campaign involved the supply chain: the attackers compromised three different software vendors whose websites offered industrial control system software for customer download. Once they had access to the files on a website, the hackers altered the legitimate vendor software installer by inserting malware into it and then saved the file in its original location. It was only a matter of time before customers downloaded and installed the trojanized software. The economic impact on both the software vendors and their customers is unknown.

In a more sophisticated case, Kaspersky Labs discovered a supply-chain compromise of several vendors’ commercial hard drives in 2010 that dated back to as early as 2005. They found firmware embedded in hard drive controllers that appeared to operate the hard drive normally. However, it secretly stored a copy of what it considered to be sensitive information in unused areas of the nonvolatile memory containing the firmware. Because the altered firmware had no external communication capability, an operative would presumably collect the hard drive after it had been decommissioned to recover the sensitive information. Notice that the sensitive information would remain recoverable even if the contents of the hard drive were sanitized before disposal.

The Key Issues

The Energetic Bear campaign’s website compromise indicates that the integrity of a test system (or any system) relies on the integrity of its components throughout their life cycle. Every place where components change hands, and every location where they sit idle for an extended period, represents an opportunity for compromise. Establishing a clear chain of custody is vital, and so are safeguards at each stage that protect against and detect a compromised component.
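One way to make a chain of custody tamper-evident is a hash-chained log, in which each handoff record includes the hash of the previous record. The sketch below is a hypothetical illustration, not a mechanism this paper attributes to NI or to any standard: `CustodyLog` records who held a component and the component’s own digest at each stage, `verify` detects after-the-fact edits to records, and `first_digest_change` points to the first stage at which the component itself changed. A hash chain alone does not stop someone from rewriting or truncating the whole log, so the latest hash would also need to be kept somewhere independent.

```python
# Hypothetical sketch of a hash-chained custody log; class and field names are illustrative.
import hashlib
import json
from typing import Dict, List, Optional

GENESIS = "0" * 64  # placeholder "previous hash" for the first record
FIELDS = ("holder", "action", "component_digest", "prev")


def _record_hash(record: Dict[str, str]) -> str:
    """Hash the record's fields using a canonical JSON encoding."""
    body = {key: record[key] for key in FIELDS}
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class CustodyLog:
    def __init__(self) -> None:
        self.entries: List[Dict[str, str]] = []

    def record(self, holder: str, action: str, component_digest: str) -> None:
        """Append a handoff record linked to the previous one."""
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        entry = {"holder": holder, "action": action,
                 "component_digest": component_digest, "prev": prev}
        entry["hash"] = _record_hash(entry)
        self.entries.append(entry)

    def verify(self) -> bool:
        """Return False if any record was edited or the links between records are broken."""
        prev = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev or entry["hash"] != _record_hash(entry):
                return False
            prev = entry["hash"]
        return True

    def first_digest_change(self) -> Optional[int]:
        """Index of the first stage whose component digest differs from the origin."""
        if not self.entries:
            return None
        origin = self.entries[0]["component_digest"]
        for index, entry in enumerate(self.entries):
            if entry["component_digest"] != origin:
                return index
        return None
```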

The Kaspersky hard drive compromise discovery indicates that the sophisticated hackers of the world are willing to reach into a vendor’s development process to access unpublished vendor source code. In this case, the stolen vendor source code was used to create fully installable and functional variants that were installed into the compromised hard drives well after the hard drives had been purchased and put into service.

No aspect of a product is immune to a supply-chain compromise. Any installer, even for seemingly insignificant plugins or add-ons, could have been compromised by the Energetic Bear campaign. The same was true for the seemingly insignificant firmware in the hard drive controllers that allowed field updates without strong security checks.

You must understand the trade-offs between supplier diversity and standardization in addressing cyber-security risk. Diversification has the advantage of reducing the risk of system-wide compromise because of the compromise of one supplier’s component, but this advantage is often outweighed by the sustainability costs for training staff on multiple types of equipment and managing all the supplier relationships. Standardization reduces these sustainability costs but carries greater risk of a system-wide compromise.

What You Can Do

Standardization has so many cost benefits that it is difficult to justify supplier diversity except in high-risk scenarios. The most feasible approach involves supplier standardization where an evaluation of the supplier’s supply-chain security is a significant part of the decision criteria.

Most organizations already have suppliers on which they have standardized. In this case, both you and the supplier have a vested interest in maintaining the relationship. The most important thing you can do to address supply-chain security is talk with your suppliers. Ask them about their supply chain and what they do to protect the integrity of their products throughout their development, manufacturing, and order fulfillment processes. Your insights into any weaknesses in their processes can reduce your risk of supply-chain compromise and help your suppliers shore up their security. Without that dialog, both sides risk making uninformed decisions.

Besides prevention, ensure that the dialog with your suppliers includes ways to detect when compromise has occurred. Any security system can be compromised given enough motivation and resources. Make sure there are sufficient checks in the system to detect when compromise has occurred and there are clear instructions about how to respond. In a case like the Energetic Bear website compromise, the “last leg” detection mechanism could be a digital signature check of the installer, but that detection mechanism needs to be paired with proper procedures and training that result in an aborted installation and a help desk ticket. In a case like the hard drive firmware compromise, an inquiry into the supplier’s firmware update design would reveal a protection gap with no way to verify the integrity of the installed firmware.
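The “last leg” check pairs detection with a response. Windows installers are normally verified through Authenticode digital signatures, which is OS-specific, so this Python sketch substitutes a simpler and plainly different technique: comparing the installer’s SHA-256 digest against a digest obtained through an independent channel, such as a separately delivered validation medium. On a mismatch it aborts and calls a hypothetical `open_ticket` hook that stands in for your help desk process.

```python
# Hypothetical sketch: a digest comparison standing in for a signature check.
import hashlib
import hmac
import tempfile
from pathlib import Path
from typing import Callable


class InstallerRejected(Exception):
    """Raised when an installer fails its integrity check; do not run it."""


def sha256_file(path: Path) -> str:
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_installer(path: Path, expected_sha256: str,
                     open_ticket: Callable[[str], None]) -> None:
    """Report and raise if the installer does not match the trusted digest."""
    actual = sha256_file(path)
    # The expected digest must come from a channel independent of the download site.
    if not hmac.compare_digest(actual, expected_sha256.strip().lower()):
        open_ticket(f"Integrity check failed for {path.name}: got {actual}")
        raise InstallerRejected(path.name)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        installer = Path(tmp) / "setup.exe"
        trusted = hashlib.sha256(b"legitimate installer bytes").hexdigest()
        installer.write_bytes(b"trojanized installer bytes")  # simulated website tampering
        try:
            verify_installer(installer, trusted, open_ticket=print)
        except InstallerRejected:
            print("Installation aborted.")
```

The key design point is that the caller runs the installer only if `verify_installer` returns normally, so a failed check cannot silently fall through to installation.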

Questions to Ask Your Test System Supplier

How can I verify that the software I receive from you is legitimate?

NI digitally signs all its Windows software installers so that you (and Windows) can verify that it is from NI and unaltered. In addition, NI offers a secure software delivery solution that adds physical assurance to software delivery. Secure software delivery provides tamper-evident packaging and traceable shipment of NI software on optical disks plus an independently shipped validation disk to verify the file integrity of the software in the other package.

How do you protect your supply chain from counterfeit parts and altered components?

NI follows rigorous quality practices with respect to where it sources parts and how it assembles the final product. NI conducts background checks on the personnel involved in manufacturing and test, and has numerous checks and balances to ensure you receive a high-quality product. Contact NI for more details.

How can I verify that no one has tampered with my hardware calibration data?

NI hardware modules require a password to make changes to the long-term calibration data. Manage this password with the same diligence you apply to your Windows password. NI is currently developing a calibration integrity feature for selected hardware modules. This feature would give you the ability to verify that the long-term calibration data has not changed since its certified calibration.
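NI’s planned feature is not described here, but the general idea of verifying that calibration data is unchanged since certification can be sketched with a keyed hash (HMAC). A plain hash stored beside the data is weak for this purpose, because anyone able to alter the constants could also recompute it; an HMAC requires a secret key, which in this hypothetical scheme is held by the calibration lab rather than the test system. The data layout, function names, and key handling below are assumptions for illustration.

```python
# Hypothetical sketch: not NI's calibration integrity feature; names are illustrative.
import hashlib
import hmac
import json
from typing import Any, Dict


def seal(calibration: Dict[str, Any], key: bytes) -> str:
    """Compute an HMAC-SHA256 tag over a canonical encoding of the calibration constants."""
    message = json.dumps(calibration, sort_keys=True, separators=(",", ":")).encode()
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def unchanged_since_certification(calibration: Dict[str, Any], tag: str, key: bytes) -> bool:
    """True only if the constants still match the tag issued at certification time."""
    return hmac.compare_digest(seal(calibration, key), tag)


if __name__ == "__main__":
    lab_key = b"example-key-held-by-calibration-lab"  # hypothetical; never stored on the test system
    certified = {"range_10V": {"gain": 1.00012, "offset": -0.0003}}
    tag = seal(certified, lab_key)                   # issued with the calibration certificate
    tampered = {"range_10V": {"gain": 1.00500, "offset": -0.0003}}
    print(unchanged_since_certification(certified, tag, lab_key))
    print(unchanged_since_certification(tampered, tag, lab_key))
```

Because verification also needs the key, this check would run in a trusted tool rather than on the test system itself; a digital signature scheme would allow verification without sharing the secret.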

How do your products support vendor diversification?

NI complies with industry standards whenever possible to provide the broadest interoperability with other vendors’ products. For example, NI products conform to PCI, PXI, IEEE, IETF, and ISO/IEC communication standards and use industry-standard technologies such as IVI and OPC-UA. NI participates in many of these standards committees as well.

Trend 3: Growing Attention to the Insider Threat

The Edward Snowden leak of volumes of classified surveillance data from the National Security Agency is the most likely cause of increased attention to the insider threat. His actions have resulted in an estimated $22 to $35 billion in economic losses to the US technology industry because of the resulting distrust in US technology. But it isn’t the first case of insider threat.

Timothy Lloyd of Omega Engineering became infamous for his insider activity in 1996. Microsoft Windows 95 was state of the art, and cyber security was rarely (if ever) discussed in mainstream media. What Lloyd accomplished as a privileged insider was staggering for the time. He worked at the manufacturing site as a system administrator. When he learned that he would be dismissed, he installed a software time bomb that systematically deleted all the manufacturing software from the systems under his control. The time bomb triggered when the first administrator logged in to the network the day after Lloyd’s dismissal. The economic impact of this event on Omega Engineering amounted to several million dollars and the loss of 80 jobs, and it almost forced the company into bankruptcy.

The Key Issues

The key issues in this area are multifaceted and are still a significant research topic. The issues include attentiveness to anyone who has access to critical test systems, regardless of their status as employees or contractors. They involve a clear identification of the most critical aspects of the business and the people who have a role in those aspects of the business and how authority is distributed among them. Solutions typically involve a significant degree of behavioral monitoring, which can negatively affect the interpersonal trust needed for operational efficiency.

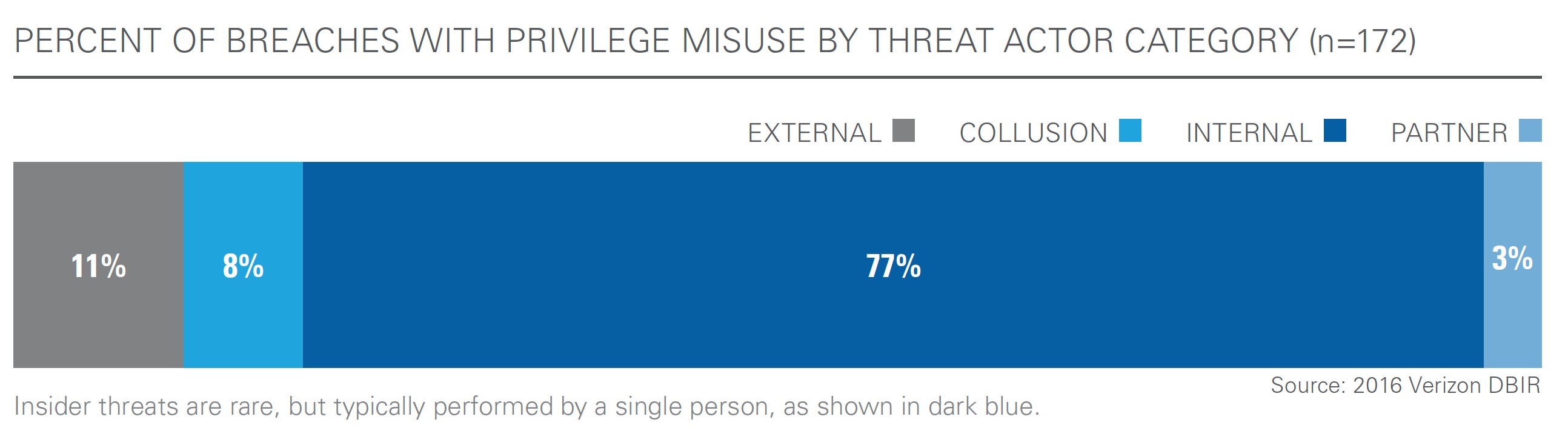

Insider threats are low-probability but high-impact events, an assertion that the 2016 Verizon DBIR supports. Of over 64,000 cyber-security incidents in 2015, only 172 involved misuse of privilege by an insider. Over 75 percent of those insider incidents were carried out alone, without external assistance or collusion with another insider.
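The low-probability half of that assertion follows directly from the figures: insider misuse of privilege accounted for well under one percent of incidents, and because the total was over 64,000, the true share is slightly smaller still.

$$\frac{172}{64{,}000} \approx 0.0027 = 0.27\%$$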

What You Can Do

Except for high-criticality systems, addressing the insider threat is best done after you have tackled the basics described in the previous trends. Those other trends speak to the most probable ways that your test systems can be compromised.

For high-criticality systems, however, address the insider threat as early in the design process as possible. After you have identified the most sensitive or mission-critical aspects of the system, design a privilege management solution that separates the critical duties into at least two roles, ensures that no single individual can hold both roles, and rejects any attempt to combine both duties in a single role. According to the Verizon DBIR data, that moves the odds in your favor, from the 77-percent-acted-alone bucket into the eight-percent-had-to-collude bucket.
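A privilege management policy of this kind can be enforced at assignment time. The Python sketch below models roles as sets of duties and rejects both a role that bundles conflicting duties and an assignment that would give one person conflicting duties. The `AccessPolicy` class and the duty and role names are hypothetical, and a real system would also need to re-check existing assignments whenever a role definition changes.

```python
# Hypothetical sketch of separation-of-duties enforcement; names are illustrative.
from typing import Dict, FrozenSet, Iterable, Set, Tuple


class SeparationOfDutiesError(Exception):
    """Raised when a role or assignment would combine conflicting duties."""


class AccessPolicy:
    def __init__(self, conflicting_duties: Iterable[Tuple[str, str]]) -> None:
        # Each conflict is a pair of duties that one person must never hold together.
        self._conflicts = [frozenset(pair) for pair in conflicting_duties]
        self._roles: Dict[str, FrozenSet[str]] = {}
        self._assignments: Dict[str, Set[str]] = {}

    def _violates(self, duties: FrozenSet[str]) -> bool:
        return any(pair <= duties for pair in self._conflicts)

    def define_role(self, role: str, duties: Iterable[str]) -> None:
        """Reject any single role that bundles conflicting duties."""
        duty_set = frozenset(duties)
        if self._violates(duty_set):
            raise SeparationOfDutiesError(f"role {role!r} combines conflicting duties")
        self._roles[role] = duty_set

    def duties_of(self, user: str) -> FrozenSet[str]:
        """All duties a user holds across every assigned role."""
        roles = self._assignments.get(user, set())
        return frozenset().union(*(self._roles[role] for role in roles))

    def assign(self, user: str, role: str) -> None:
        """Reject an assignment that would give one person conflicting duties."""
        combined = self.duties_of(user) | self._roles[role]
        if self._violates(combined):
            raise SeparationOfDutiesError(f"{user!r} cannot also hold {role!r}")
        self._assignments.setdefault(user, set()).add(role)
```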

Question to Ask Your Test System Supplier

How do you protect your software’s source code internally?

NI implements numerous layers of protection for its software source code: NI requires unique usernames and passwords to make changes, limits access to the engineering groups involved in development, and periodically reviews the access control lists. Each engineering group chooses either to restrict code changes to members of its access control list or to allow nonmembers to submit code changes. For groups that accept changes from nonmembers, NI requires notification of such source code changes and a code review by a group member.
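The change-acceptance rules in that answer can be expressed as a small policy check. This Python sketch is a hypothetical model of the policy as described, not NI’s actual tooling: it assumes authors are already authenticated by their unique usernames, and that notification status and reviewer identities are tracked per change. `CodeGroup`, `Change`, and `may_merge` are illustrative names.

```python
# Hypothetical model of the described source code access policy; names are illustrative.
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class CodeGroup:
    members: FrozenSet[str]          # usernames on the group's access control list
    accepts_nonmember_changes: bool  # the group's chosen policy


@dataclass(frozen=True)
class Change:
    author: str
    reviewers: FrozenSet[str] = frozenset()
    members_notified: bool = False


def may_merge(group: CodeGroup, change: Change) -> bool:
    """Apply the described access rules to a proposed source code change."""
    if change.author in group.members:
        return True                      # members may change code directly
    if not group.accepts_nonmember_changes:
        return False                     # restricted group: nonmembers are refused
    # Nonmember changes need a notification plus a review by at least one member.
    reviewed_by_member = bool(change.reviewers & group.members)
    return change.members_notified and reviewed_by_member
```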

Where to Go From Here

Addressing the cyber-security needs of a test system is complex. The effort can either get bogged down in an endless list of potential security risks or never get started because it seems overwhelming. Realistically, perfect security is unachievable, because every solution can theoretically be compromised given enough resources and time. Instead of either extreme, prioritize issues based on realistic scenarios, address the most important issues first, and apply common sense along the way.

Start by building a consensus among the people involved (for example, your management, team, IT security staff, and suppliers) that addressing security threats is important to everyone. This starting point also raises awareness among all relevant personnel of the nature of cyber-security threats and the potential negative impact of security incidents on their mutual success. Next, allocate time and money specifically for cyber-security projects, training, and technology. Because cyber-security threats to test systems are real and pose a financial risk to your organization, some of the organization’s resources should be dedicated to assessing and addressing cyber-security needs. After realistically assessing how cyber-security threats can affect your operations, allocate resources in proportion to those needs.

Next Steps

- Learn more about NI security

- Read more about best practices for building a test system

- Configure your test system