RF Front End Testing with NI PXI

Overview

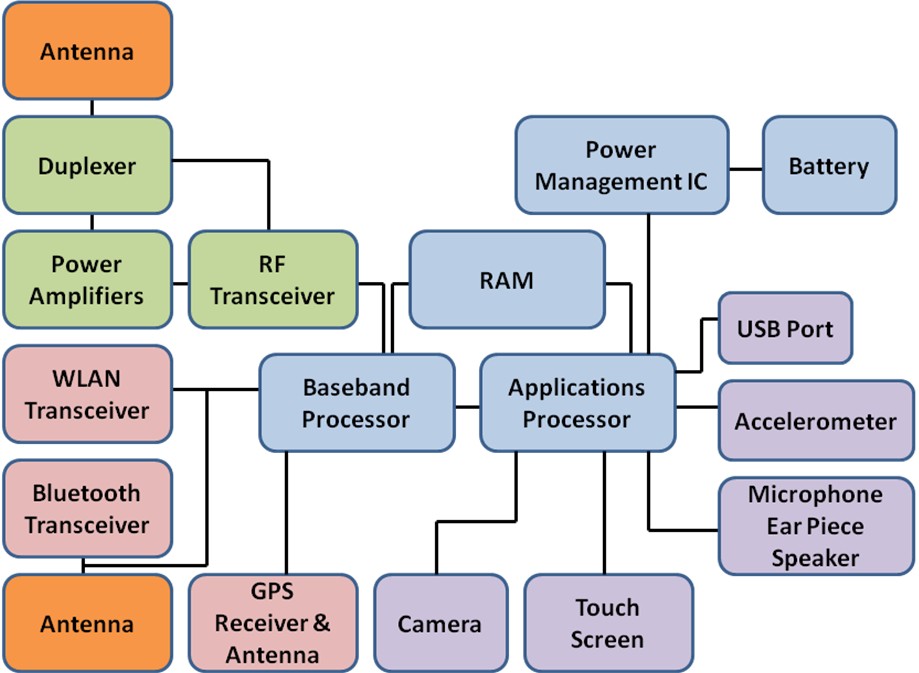

What composes an RF front end in today’s radio devices? If you were to take apart your mobile phone, you would see an assortment of chips with different functions that make wireless communication possible. This white paper focuses on the duplexer, power amplifiers (PAs), and RF transceiver.

Contents

- Components of a Typical Phone

- RF Front-End Devices Versus Other Mobile Device Components

- Importance of Test for RF Front-End Devices

- Common Tests for RF Front-End Devices

- Typical Setup and Device Control for RF Front-End Test

- Common Test Equipment for RF Front-End Testing

- Performing RF Front-End Test With National Instruments PXI Products

- Next Steps

Components of a Typical Phone

Figure 1: A typical phone layout consists of many components that make wireless communication possible.

Antenna

Integrating different antennas into a phone can be difficult. Because each standard uses different frequencies, a dedicated antenna for each one gives the best performance. In some cases, you can share antennas by using filtering or by accepting some loss from a nonideal antenna length. Consider a typical phone that must support multiple bands for coverage in different countries. For example, you may need to support GSM bands as low as 380 MHz and as high as 1,900 MHz. You can determine the required antenna length from the wavelength of the radio signal.

Based on a simplified dipole antenna design formula, the antenna lengths therefore vary from 7.5 cm to 37 cm.
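The 7.5 cm and 37 cm figures are consistent with the common simplified half-wave dipole formula L ≈ 0.95 × λ/2 = 0.95 × c/(2f), where the 0.95 factor is an assumed allowance for end effects. The following Python sketch applies that formula under this assumption; it is a back-of-the-envelope estimate, not an antenna design method.

```python
C = 299_792_458.0  # speed of light in m/s

def dipole_length_m(freq_hz: float, shortening: float = 0.95) -> float:
    """Approximate half-wave dipole length in meters.

    Assumes the simplified formula L = k * (c / f) / 2, where k (about 0.95)
    is an end-effect shortening factor.
    """
    wavelength = C / freq_hz
    return shortening * wavelength / 2

for f_mhz in (380, 1900):
    print(f"{f_mhz} MHz -> {dipole_length_m(f_mhz * 1e6) * 100:.1f} cm")
# 380 MHz -> 37.5 cm
# 1900 MHz -> 7.5 cm
```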

Besides sharing antennas, phone manufacturers face the additional challenge of matching the impedance of the antenna to the impedance of the rest of the electronics. When an antenna comes into contact with nonideal media, such as a metallic table or some other simple grounding, its electrical impedance varies. The resulting mismatch causes signal reflection, which makes power management challenging for a phone. New technologies such as microelectromechanical systems (MEMS) look promising for mechanically compensating for these impedance changes at a very rapid rate.

Duplexer

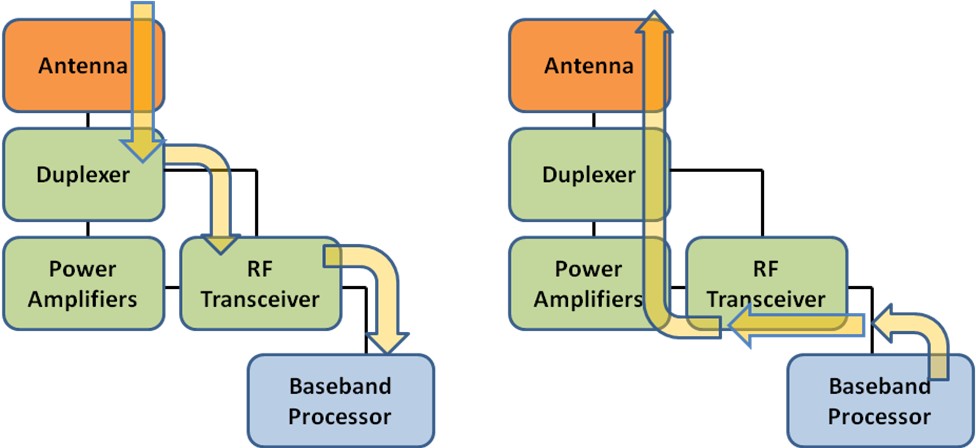

With a duplexer, the transmission and reception of the main cellular signal can share the same antenna. In the case of the phone, the duplexer acts like a rapid switching device. On the receive path, the signal from the base station typically goes through a low-noise amplifier (LNA) for gain, is downconverted by the RF transceiver, and is finally passed to the baseband processor (see Figure 2). On the transmit path, the generated signal goes through the PA for gain before transmission back to the base station.

Figure 2: The phone receives the RF signal in the left image and generates the RF signal in the right image.

Power Amplifier

One of the most important components in the mobile phone is the power amplifier (PA), which provides gain to the generated RF signal. Depending on the standard, a phone's PA can output up to 30 dBm (1 W). Because the PA affects battery life more than other components in the phone, particular attention is paid to making it as efficient as possible.
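Because power levels throughout this paper are quoted in dBm, a small conversion helper may be useful. The Python sketch below uses the standard definition P(dBm) = 10 log10(P / 1 mW); the function names are illustrative.

```python
import math

def dbm_to_watts(p_dbm: float) -> float:
    """Convert power in dBm (dB relative to 1 mW) to watts."""
    return 1e-3 * 10 ** (p_dbm / 10)

def watts_to_dbm(p_w: float) -> float:
    """Convert power in watts to dBm."""
    return 10 * math.log10(p_w / 1e-3)

print(dbm_to_watts(30))    # ≈ 1.0 W, the maximum PA output quoted above
print(watts_to_dbm(0.5))   # ≈ 27.0 dBm
```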

RF Transceiver

The RF transceiver is the primary front end for the baseband processor. It downconverts the received signal from the RF carrier frequency to an intermediate frequency (IF), typically below 100 MHz, and often, through further signal processing, down to baseband (0 Hz) to recover the original transmitted complex data. In the other direction, it upconverts the baseband data from the processor, typically through an I/Q modulator, directly to an RF frequency.
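The digital part of the downconversion step can be illustrated in software. The Python sketch below assumes a real-valued IF signal sampled at a known rate and recovers the transmitted complex value A·e^(jφ) by mixing with a complex exponential at the IF and low-pass filtering. The sample rate, IF, and boxcar (moving-average) filter are illustrative choices; a real receiver would typically use proper FIR or decimating filters.

```python
import cmath
import math

def downconvert(if_samples, f_if, fs, taps):
    """Mix a real IF signal to complex baseband and low-pass filter it.

    Mixing by 2*exp(-j*2*pi*f_if*t) shifts the wanted signal to 0 Hz and
    leaves an image at -2*f_if. A moving average of `taps` samples removes
    that image when the window spans whole periods of it.
    """
    mixed = [2 * x * cmath.exp(-2j * math.pi * f_if * n / fs)
             for n, x in enumerate(if_samples)]
    # Moving-average low-pass filter (valid output region only).
    return [sum(mixed[i:i + taps]) / taps
            for i in range(len(mixed) - taps + 1)]

fs, f_if = 100e3, 10e3           # assumed sample rate and IF, in Hz
amp, phase = 0.8, math.pi / 3    # "transmitted" complex value A*exp(j*phi)
if_signal = [amp * math.cos(2 * math.pi * f_if * n / fs + phase)
             for n in range(200)]
baseband = downconvert(if_signal, f_if, fs, taps=10)
print(abs(baseband[0]), cmath.phase(baseband[0]))  # ≈ 0.8 and ≈ 1.047 rad
```

With a 10 kHz IF at 100 kS/s, the image at −20 kHz has a 5-sample period, so the 10-tap window cancels it exactly.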

Baseband Processor

Although the baseband processor is not the focus of this white paper, it is important to understand its function. The baseband processor collects the captured data from the RF transceiver and extracts the raw data through demodulation and other signal processing. This content can be anything from audio to video or browser data for Web surfing. The processor also performs the reverse, processing and modulating data for transmission. Besides managing the physical-layer portion of the data, it also handles the signaling the phone needs to communicate with the base station.

RF Front-End Devices Versus Other Mobile Device Components

One difference between RF front-end devices, such as the PA, and other mobile device components is the way they are made. Because silicon (Si) performs poorly with microwave signals, it is not commonly used for RF devices. Instead, PAs and other RF front-end devices are made with gallium arsenide (GaAs), which is the most common compound semiconductor. However, newer devices also use indium phosphide (InP), silicon germanium (SiGe), and gallium nitride (GaN). These materials offer faster transistor junctions and tolerate higher frequency signals. Their disadvantages are that they are more expensive to fabricate and come in smaller wafer sizes. For these reasons, there is considerable research and development aimed at moving microwave devices to silicon.

Importance of Test for RF Front-End Devices

Developing a phone with all of its components can lead to many issues or errors without proper testing, and these errors can compound to degrade the phone's overall performance. Therefore, it's important to test each component to ensure quality and to test the entire phone to ensure proper integration. Traditionally, semiconductor components are tested once they are packaged. However, because of the cost of new wafer development and processes, it is becoming more important to also catch any issues at the wafer level, prior to packaging.

Common Tests for RF Front-End Devices

The tests described here have proven effective at catching issues with semiconductor devices. For characterization test, they can also provide insight into how the chip functions. The following sections discuss which tests are appropriate for characterization, production, or both. Some tests apply to packaged chips as well as to wafer-level testing.

The tests can be broken down into five categories: RF power measurements, spectral measurements, network analysis, modulation accuracy measurements, and DC measurements.

RF Power Measurements

Tx power, or transmit power, is probably the most common measurement performed on a device. The device's output power must comply with its design specifications. You can perform this measurement with a variety of equipment, including a power meter, vector signal analyzer (VSA), or vector network analyzer (VNA).

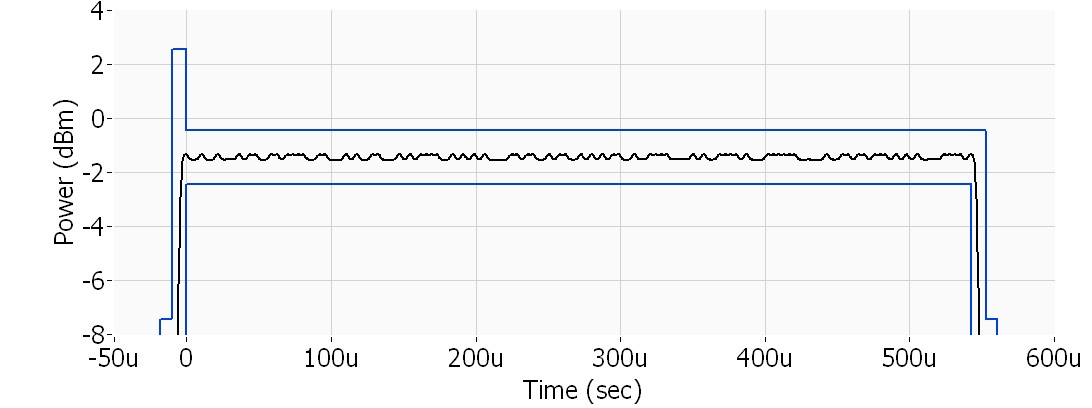

Power versus time (PVT) measures the burst power and the average power of a signal. It is commonly used for bursted RF signals such as GSM or WLAN. A mask is often placed around the signal to verify that it complies with the test limits.

Figure 3: A PVT measurement is commonly used for bursted signals.
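A PVT mask test can be sketched in Python as follows, assuming complex (or real) baseband samples of one burst, a known flat "useful" region whose average power serves as the 0 dB reference, and mask segments given as sample ranges with lower and upper limits in dB. The burst shape and mask values in the usage example are hypothetical, not taken from any standard.

```python
import math

def check_pvt(iq, useful, mask):
    """Check one burst against a power-versus-time mask.

    iq:     complex (or real) baseband samples of the burst
    useful: (start, stop) sample indices of the flat, useful part; its
            average power is the 0 dB reference
    mask:   (start, stop, lower_db, upper_db) segments relative to that
            reference; use -math.inf for "no lower limit"
    Returns (reference power in dB, list of sample indices outside the mask).
    """
    start, stop = useful
    ref = sum(abs(s) ** 2 for s in iq[start:stop]) / (stop - start)
    ref_db = 10 * math.log10(ref)
    # Instantaneous power in dB; the floor avoids log10(0) for zero samples.
    pvt_db = [10 * math.log10(max(abs(s) ** 2, 1e-20)) for s in iq]
    violations = [n for seg_start, seg_stop, lo, hi in mask
                  for n in range(seg_start, seg_stop)
                  if not lo <= pvt_db[n] - ref_db <= hi]
    return ref_db, violations

# Hypothetical burst: off, linear ramp up, flat top, ramp down, off.
burst = ([1e-3] * 10 + [k / 10 for k in range(1, 11)] + [1.0] * 60
         + [k / 10 for k in range(10, 0, -1)] + [1e-3] * 10)
mask = [(0, 10, -math.inf, -30), (10, 20, -math.inf, 1), (20, 80, -1, 1),
        (80, 90, -math.inf, 1), (90, 100, -math.inf, -30)]
ref_db, bad = check_pvt(burst, useful=(20, 80), mask=mask)
print(f"reference {ref_db:.2f} dB, {len(bad)} samples outside the mask")
```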

Gain is an important measurement for PAs. In dB, Gain = Pout – Pin, where Pin is the input power to the amplifier and Pout is the resulting output power after amplification. If the input power is known, typically through good calibration techniques, you can use it as your Pin reference, and a highly accurate instrument such as a power meter measures Pout. Some measurement products, such as VSAs, can also measure gain if the measurement is relative.

Return loss provides insight into how much of the original signal is reflected by the RF front-end device. This is especially important when measuring voltage standing wave ratio (VSWR) to achieve the best impedance matching. Because it is a ratio measurement, it is typically made with a VNA. In some cases, you can use a vector signal generator (VSG), VSA, and coupler, although you must take care when performing system calibration of this hardware.

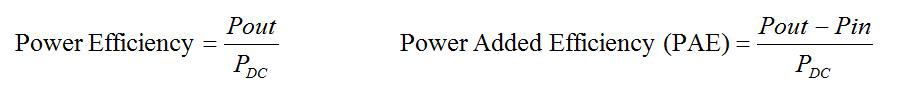

Power efficiency is one of the more important PA measurements because it determines how well a PA uses the mobile device's battery power. The higher the efficiency, the longer the battery lasts, which is what device manufacturers want. You can calculate power efficiency in a couple of different ways, depending on whether the device is a high-gain amplifier.

where Pout is the measured power from the amplifier, PDC is the power supplied by the battery source or battery simulator, and Pin is the input power, which is typically a controlled tone or continuous wave (CW).
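The efficiency formulas themselves are not reproduced in the text. A common pair of definitions, assumed here, is drain (or collector) efficiency, η = Pout / PDC, which suits high-gain amplifiers where Pin is negligible, and power-added efficiency, PAE = (Pout − Pin) / PDC. This Python sketch takes PDC as supply voltage times supply current; the operating point in the example is hypothetical.

```python
def dbm_to_w(p_dbm: float) -> float:
    """Convert dBm to watts."""
    return 1e-3 * 10 ** (p_dbm / 10)

def drain_efficiency(pout_dbm: float, vdc: float, idc: float) -> float:
    """Pout / PDC as a fraction, with PDC = supply voltage * supply current."""
    return dbm_to_w(pout_dbm) / (vdc * idc)

def power_added_efficiency(pout_dbm: float, pin_dbm: float,
                           vdc: float, idc: float) -> float:
    """(Pout - Pin) / PDC as a fraction; approaches drain efficiency at high gain."""
    return (dbm_to_w(pout_dbm) - dbm_to_w(pin_dbm)) / (vdc * idc)

# Hypothetical operating point: 30 dBm out, 3 dBm in, 3.5 V at 0.55 A
print(drain_efficiency(30, 3.5, 0.55))           # ≈ 0.52
print(power_added_efficiency(30, 3, 3.5, 0.55))  # ≈ 0.52 (27 dB of gain)
```

At 27 dB of gain the two figures nearly coincide, which is why the simpler ratio is often used for high-gain amplifiers.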

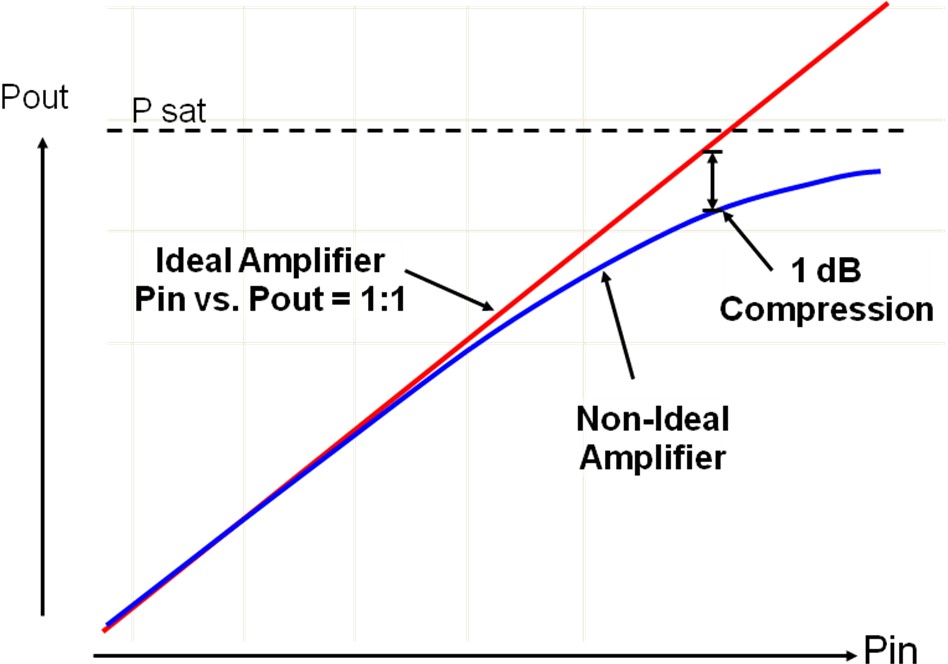

1 dB compression is also an important measurement. Since PAs eventually become nonlinear as they are driven to their maximum output level, they start to deviate from their ideal linear output. This deviation is best illustrated in Figure 4.

Figure 4: The 1 dB compression point is where the ideal linear amplifier and the real-world amplifier deviate by 1 dB.

As you increase the input power (Pin), the PA begins to saturate and levels off at a maximum output power called Psat. The point where the ideal linear amplifier and the real-world amplifier deviate by 1 dB is called the 1 dB compression point (P1dB); the signal is compressed by the amplifier's natural saturation. In PA design, it is desirable to operate as close as possible to this 1 dB point because the PA is most power efficient near this level.
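Extracting the 1 dB compression point from a power sweep can be sketched as follows. The Python sketch assumes Pin/Pout pairs in dBm sorted by increasing Pin, estimates small-signal gain from the lowest-power points, and linearly interpolates where compression reaches 1 dB. The PA model used to generate data (a Rapp-style AM/AM curve with made-up gain and Psat) is hypothetical and only stands in for real measurements.

```python
import math

def find_p1db(pin_dbm, pout_dbm, n_linear=3):
    """Return (input P1dB, output P1dB) in dBm from a swept measurement.

    Small-signal gain is the mean gain of the first `n_linear` points;
    compression is how far Pout falls below the ideal line Pin + gain.
    The 1 dB crossing is found by linear interpolation between points.
    """
    gain = sum(o - i for i, o in zip(pin_dbm[:n_linear], pout_dbm[:n_linear])) / n_linear
    comp = [(i + gain) - o for i, o in zip(pin_dbm, pout_dbm)]
    for k in range(1, len(comp)):
        if comp[k - 1] < 1.0 <= comp[k]:
            frac = (1.0 - comp[k - 1]) / (comp[k] - comp[k - 1])
            pin_1db = pin_dbm[k - 1] + frac * (pin_dbm[k] - pin_dbm[k - 1])
            return pin_1db, pin_1db + gain - 1.0
    raise ValueError("sweep did not reach 1 dB compression")

def rapp_pa_dbm(pin_dbm, gain_db=28.0, psat_dbm=33.0, p=2.0):
    """Hypothetical PA model (Rapp AM/AM curve), used only to make test data."""
    ideal_mw = 10 ** ((pin_dbm + gain_db) / 10)   # ideal linear output, mW
    sat_mw = 10 ** (psat_dbm / 10)
    x = math.sqrt(ideal_mw / sat_mw)              # normalized amplitude
    out_mw = ideal_mw / (1 + x ** (2 * p)) ** (1 / p)
    return 10 * math.log10(out_mw)

sweep = [-20 + 0.5 * k for k in range(61)]        # -20 to +10 dBm input
measured = [rapp_pa_dbm(p) for p in sweep]
p1db_in, p1db_out = find_p1db(sweep, measured)
print(f"Input P1dB ≈ {p1db_in:.2f} dBm, output P1dB ≈ {p1db_out:.2f} dBm")
```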

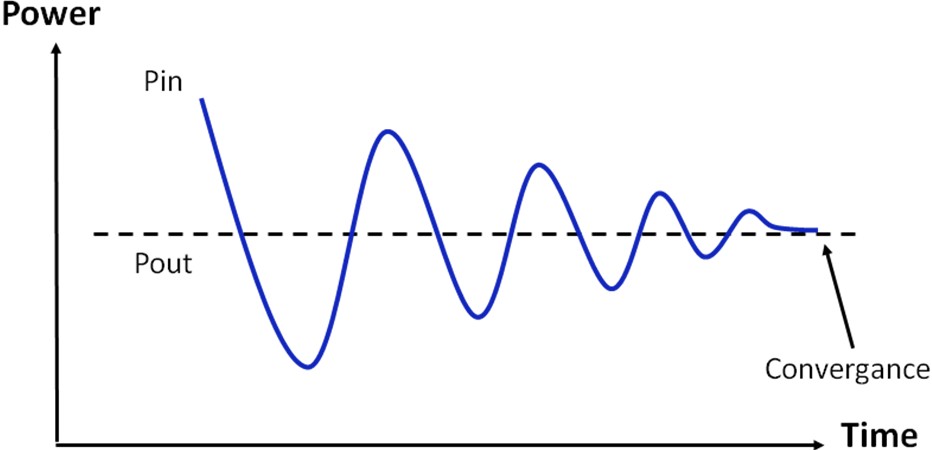

Servoing is a concept unique to PA testing. Because the calibrated output power must be known, a power control technique is used to set the final gain. A control loop measures the output power and adjusts the generator power until the desired output power is achieved. In simplest terms, it uses a proportional control loop that swings back and forth in power level until the output power converges on the desired power.

Figure 5: In PA servoing, a control loop swings the power levels back and forth until the output power level and desired power converge.
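The servo loop described above can be sketched in Python as a proportional controller working in dB. Here `measure_pout` is a hypothetical stand-in for a power-meter or VSA reading, and `hypothetical_pa` is a made-up device model; the loop gain `k`, tolerance, and iteration limit are illustrative assumptions.

```python
def servo_output_power(measure_pout, target_dbm, pin_start_dbm,
                       k=0.8, tol_db=0.05, max_iter=20):
    """Adjust generator power until the measured PA output hits the target.

    measure_pout: callable returning measured Pout (dBm) for a generator
                  level Pin (dBm)
    k:            proportional gain (dB of Pin change per dB of error)
    Returns (final Pin, final Pout, number of measurements used).
    """
    pin = pin_start_dbm
    for it in range(1, max_iter + 1):
        pout = measure_pout(pin)
        error = target_dbm - pout
        if abs(error) <= tol_db:
            return pin, pout, it
        pin += k * error          # proportional correction step
    raise RuntimeError("servo did not converge")

def hypothetical_pa(pin_dbm):
    """Made-up DUT: 27.3 dB gain with mild compression above 0 dBm input."""
    return pin_dbm + 27.3 - 0.05 * max(0.0, pin_dbm) ** 2

pin, pout, n = servo_output_power(hypothetical_pa, target_dbm=28.0, pin_start_dbm=-5.0)
print(f"Pin = {pin:.2f} dBm, Pout = {pout:.2f} dBm after {n} reads")
```

With k = 0.8 the level approaches the target from one side. A larger k makes the level overshoot and swing back and forth before settling, as in Figure 5, and too large a k makes the loop diverge.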

Third-order intercept (TOI) and third-order intermodulation distortion (IM3) are two closely related specifications used to quantify the linearity of an RF system. Both indicate the level of third-order distortion products relative to the power of the fundamental signal. Third-order distortion products can interfere with the original signal and therefore degrade its signal-to-noise ratio. This in turn makes it more difficult for higher order or more complex modulation schemes to work properly in a system.
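TOI is usually derived from a two-tone test rather than measured directly. This Python sketch uses the standard relationship for the output intercept: fundamentals grow 1 dB per dB of input and IM3 products 3 dB per dB, so OIP3 = Ptone + (Ptone − PIM3)/2. The tone frequencies and power levels in the example are hypothetical.

```python
def im3_frequencies(f1_hz, f2_hz):
    """Third-order intermodulation products of a two-tone test."""
    return 2 * f1_hz - f2_hz, 2 * f2_hz - f1_hz

def output_toi_dbm(p_tone_dbm, p_im3_dbm):
    """Output third-order intercept (OIP3) from one tone and its IM3 product.

    Fundamentals rise 1 dB per dB of input and IM3 products 3 dB per dB,
    so the extrapolated lines meet (p_tone - p_im3) / 2 dB above the tone.
    Input-referred TOI (IIP3) is OIP3 minus the amplifier gain.
    """
    return p_tone_dbm + (p_tone_dbm - p_im3_dbm) / 2

# Hypothetical two-tone result: 1 MHz spacing, 10 dBm per tone, IM3 at -30 dBm
print(im3_frequencies(1_950_000_000, 1_951_000_000))  # (1949000000, 1952000000)
print(output_toi_dbm(10.0, -30.0))                    # 30.0
```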

Harmonics are also important to measure because they add unwanted output products that can either interfere with other RF signals or cause a compliance issue with the Federal Communications Commission or another government communications body. Depending on the standard, you may measure harmonics as far out as the seventh order. For example, you may measure the harmonics of the 1,800 MHz DCS band out to the seventh order, which is approximately 12.6 GHz.

Spurs (spurious signals) are also commonly measured during design. Because they degrade the signal-to-noise ratio (SNR), design modifications are made to eliminate them from the measured spectrum.

Spectral Measurements

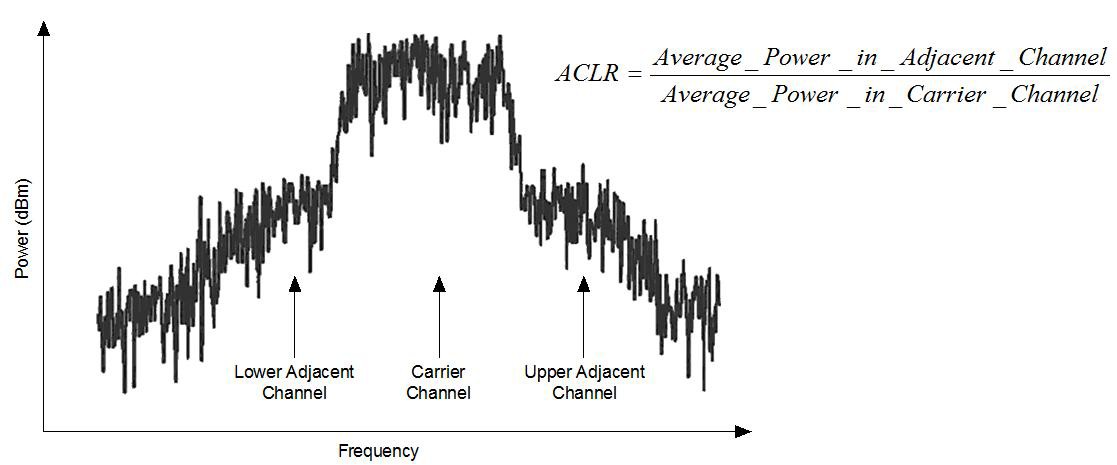

Adjacent channel power measures how power is distributed between a particular channel and its two adjacent channels. You can perform this measurement by calculating the total power in the channel and the total power in the upper and lower adjacent channels. Different technology standards define different criteria for adjacent channel power measurements. For example, the code division multiple access (CDMA) wireless standard requires transmissions to fit within a 4.096 MHz bandwidth. Moreover, adjacent channel power, measured at 5 MHz offsets, must be at least 70 dB below the in-channel average power.

Adjacent channel leakage power ratio (ACLR) is the ratio of the power in the carrier channel to the power in an adjacent channel. It is most commonly used for wideband CDMA (WCDMA) measurements; for other standards, it is also commonly known as the adjacent channel power ratio (ACPR). The measurement serves two purposes: it measures adjacent channel interference, which can affect other spectrum outside the carrier of interest, and, more importantly, it provides another way to measure the third-order intermodulation products introduced by the device. Figure 6 illustrates this measurement for a given WCDMA signal.

Figure 6: This WCDMA waveform illustrates ACPR or ACLR.
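ACP and ACLR reduce to integrating spectral power over channel-wide windows. The Python sketch below assumes a power spectral density on uniformly spaced frequency bins (for example, from a VSA) and uses typical WCDMA values, a 3.84 MHz measurement bandwidth at 5 MHz offsets, as illustrative parameters. It integrates with a rectangular window, whereas a standards-compliant WCDMA ACLR measurement applies a root-raised-cosine filter, so treat it as a simplified sketch. The spectrum in the example is synthetic.

```python
import math

def band_power(freqs, psd_w_per_hz, center, bandwidth):
    """Integrate a PSD (W/Hz on uniform bins) over [center - bw/2, center + bw/2)."""
    df = freqs[1] - freqs[0]
    lo, hi = center - bandwidth / 2, center + bandwidth / 2
    return sum(p for f, p in zip(freqs, psd_w_per_hz) if lo <= f < hi) * df

def aclr_db(freqs, psd, carrier, bandwidth, offset):
    """Return (lower, upper) ACLR in dB: carrier power over adjacent power."""
    main = band_power(freqs, psd, carrier, bandwidth)
    lower = band_power(freqs, psd, carrier - offset, bandwidth)
    upper = band_power(freqs, psd, carrier + offset, bandwidth)
    return 10 * math.log10(main / lower), 10 * math.log10(main / upper)

freqs = range(-10_000_000, 10_000_000, 10_000)          # 10 kHz bins, baseband
psd = [1e-9 if -1_920_000 <= f < 1_920_000 else 1e-13   # made-up spectrum
       for f in freqs]
print(aclr_db(freqs, psd, carrier=0, bandwidth=3_840_000, offset=5_000_000))
```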

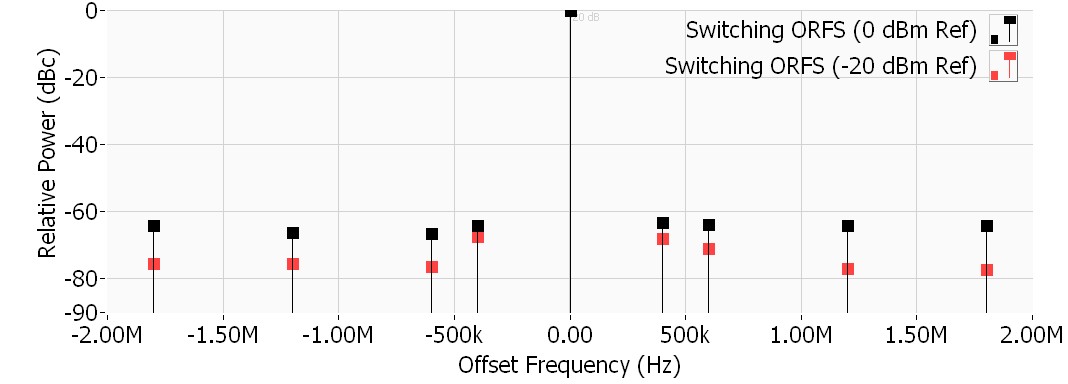

Output RF spectrum (ORFS) is a narrow-band measurement that provides information about the distribution of the mobile station transmitter’s out-of-channel spectral energy due to modulation and switching as defined in the 3GPP specification. This measurement is commonly used for GSM, GPRS, and EGPRS where GMSK modulation (phase only) is used for transmitting and receiving data.

The ORFS measurement calculates the power at various frequencies offset from the carrier frequency to determine how much the burst leaks into other frequency bands. The power at each offset is referenced back to the carrier power and is reported in terms of dBc.

There are two types of ORFS measurements. The modulation ORFS measurement examines the frequency content of the center of a burst, while the switching ORFS measurement examines the frequency content of the ramp-up and ramp-down portions of a burst. In general, the switching ORFS reports higher values at a given frequency than the modulation ORFS. The 3GPP specification defines the following frequency offsets for modulation and switching:

- Modulation: +/-200 kHz, +/-250 kHz, +/-400 kHz, +/-600 kHz, +/-1.2 MHz, +/-1.8 MHz

- Switching: +/-400 kHz, +/-600 kHz, +/-1.2 MHz, +/-1.8 MHz

Figure 7: This is the ORFS for a GSM signal.

When introducing amplitude and phase modulation such as QPSK or 16QAM, it is common to use an error vector magnitude (EVM) measurement instead.

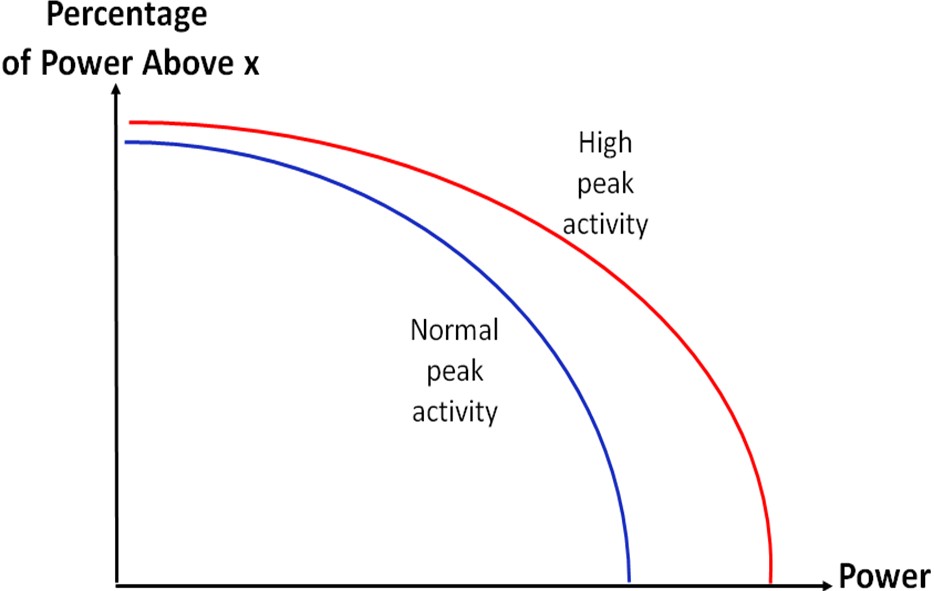

Complementary cumulative distribution function (CCDF) is a statistical method you can use to analyze the power characteristics of a signal. It shows how often, over a defined measurement period, the signal's instantaneous power exceeds its average power by a given amount. A CDMA or WCDMA signal contains infrequent higher power peaks. These peaks are necessary for proper data transmission, although, if they last too long, they can indicate compression in a PA device. The graph in Figure 8 illustrates this by comparing a signal with more peak transmission to one with normal peak transmission over a given period of time.

Figure 8: Complementary Cumulative Distribution Function
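A CCDF curve can be computed directly from captured I/Q samples: normalize each sample's instantaneous power to the average power and count how often it exceeds each threshold. The Python sketch below is a minimal version of that calculation; the test signal is made up so the result is easy to check by hand.

```python
import math

def ccdf(iq, thresholds_db):
    """Fraction of samples whose instantaneous power exceeds the average
    power by more than each threshold (in dB)."""
    powers = [abs(s) ** 2 for s in iq]
    avg = sum(powers) / len(powers)
    # Zero-power samples can never exceed a threshold, so they are skipped
    # here but still counted in the denominator below.
    rel_db = [10 * math.log10(p / avg) for p in powers if p > 0]
    n = len(powers)
    return [sum(1 for r in rel_db if r > x) / n for x in thresholds_db]

# Made-up signal: mostly 3 dB below average, with rare peaks about 7.4 dB above
signal = [math.sqrt(0.5)] * 90 + [math.sqrt(5.5)] * 10
print(ccdf(signal, [0, 3, 6, 9]))   # 10% exceed 0, 3, and 6 dB; none exceed 9 dB
```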

Network Analysis

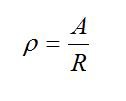

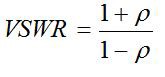

Voltage standing wave ratio (VSWR) is the ratio of maximum to minimum amplitude in the resulting interference wave, as shown in the following formula:

A = Reflected Wave and R = Incident Wave

Figure 9: Definition of ρ, the Reflection Coefficient

Any impedance mismatches along a transmission line cause partial reflection of the propagating signals. The impedance difference determines the magnitude of the reflection. The length of a mismatched section determines the lowest signal frequencies that reflect from the section. VSWR is a measure of that signal reflection.

Return loss is also a reflection measurement like VSWR, but is usually expressed in dB. Using the same reflection coefficient as above, you can express it as the following:

Return loss (dB) = –20 log10(|ρ|)

You can measure either forward return loss, which is most common for RF front-end devices like PAs, or reverse return loss, which you can use for RF transceivers.
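The standard relationships behind these definitions can be sketched in Python as follows: ρ = (ZL − Z0)/(ZL + Z0) for a load ZL on a line of characteristic impedance Z0, VSWR = (1 + |ρ|)/(1 − |ρ|), and return loss = −20 log10|ρ|. The 50 Ω reference and the example load are assumptions for illustration.

```python
import math

def reflection_coefficient(z_load: complex, z0: float = 50.0) -> complex:
    """rho = (ZL - Z0) / (ZL + Z0), the reflected-to-incident wave ratio."""
    return (z_load - z0) / (z_load + z0)

def vswr(rho: complex) -> float:
    """VSWR = (1 + |rho|) / (1 - |rho|); infinite for total reflection."""
    mag = abs(rho)
    return math.inf if mag >= 1 else (1 + mag) / (1 - mag)

def return_loss_db(rho: complex) -> float:
    """Return loss = -20 log10 |rho| (positive for a passive load)."""
    return math.inf if abs(rho) == 0 else -20 * math.log10(abs(rho))

rho = reflection_coefficient(100)   # 100 ohm load on a 50 ohm line
print(abs(rho), vswr(rho), return_loss_db(rho))   # ≈ 0.333, 2.0, 9.54 dB
```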

Modulation Accuracy Measurements

Phase and frequency error (PFER) is a common measurement for GSM, GPRS, and EGPRS signals. Because the GMSK-modulated signal carries its information entirely in phase, with no amplitude shifting, a measurement method is needed to determine the quality of that phase and therefore the modulation quality. Both root-mean-square (RMS) and peak phase error are normally measured. The RMS phase error gives the RMS average of the phase error across an entire burst, while the peak phase error gives the worst measured phase error in the burst.
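A PFER-style calculation can be sketched in Python as below. It assumes the measured phase trajectory and the ideal trajectory (for example, obtained by re-modulating the demodulated bits) are already available and time-aligned at a sample rate fs. A least-squares line through the phase difference gives the frequency error (slope) and a constant phase offset (intercept); the residual is the phase error, reported as RMS and peak values. This is a simplified sketch, not the exact procedure of the 3GPP test specification.

```python
import math

def pfer(measured_rad, ideal_rad, fs):
    """Estimate frequency error and RMS/peak phase error for one burst.

    measured_rad, ideal_rad: phase trajectories (radians) sampled at fs (Hz)
    Returns (frequency error in Hz, RMS phase error in degrees,
             peak phase error in degrees).
    """
    # Phase difference, unwrapped so a steady frequency error stays continuous.
    diff = [m - i for m, i in zip(measured_rad, ideal_rad)]
    err = [math.remainder(diff[0], 2 * math.pi)]
    for d in diff[1:]:
        err.append(err[-1] + math.remainder(d - err[-1], 2 * math.pi))
    # Least-squares line: slope is frequency error (rad/s), intercept is a
    # constant phase offset; both are removed before computing phase error.
    n = len(err)
    t = [k / fs for k in range(n)]
    t_mean, e_mean = sum(t) / n, sum(err) / n
    slope = (sum((tk - t_mean) * (ek - e_mean) for tk, ek in zip(t, err))
             / sum((tk - t_mean) ** 2 for tk in t))
    intercept = e_mean - slope * t_mean
    resid = [math.degrees(ek - (intercept + slope * tk)) for tk, ek in zip(t, err)]
    rms = math.sqrt(sum(r * r for r in resid) / n)
    peak = max(abs(r) for r in resid)
    return slope / (2 * math.pi), rms, peak

# Hypothetical burst: 100 Hz frequency error plus alternating +/-1 degree jitter
fs = 1e5
ideal = [0.0] * 100
measured = [2 * math.pi * 100 * k / fs + math.radians(1 if k % 2 == 0 else -1)
            for k in range(100)]
print(pfer(measured, ideal, fs))   # frequency error near 100 Hz, phase errors near 1 degree
```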

Error vector magnitude (EVM) is a measurement of demodulator performance in the presence of impairments. The error vector for a received symbol is defined in the I/Q plane as the vector between the received symbol and the ideal symbol location. EVM is the ratio of the magnitude of the error vector to the magnitude of the expected constellation point.

Modulation error ratio (MER) is a measure of the signal-to-noise ratio (SNR) in a digitally modulated signal.
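EVM and MER can be computed from the same error vectors. This Python sketch assumes received symbols already aligned to their ideal constellation points and normalizes by the RMS power of the ideal symbols; some standards normalize to the peak or to a per-symbol reference instead, so the normalization here is an assumption. With this normalization, MER (dB) = −20 log10(EVM), with EVM expressed as a fraction.

```python
import math

def _error_and_reference_power(received, ideal):
    """Summed error-vector power and summed ideal-symbol power."""
    err = sum(abs(r - i) ** 2 for r, i in zip(received, ideal))
    ref = sum(abs(i) ** 2 for i in ideal)
    return err, ref

def evm_percent(received, ideal):
    """RMS EVM in percent: RMS error-vector magnitude / RMS ideal magnitude."""
    err, ref = _error_and_reference_power(received, ideal)
    return 100 * math.sqrt(err / ref)

def mer_db(received, ideal):
    """MER in dB: ideal-symbol power over error-vector power."""
    err, ref = _error_and_reference_power(received, ideal)
    return 10 * math.log10(ref / err)

# Hypothetical QPSK symbols with a 10% gain error on every symbol
ideal = [1 + 1j, -1 + 1j, -1 - 1j, 1 - 1j]
received = [1.1 * s for s in ideal]
print(evm_percent(received, ideal), mer_db(received, ideal))  # ≈ 10 %, ≈ 20 dB
```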

DC Measurements

You can measure current on different parts of the RF front end: at the supply voltage that powers the device, and on the accessory channels such as the digital lines, Vramp, or the mode and frequency control lines.

Leakage current measurements are often performed on semiconductor devices such as RF front ends. A leakage current measurement helps determine the isolation between pins on a semiconductor device. By using a source measure unit (SMU), you can measure the leakage current for any given pin.

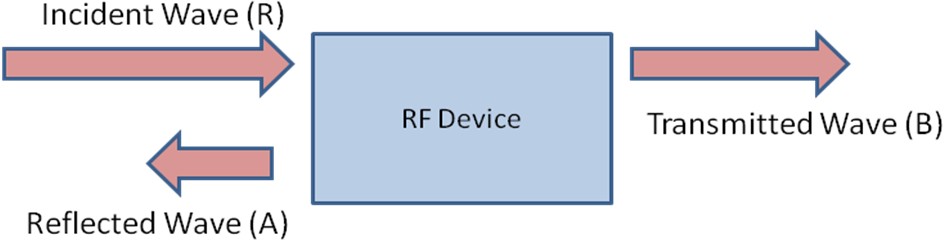

The Vdetect measurement is a voltage measurement of the PA's output control line. Vdetect outputs a control signal for the device's battery to indicate how much power is needed for Vbatt on the PA.

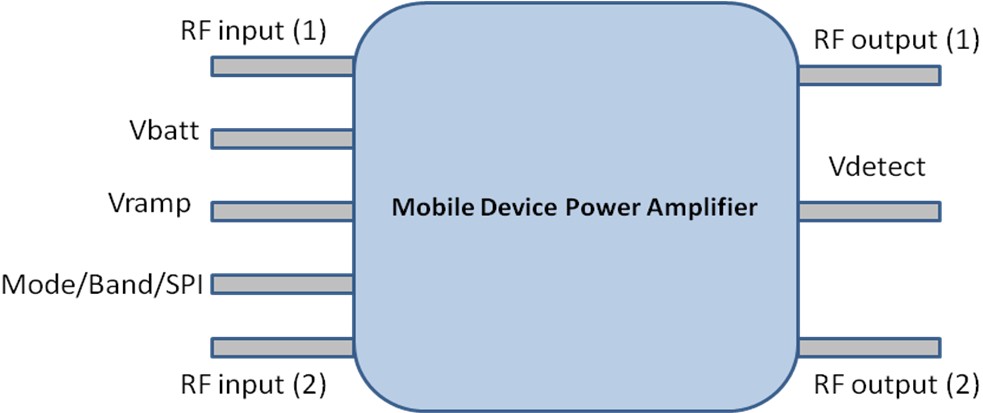

Typical Setup and Device Control for RF Front-End Test

Figure 10: This is an illustration of what a generic mobile device PA looks like.

A PA has at least two different inputs, RF input (1) and (2), because of the large differences between mobile device bands. For example, GSM can operate in the 800 MHz range as well as in the 1.8 GHz DCS range, which requires separate amplification to account for the frequency differences. In addition, multimode PAs for next-generation mobile devices often combine GSM with another standard such as WCDMA or LTE, so there may be four or more inputs on the PA. In this case, the inputs are divided into high band and low band for the different frequencies, with separate inputs for the different standards to optimize amplification efficiency.

Vbatt is the power supplied to the PA from either the battery or a battery simulator instrument.

Vramp is a control input line to help control the gain of the PA. It is especially important for the bursted GSM/GPRS/EDGE/EDGE+ signals where the signal profile is important.

Depending on its complexity, the PA may have separate mode and band control lines for switching its power control (Mode/Band/SPI). For example, mode control can switch the PA from GSM mode to EDGE mode, while band control selects among the frequency bands the PA can operate in. Next-generation PAs are moving toward the serial peripheral interface (SPI) and eventually MIPI (a newer high-speed serial interface). SPI and MIPI use a high-speed digital control interface that you can integrate through the power management IC (PMIC), CPU, and other chips in the mobile phone.

Similar to the inputs, there are at least two outputs, RF output (1) and (2), on the PA today. These are for different frequency bands. The trend for newer PAs is to have multiple standards, modes, and frequencies.

Vdetect outputs a control signal for the battery of the device to indicate how much power is needed for Vbatt on the PA.

Common Test Equipment for RF Front-End Testing

When interfacing with the RF front-end device for characterization and production test, you typically use several pieces of equipment. The following sections describe the most common instrumentation and how each instrument interfaces with the RF front-end device.

Figure 11: This collection of instrumentation is the traditional test setup for an RF front-end device test.

Spectrum analyzers are prevalent in any RF device development lab or facility. They measure the power of unknown signals well and are easy to configure for capturing RF signals. In RF front-end test, they are commonly used for higher frequency RF signal capture such as spur and harmonics testing. To measure the seventh harmonic of a 5 GHz WLAN device, for example, you need an analyzer that can measure out to 40 GHz. Because the analyzer doesn't have native bandpass filters, it is common to add an external filter at its input to reject the primary carrier, so the analyzer has enough dynamic range to measure the harmonics or spurs. Often, different banks of filters are used for the cellular bands or for wireless network bands such as WLAN, Bluetooth, and ZigBee.

A vector signal analyzer (VSA) is one of the most important pieces of test equipment for RF front-end device test. Like a spectrum analyzer, it makes power measurements, but it can also measure phase information, which is important for modulation accuracy measurements. Besides this phase and magnitude capture capability, it digitizes the RF signal very quickly (after downconversion), which enables dynamic capture of signals. This is preferred for spread spectrum technologies such as WCDMA or WLAN, where a 30 MHz bandwidth with continuous phase information may be required. The VSA interfaces to RF output (1) and output (2) of the PA (refer to Figure 10).

An RF function generator, also known as a continuous wave (CW) generator, provides an accurate RF signal to input into the RF front-end device. These generators are commonly used for system calibration, combined for multitone generation in IMD and IP3 tests, or used as an adjacent channel interferer.

A vector signal generator (VSG) is the most common type of generator in a lab or facility doing RF front-end device development. It provides not only an RF output signal with controlled power and frequency but also a phase-controlled output signal. This is typically done through either a superheterodyne architecture or an I/Q modulator architecture. You can also use the VSG for system calibration, multitone generation, and adjacent channel interference. More importantly, however, it can generate modulated signals into the RF front-end device, which is critical for testing the modulation accuracy of the signal after it passes through the device. The VSG interfaces to RF input (1) and input (2) of the PA device (refer to Figure 10).
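Multitone generation with a VSG amounts to loading a suitable I/Q waveform. This Python sketch builds a complex baseband two-tone signal with tones at ±spacing/2, which a VSG would place symmetrically around its carrier for an IMD or IP3 test. The tone spacing, sample rate, and length are illustrative; choosing a length that holds a whole number of tone periods avoids a phase discontinuity when the waveform loops.

```python
import cmath
import math

def two_tone_iq(spacing_hz, fs, n_samples, amplitude=1.0):
    """Complex baseband samples with two equal tones at +/- spacing_hz / 2."""
    w = 2 * math.pi * (spacing_hz / 2) / fs   # tone frequency in rad/sample
    return [amplitude * (cmath.exp(1j * w * n) + cmath.exp(-1j * w * n)) / 2
            for n in range(n_samples)]

# Hypothetical: 1 MHz spacing at 100 MS/s; 1000 samples = 5 tone periods
iq = two_tone_iq(1e6, 100e6, 1000)
powers = [abs(s) ** 2 for s in iq]
papr_db = 10 * math.log10(max(powers) / (sum(powers) / len(powers)))
print(f"PAPR = {papr_db:.2f} dB")   # ≈ 3.01 dB for two equal tones
```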

The vector network analyzer (VNA) is not as common as other instrumentation in the RF front-end device lab; however, it has important features for some measurements. It is mostly used for reflection and transmission measurements such as return loss, insertion loss, and VSWR. Its extremely good relative accuracy is important for these ratio-based measurements. External couplers are sometimes used with a CW generator and spectrum analyzer instead, but they don't provide the same accuracy as a VNA.

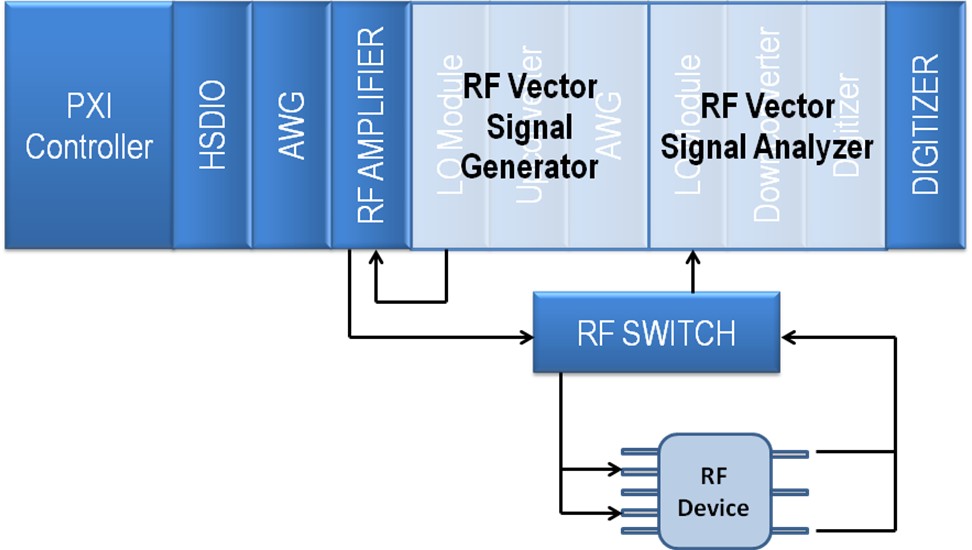

An RF switch is often present, especially when adding more RF channels to the device without the cost of more generators or analyzers. Because of the strict specifications of the RF signals, electromechanical switches are most common in RF front-end device test. As semiconductor devices advance, solid-state switches may replace them, increasing switch life span and switching speed.

A high-speed digital analyzer/generator (HSDIO) controls the RF front-end device, changing modes (standards such as CDMA or LTE), frequency bands, and other device settings. As mobile devices become more sophisticated, standards such as MIPI are being adopted to provide a common communication protocol between all chips. An HSDIO can provide simple static commands or high-speed serial commands for the MIPI and SPI protocols. This becomes more necessary as digital interfaces transition from traditional parallel interfaces to high-speed serial interfaces. The HSDIO interfaces to the mode/band/SPI port of the PA device (refer to Figure 10).
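To show what a serial command looks like at the pin level, this Python sketch turns a single register write into the sequence of chip-select, clock, and data states that a digital pattern instrument could replay, one state per sample. It assumes SPI mode 0 (active-low chip select, data sampled on the rising clock edge), MSB-first transfer, and a hypothetical 8-bit address followed by 8-bit data; real PA register maps and MIPI RFFE framing differ and are not modeled.

```python
def spi_write_pattern(address, data, addr_bits=8, data_bits=8):
    """Return (CS, SCLK, MOSI) states, one per pattern sample, for an SPI write.

    Assumes SPI mode 0: CS is active low, MOSI changes while SCLK is low,
    and the device samples MOSI on each rising SCLK edge. The address word
    is sent first, then the data word, both MSB first.
    """
    if not (0 <= address < 1 << addr_bits and 0 <= data < 1 << data_bits):
        raise ValueError("address or data does not fit the word size")
    word = (address << data_bits) | data
    pattern = [(1, 0, 0)]                  # idle: CS deasserted
    for k in range(addr_bits + data_bits - 1, -1, -1):
        bit = (word >> k) & 1
        pattern.append((0, 0, bit))        # set up MOSI with SCLK low
        pattern.append((0, 1, bit))        # rising edge: device samples MOSI
    pattern.append((0, 0, 0))              # return SCLK low
    pattern.append((1, 0, 0))              # deassert CS to end the transaction
    return pattern

# Hypothetical write: register 0x1C <- 0x03 (for example, a band-select value)
for cs, sclk, mosi in spi_write_pattern(0x1C, 0x03)[:5]:
    print(cs, sclk, mosi)
```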

An arbitrary waveform generator (AWG) controls the Vramp signal of a PA. Because many RF signals are bursted rather than transmitted continuously, it is important to generate the correct signal profile. The Vramp control line (as seen in Figure 10) is interfaced to the AWG and is responsible for the PA's gain control profile. An AWG allows completely controlled synthesis of an analog waveform, so different custom ramp profiles are easy to achieve with a 100 MS/sec or faster AWG.
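A Vramp profile for one burst can be sketched in Python as a list of AWG samples. The raised-cosine ramp shape, ramp and flat durations, and voltage levels below are hypothetical; smooth ramps are a common choice because abrupt edges spread energy into nearby frequencies, which the switching ORFS measurement penalizes.

```python
import math

def vramp_profile(fs, ramp_s, flat_s, v_low, v_high):
    """Build one burst of Vramp samples for an AWG running at fs samples/s.

    Raised-cosine ramp-up from v_low toward v_high, a flat top of flat_s
    seconds at v_high, then the mirror-image ramp-down.
    """
    n_ramp = round(ramp_s * fs)
    n_flat = round(flat_s * fs)
    if n_ramp < 1:
        raise ValueError("ramp must span at least one sample")
    up = [v_low + (v_high - v_low) * (1 - math.cos(math.pi * k / n_ramp)) / 2
          for k in range(n_ramp)]
    return up + [v_high] * n_flat + up[::-1]

# Hypothetical burst: 10 µs ramps and a 543 µs flat top at 100 MS/s
burst = vramp_profile(100e6, 10e-6, 543e-6, v_low=0.2, v_high=1.6)
print(len(burst))   # number of samples to load into the AWG
```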

A battery simulator supplies the primary power for the RF front end. For a mobile device PA, this current can be 3 A or higher, depending on the standard and frequency of the signal it amplifies. The power supply must also have a fast transient response to ensure correct power profiles for the bursted RF signal. Vbatt, in Figure 10, is typically supplied by the battery simulator, especially for GSM or similar burst-sensitive signals.

A source measure unit (SMU) is a specialized power supply that is common in RF front-end device test. It differs from a standard power supply in that it provides read-back in the nanoamp or smaller current range. It can also operate in four quadrants to either source or sink power. The SMU can interface to multiple lines on the RF front-end device; in Figure 10, these could be the Vramp, Vdetect, Vbatt, and mode/band/SPI ports, for measuring current and line performance. In production test, the SMU may be combined with the HSDIO into a per-pin parametric measurement unit (PPMU). This device has the same abilities as a typical HSDIO instrument but also has sourcing and measurement capability like an SMU. It is typically not as accurate as a stand-alone SMU, but it can offer much denser channel counts.

A digital multimeter is probably one of the most common instruments in any lab, and it also shows up in RF front-end device labs. Although not as critical as an SMU, it can measure voltage drops across lines or current leakage on many of the same control and monitoring lines, and it can measure current and voltage as accurately as an SMU.

An oscilloscope or digitizer performs time-domain measurements. For RF front-end devices, it is a useful troubleshooting tool, especially because of its high sampling rate. The Vdetect line from Figure 10 is measured with a digitizer because its value changes rapidly.

A power meter is important for the RF front-end device. In the lab, all RF power accuracy traces back to this instrument. It typically has power accuracy 10 times better or more than a spectrum analyzer or VSA. It captures power with a different kind of architecture and, because of this architecture, it typically has a limited power range. However, it is often used as a reference for system calibration so that other instruments can make measurements outside its range or make faster measurements. RF front-end devices must be characterized either directly or indirectly with a power meter to ensure correct power level output.
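Using a power meter as the reference for system calibration can be sketched in Python as a per-frequency offset table. The sketch assumes that, at each calibration frequency, the same CW tone is read by the power meter and by the VSA at the same reference plane; the difference becomes a correction applied to later VSA readings, with linear interpolation between calibration points. The frequencies and readings in the example are hypothetical.

```python
from bisect import bisect_left

def build_cal_table(freqs_hz, meter_dbm, analyzer_dbm):
    """Offsets (dB) to add to analyzer readings: power meter minus analyzer."""
    return sorted((f, m - a) for f, m, a in zip(freqs_hz, meter_dbm, analyzer_dbm))

def offset_at(table, freq_hz):
    """Linearly interpolate the offset; hold the end values outside the table."""
    freqs = [f for f, _ in table]
    if freq_hz <= freqs[0]:
        return table[0][1]
    if freq_hz >= freqs[-1]:
        return table[-1][1]
    k = bisect_left(freqs, freq_hz)         # first calibration point >= freq
    (f0, o0), (f1, o1) = table[k - 1], table[k]
    return o0 + (o1 - o0) * (freq_hz - f0) / (f1 - f0)

# Hypothetical calibration at three frequencies (same CW tone read by both)
table = build_cal_table([800e6, 1.8e9, 2.7e9], [-0.2, -0.1, 0.0], [-0.9, -1.4, -2.1])
vsa_reading_dbm = 27.3                       # hypothetical VSA reading at 1.9 GHz
print(vsa_reading_dbm + offset_at(table, 1.9e9))
```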

A load-pull system is not as common as other instrumentation in the RF front-end device lab, but it is an important piece of equipment for real-world simulation. The impedance of the antenna connected to a PA typically varies with its environment; the phone could be near a metallic structure or propped up against a car seat. This changes the impedance seen between the RF front-end device and the antenna, which in turn can increase the VSWR and cause the RF front end to provide more power to compensate, draining the battery faster. A load pull simulates this condition by adjusting the impedance presented to the RF input or output, so the PA can be designed to be more robust and avoid excessive battery drain.
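One way to emulate a changing antenna environment is to step the tuner through impedances that share one VSWR magnitude but differ in phase. The Python sketch below generates such a set using |Γ| = (VSWR − 1)/(VSWR + 1) and Z = Z0(1 + Γ)/(1 − Γ); the 50 Ω reference and the 3:1 VSWR in the example are illustrative assumptions, not values from a specific test plan.

```python
import cmath
import math

def vswr_circle_impedances(vswr, n_points=8, z0=50.0):
    """Load impedances with constant VSWR at evenly spaced reflection phases.

    |Gamma| = (VSWR - 1) / (VSWR + 1) and Z = Z0 (1 + Gamma) / (1 - Gamma).
    """
    mag = (vswr - 1) / (vswr + 1)
    points = []
    for k in range(n_points):
        gamma = cmath.rect(mag, 2 * math.pi * k / n_points)
        points.append(z0 * (1 + gamma) / (1 - gamma))
    return points

# Hypothetical 3:1 VSWR sweep in 45-degree steps
for z in vswr_circle_impedances(3.0):
    print(f"{z.real:7.2f} {z.imag:+7.2f}j ohm")
```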

An amplifier is often used to provide the higher power levels needed for compression testing of the RF front-end device. Most generators, either CW or VSG, are limited to an output power of no more than +10 dBm. To simulate the higher input power to an RF front-end device, this signal must be amplified, often to as high as +18 or +20 dBm. The RF signal from the CW generator or VSG passes through the amplifier, which applies the required gain.

Performing RF Front-End Test With National Instruments PXI Products

Now that you have a better understanding of the different measurements, components, and instrumentation used in RF front-end test, you can examine how this looks when using a PXI-based system.

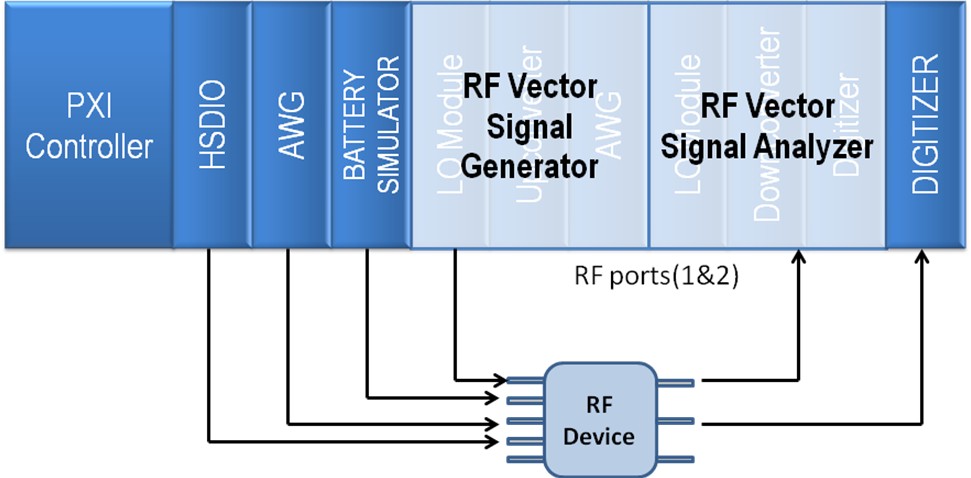

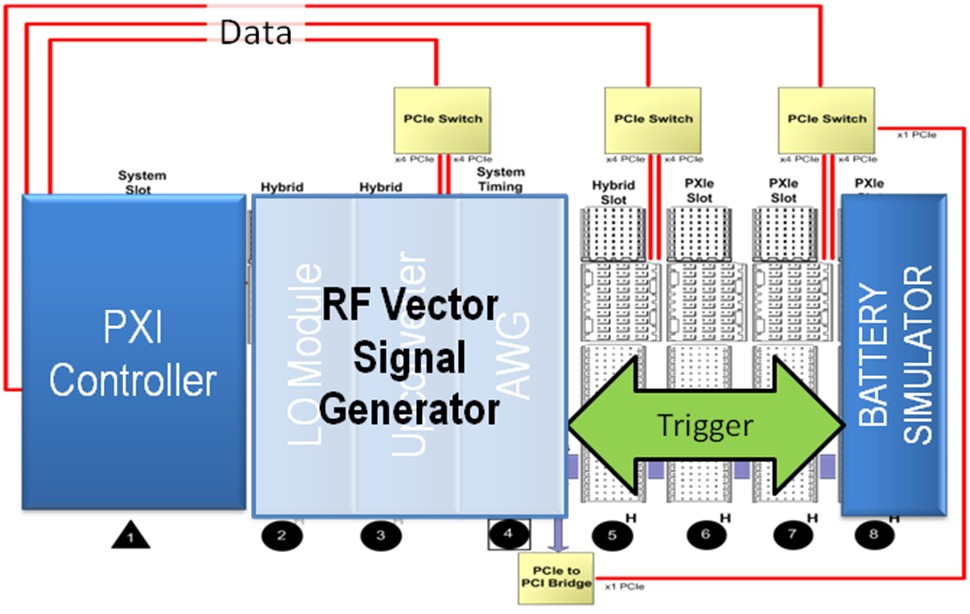

Figure 12: This system is set up for testing an RF front-end device.

The following products compose a basic PXI-based RF front-end device test:

- PXI Controller—Multicore embedded processor for fast parallel or composite measurements

- HSDIO—High-speed digital I/O for any digital control signal; can operate with SPI, MIPI, I2C, custom digital, and static digital control for up to 20 lines

- AWG—Arbitrary waveform generator with 16-bit resolution, onboard scripting, and triggering for precise Vramp control

- Battery Simulator—Specialized power supply made for RF mobile device test; ultrafast transient response for bursted RF signals

- RF Vector Signal Generator—100 MHz wide VSG that supports 2G through 4G cellular signals as well as wireless network signals such as WLAN

- RF Vector Signal Analyzer—50 MHz wide VSA that supports 2G through 4G cellular signals as well as wireless network signals such as WLAN

- Digitizer—High-resolution digitizer for capturing the Vdetect signal or other fast transient signals up to 43 MHz in bandwidth

Figure 13: This diagram shows the equipment used in a typical setup.

- RF Preamplifier—Programmable pre-amp/amp with up to 50 dB gain; can boost output power of NI PXIe-5673E to +21 dBm, which is important for 1 dB compression testing of a PA; beyond +21 dBm, the alternative is an external amplifier

- RF Switch—One of several different RF switches for switching the generator and analyzer channels (With multiple bands supported on most devices, you need more than one path to the RF front-end device. Instead of more generators and analyzers, you can automate a high-quality switch for changing inputs and outputs.)

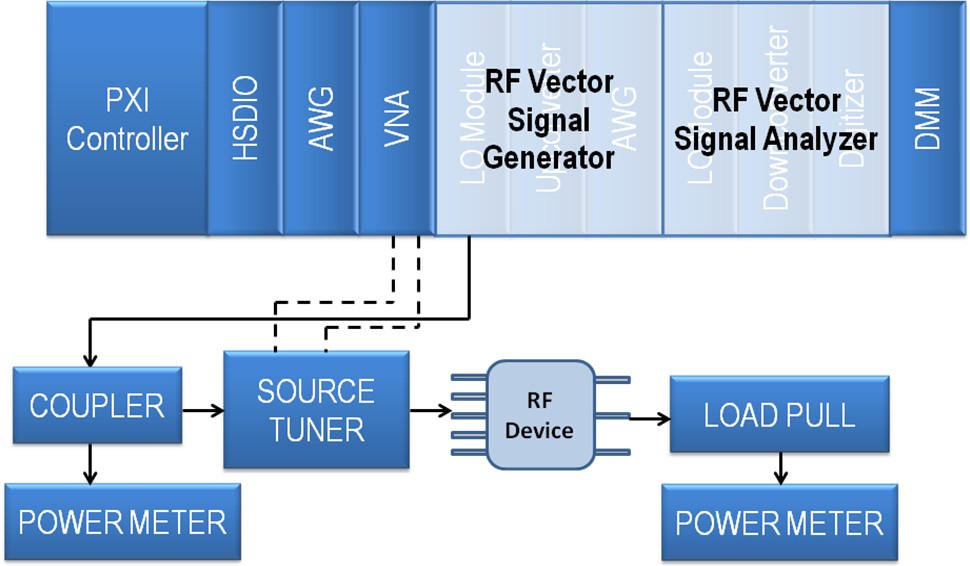

Figure 14: A more specialized test uses a source tuner and load tuner (load pull) to test for nonlinear behavior and input/output impedance variations.

- VNA—Two-port VNA for measuring insertion loss, return loss, and VSWR of the RF front-end device (Figure 14 shows a dotted line representing the connection of the VNA)

- Source Tuner and Load Pull—Separate third-party instruments from companies such as Maury Microwave and Focus Microwaves

- Power Meter—Stand-alone USB-based power sensor and meter in one unit; high power accuracy for impedance measurement and system calibration

Using the PXI Form Factor for Tight Trigger and Timing Integration in RF Front-End Test

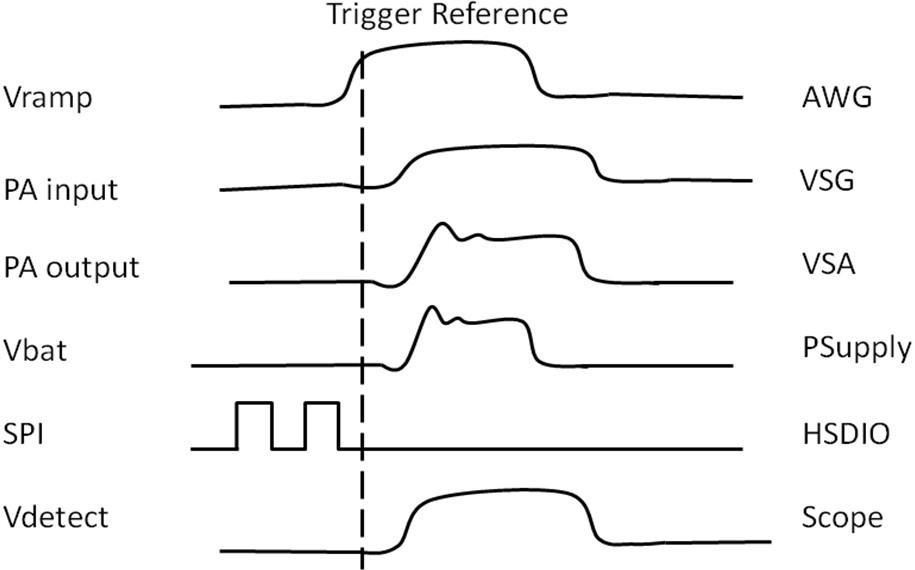

An important aspect of RF front-end test is the timing and trigger integration needed to perform different tests. Triggering plays a prominent role in testing a PA device: without tight trigger control, misaligned device power, Vramp, or RF signal generation and capture produce erroneous results.

For example, look again at the PA device in Figure 10. To test this device, you must control and read several lines simultaneously. Power must be supplied to the PA on the Vbatt pin and, because the setup simulates a battery-powered device, this power is bursted and triggered to occur when the RF signal is sent. You also need to control the gain of the signal through Vramp, which typically requires an AWG to create the correct ramp. You also need to control the mode and frequency, although these do not need precise timing. Finally, the RF input signal must be bursted with a specific timing sequence. Figure 15 illustrates all of this.

Figure 15: Trigger Reference Diagram for PA Test

Because any PXI module can drive triggers on the backplane, all of the devices mentioned above can share the same trigger reference, as can capture-only devices such as the VSA and digitizer (see Figure 16). Alternatively, the VSA and digitizer can generate their own trigger by using the I/Q power trigger feature of the NI PXIe-5663, which starts a capture when the RF signal reaches a specified power level. You can configure pretrigger samples so that the captured record includes the signal ramp-up, profile, and ramp-down.
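A power-level trigger with pretrigger samples can be modeled in software as follows. This is a simplified sketch, not the NI PXIe-5663 implementation. It assumes complex I/Q samples scaled so that |x|² is instantaneous power in watts. The code scans for the first rising crossing of a dBm threshold and returns a record that starts a fixed number of samples before the trigger point.

```python
def power_edge_trigger(iq, threshold_dbm, pretrigger, record_len):
    """Find the first rising crossing of a power threshold and return a record.

    Assumes abs(x)**2 of each complex sample is instantaneous power in watts.
    Returns (trigger_index, record); trigger_index is None if no edge is found.
    """
    threshold_w = 10 ** ((threshold_dbm - 30.0) / 10.0)   # dBm -> watts
    for n in range(1, len(iq)):
        if abs(iq[n - 1]) ** 2 < threshold_w <= abs(iq[n]) ** 2:
            start = max(0, n - pretrigger)    # keep samples from before the edge
            return n, iq[start:start + record_len]
    return None, []


# Hypothetical capture: 100 idle samples, a 10 dBm burst of 50 samples, then idle.
burst = [0j] * 100 + [0.1 + 0j] * 50 + [0j] * 50
idx, record = power_edge_trigger(burst, threshold_dbm=0.0, pretrigger=20, record_len=100)
print(idx, len(record))
```

Because the record begins 20 samples before the edge, the returned data includes the quiet interval and the full rising portion of the burst.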

Figure 16: The PXI backplane demonstrates trigger connectivity between the VSG and battery simulator.

Measurement Time Advantages With PXI

PXI offers significant time savings over traditional instrumentation for RF front-end device test. Test time is reduced in four areas:

- Latest off-the-shelf processors for the fastest signal processing

- FPGA technology for real-time signal processing and measurements

- Fast PCI Express backplane for data movement and low-latency communication with host controller

- Flexible software for optimized system configuration and communication

Latest Off-the-Shelf Processors for the Fastest Signal Processing

Signal processing for PA test benefits from a faster CPU, just as any other processing-intensive application does. RF signals often present a test-time challenge because they require more intensive signal processing than low-frequency signals. Not only is the signal downconverted from a higher frequency, but it also has wider bandwidth. With the emergence of technologies such as LTE and 802.11ac, bandwidths can easily exceed 80 MHz, so ADCs need to sample at 200 MS/s or faster. Once the signal is digitized, it must be processed from its baseband format (assuming digital downconversion is performed on the IF signal) for modulation accuracy or spectral measurements. This processing can include removing the pulse-shaping filter, channel decoding, and demodulation, or formatting the data for spectral measurements. At 200 million samples per second, this processing is a substantial computational load.
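The relationship between signal bandwidth and ADC sample rate is simple arithmetic. The rough sketch below samples a real-valued IF signal at twice its bandwidth and adds a 25 percent guard margin for anti-alias filter roll-off. This guard margin is an assumed rule of thumb, not a figure from this paper. With these assumptions, the sketch reproduces the 200 MS/s figure for an 80 MHz signal and shows how many samples a capture produces.

```python
def min_sample_rate(bandwidth_hz: float, guard_margin: float = 1.25) -> float:
    """Rough minimum ADC rate: 2x the bandwidth, scaled by an assumed guard margin."""
    return 2.0 * bandwidth_hz * guard_margin


def samples_in_capture(sample_rate_hz: float, duration_s: float) -> int:
    """Number of samples the ADC produces over a capture of the given length."""
    return round(sample_rate_hz * duration_s)


rate = min_sample_rate(80e6)                 # 80 MHz bandwidth, e.g. wide 802.11ac
print(f"{rate / 1e6:.0f} MS/s")
print(samples_in_capture(rate, 1.0))         # samples in one second of data
```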

A common way to handle this processing load is with a multicore processor. PXI test systems offer multicore processing through an embedded controller or through an off-the-shelf PC connected by MXI remote control. Multicore processors emerged because single processors generated too much heat as clock rates increased. Without more sophisticated cooling such as water or liquid nitrogen, clock rates had to be limited. PXI takes advantage of multiple cores by running instruments in parallel, using multithreading, and performing composite measurements.

The tables below show test time differences going from a dual-core processor to a quad-core processor. These measurements are for GSM and EDGE signals.

GSM/EDGE

PVT

| Signal Type | Measurement Description | NI PXI-8106 Intel T7400 Core 2 Duo | NI PXIe-8133 Intel i7 Quad Core (6 GB RAM) |
|---|---|---|---|
| GMSK | PVT time (1 AVG) | 9.7 ms | 7 ms |
| GMSK | PVT time (10 AVG) | 56 ms | 52 ms |
| GMSK | Mean PVT (10 AVG) | 0.28 dBm | |
| GMSK | STDEV PVT (10 AVG) | 0.009 dB | |

ORFS (ACP)

| Signal Type | Measurement Description | NI PXI-8106 Intel T7400 Core 2 Duo | NI PXIe-8133 Intel i7 Quad Core (6 GB RAM) |
|---|---|---|---|
| GMSK | ORFS time (1 AVG) | 14 ms[i] | 11 ms |
| GMSK | ORFS time (10 AVG) | 90 ms[2] | 77 ms |
| GMSK | Mean ORFS (10 AVG) | -36 dBc @ 200 kHz; -41 dBc @ 250 kHz; -71 dBc @ 400 kHz; -80 dBc @ 600 kHz; -81 dBc @ 1,200 kHz | |
| GMSK | STDEV ORFS (10 AVG) | 0.3 dB | |

PFER

| Signal Type | Measurement Description | NI PXI-8106 Intel T7400 Core 2 Duo | NI PXIe-8133 Intel i7 Quad Core (6 GB RAM) |
|---|---|---|---|
| GMSK | PFER time (1 AVG) | 11 ms | 9 ms |
| GMSK | PFER time (10 AVG) | 57 ms | 53 ms |
| GMSK | Mean PFER (10 AVG) | RMS phase error 0.195°; peak phase error 0.48° | |
| GMSK | STDEV PFER (10 AVG) | 0.014 dB | |

EVM

| Signal Type | Measurement Description | NI PXI-8106 Intel T7400 Core 2 Duo | NI PXIe-8133 Intel i7 Quad Core (6 GB RAM) |
|---|---|---|---|
| 8PSK | EVM time (1 AVG) | 9.4 ms | 7 ms |
| 8PSK | EVM time (10 AVG) | 53 ms | 53 ms |
| 8PSK | Mean EVM (10 AVG) | RMS EVM 0.55%; peak EVM 1.2% | |
| 8PSK | STDEV EVM (10 AVG) | 0.1 dB | |

NI TestStand is a good way to configure the PA test system for parallel and multithreaded testing. It provides advanced synchronization features such as queues, notifiers, and rendezvous, in addition to an auto-schedule feature that helps optimize parallel testing with the available test equipment. If you test more than one PA at a time, NI TestStand can also help manage switching between hardware.
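NI TestStand provides these synchronization mechanisms natively, so the Python sketch below is only an analogy for the concepts and is not TestStand code. The sketch runs one thread per DUT. It uses `threading.Barrier` as a rendezvous after per-DUT setup, `threading.Lock` to let only one socket at a time use a shared analyzer (loosely similar to what auto scheduling manages for you), and `queue.Queue` to collect results. The DUT names and the placeholder "pass" result are hypothetical.

```python
import queue
import threading


def run_parallel_test(dut_ids):
    """Test several DUTs on parallel threads that share one analyzer (analogy only)."""
    analyzer = threading.Lock()                   # shared instrument, one user at a time
    rendezvous = threading.Barrier(len(dut_ids))  # wait until every socket is set up
    results = queue.Queue()                       # sockets post results here

    def test_socket(dut_id):
        # Per-DUT setup (supply, mode, band) would run here, concurrently.
        rendezvous.wait()
        with analyzer:
            reading = (dut_id, "pass")            # placeholder for a real acquisition
        results.put(reading)

    threads = [threading.Thread(target=test_socket, args=(d,)) for d in dut_ids]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return sorted(results.get() for _ in dut_ids)


print(run_parallel_test(["PA1", "PA2", "PA3"]))
```

The setup work runs in parallel, and only the access to the shared instrument is serialized. This is the same trade-off a test sequencer manages when several sockets compete for one analyzer.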

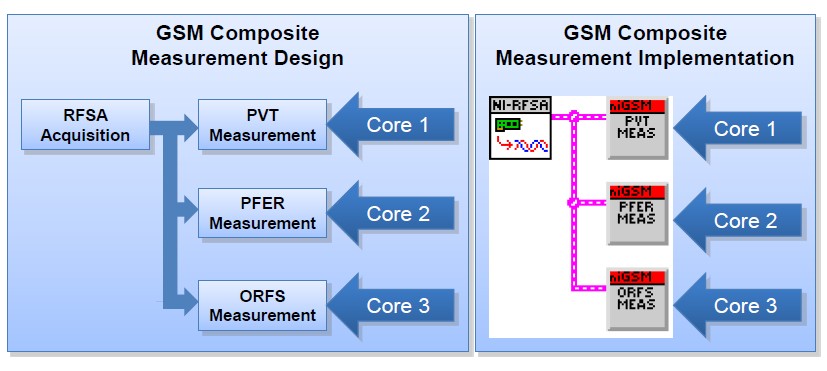

Composite measurements offer another way to take advantage of a multicore processor. Instead of acquiring I/Q data and analyzing it separately for each measurement in a queued fashion, a composite measurement performs a single data acquisition and analyzes the data for all measurements simultaneously. Figure 17 illustrates this approach for a GSM signal: rather than performing separate acquisitions for PVT, PFER, and ORFS, you perform a single acquisition and then process the I/Q data in parallel on the multicore processor.

Figure 17: Composite Measurement for a GSM Signal
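The composite pattern (capture once, analyze many times in parallel) can be sketched with Python's `concurrent.futures`. The three analysis functions are simplified stand-ins and are not the GSM-defined PVT, ORFS, or PFER algorithms. Python threads share one interpreter lock, so CPU-bound pure-Python analysis would need `ProcessPoolExecutor`, or numeric libraries that release the lock, to achieve a true multicore speedup. The structure of one capture feeding many analyses stays the same in either case.

```python
import cmath
import math
from concurrent.futures import ThreadPoolExecutor


def mean_power_db(iq):
    """Stand-in for a PVT-style result: mean power in dB relative to |x| = 1."""
    return 10 * math.log10(sum(abs(x) ** 2 for x in iq) / len(iq))


def peak_power_db(iq):
    """Stand-in for a peak-power check on the same record."""
    return 10 * math.log10(max(abs(x) ** 2 for x in iq))


def rms_phase_error_deg(iq, ref):
    """Stand-in for a PFER-style result: RMS phase error against a known reference."""
    errors = [math.degrees(cmath.phase(x / r)) for x, r in zip(iq, ref)]
    return math.sqrt(sum(e * e for e in errors) / len(errors))


def composite_measurement(iq, ref):
    """One acquisition (iq), several analyses submitted concurrently."""
    with ThreadPoolExecutor() as pool:
        jobs = {
            "mean_power_db": pool.submit(mean_power_db, iq),
            "peak_power_db": pool.submit(peak_power_db, iq),
            "rms_phase_error_deg": pool.submit(rms_phase_error_deg, iq, ref),
        }
        return {name: job.result() for name, job in jobs.items()}


# Hypothetical record: 64 unit-magnitude symbols, each rotated by a 1 degree phase error.
ref = [cmath.exp(2j * math.pi * k / 8) for k in range(64)]
iq = [r * cmath.exp(1j * math.radians(1.0)) for r in ref]
print(composite_measurement(iq, ref))
```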

Composite measurements can offer significant time savings. Go back to the same GSM and EDGE measurements you looked at earlier. Instead of doing individual acquisitions and measurements, perform the same tests with a composite measurement. The table below shows the results.

GSM: ORFS, PVT, and PFER

EDGE: ORFS, PVT, and EVM

| Signal Type | Measurement Description | NI PXI-8106 Intel T7400 Core 2 Duo | NI PXIe-8133 Intel i7 Quad Core (6 GB RAM) |
|---|---|---|---|
| GMSK | Composite time (1 AVG) | 14 ms[2] | 11 ms[2] |
| GMSK | Composite time (10 AVG) | 110 ms[2] | 77 ms[2] |
| 8PSK | Composite time (1 AVG) | 14 ms[2] | 11 ms[2] |
| 8PSK | Composite time (10 AVG) | 106 ms[2] | 74 ms[2] |

For GSM (GMSK modulation) on the quad-core controller, the total test time for individual tests with 10 averages is 52 ms (PVT) + 77 ms (ORFS) + 53 ms (PFER), or 182 ms. By comparison, the composite measurement completes in 77 ms. This reduces test time by about 58 percent, making the composite measurement roughly 2.4 times faster.
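The savings figures are easy to check. The sketch below uses the quad-core, 10-average GMSK figures from the tables. It also shows that the ratio (182 - 77)/77, about 1.36, measures how much longer the individual tests take relative to the composite measurement. This ratio is not a reduction in test time.

```python
def time_savings(individual_ms, composite_ms):
    """Return total individual time, fractional reduction, and speedup factor."""
    total = sum(individual_ms.values())
    reduction = (total - composite_ms) / total      # share of test time saved
    speedup = total / composite_ms                  # how many times faster
    return total, reduction, speedup


# Quad-core (NI PXIe-8133) GMSK results with 10 averages.
total, reduction, speedup = time_savings({"PVT": 52, "ORFS": 77, "PFER": 53}, 77)
print(f"{total} ms -> 77 ms: {reduction:.1%} less time, {speedup:.2f}x faster")
# prints: 182 ms -> 77 ms: 57.7% less time, 2.36x faster
```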

FPGA Technology for Real-Time Signal Processing and Measurements

Field-programmable gate array (FPGA) technology has also helped reduce RF test times and will reduce them further. Today’s FPGAs offer real-time signal processing in an energy-efficient, flexible package, which makes them important for processing data in wireless applications. A good example is onboard signal processing (OSP) technology. The digitizer of the NI 5663 VSA and the AWG of the NI 5673 VSG both include OSP technology. OSP performs IF-to-baseband or baseband-to-IF conversion directly in the FPGA, a task that would otherwise require intensive processing on a host PC.
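At its core, IF-to-baseband conversion is a digital downconverter (DDC). A DDC multiplies the IF samples by a complex oscillator (NCO) to shift the signal to 0 Hz, then low-pass filters and decimates the result. The following pure-Python sketch shows that data flow only. It is not the NI OSP implementation. Its crude block-averaging filter is an assumed stand-in for the multistage decimation filters that real hardware uses. An FPGA can pipeline the same multiply and accumulate operations so that they keep pace with the sample rate.

```python
import cmath
import math


def ddc(samples, f_if_hz, fs_hz, decimation):
    """Mix real IF samples to complex baseband, low-pass filter, and decimate.

    The block average below is a crude stand-in (assumption) for the
    multistage decimation filters used in real downconverters.
    """
    # NCO: a complex oscillator at -f_IF shifts the IF signal down to 0 Hz.
    mixed = [x * cmath.exp(-2j * math.pi * f_if_hz * n / fs_hz)
             for n, x in enumerate(samples)]
    baseband = []
    for start in range(0, len(mixed) - decimation + 1, decimation):
        block = mixed[start:start + decimation]
        baseband.append(sum(block) / decimation)  # average, then keep 1 of D
    return baseband


# Hypothetical test tone: amplitude 2, phase 0.5 rad, 100 kHz IF, 1 MS/s.
fs, f_if = 1e6, 100e3
tone = [2.0 * math.cos(2 * math.pi * f_if * n / fs + 0.5) for n in range(100)]
iq = ddc(tone, f_if, fs, decimation=10)
```

Each output sample comes out close to e^(j0.5), which is the tone's complex envelope scaled by 1/2. After mixing, the other half of the real cosine lands at -2 × f_IF, where the averaging filter removes it.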

Beyond typical OSP applications, you can now also use tools like the LabVIEW FPGA Module to configure the FPGA to perform measurements. For the GSM signal discussed earlier, the standard specifies a TDMA frame of about 4.6 ms, or roughly 5 ms. Because the FPGA can process the entire signal in parallel, you can perform a composite measurement similar to the one on a multicore processor. This effectively reduces test time from 11 ms for a single burst capture to a real-time capture of about 5 ms.
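For reference, GSM timing can be derived exactly from the bit period. The sketch below uses Python's `fractions` module to compute one timeslot and one eight-slot TDMA frame. A timeslot is the interval that carries a single burst, including its guard period. These are standard GSM figures, and the code is only arithmetic.

```python
from fractions import Fraction

# GSM gross bit rate is 1625/6 kbit/s (about 270.833 kbit/s), so one bit lasts 48/13 us.
BIT_PERIOD_US = Fraction(48, 13)
TIMESLOT_BITS = Fraction(625, 4)     # 156.25 bit periods per timeslot (burst + guard)
SLOTS_PER_FRAME = 8

timeslot_us = BIT_PERIOD_US * TIMESLOT_BITS   # exact: 7500/13 us
frame_us = timeslot_us * SLOTS_PER_FRAME      # exact: 60000/13 us

print(f"timeslot: {float(timeslot_us):.1f} us")        # about 576.9 us
print(f"TDMA frame: {float(frame_us) / 1000:.3f} ms")  # about 4.615 ms
```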

Fast PCI Express Backplane for Data Movement and Low-Latency Communication With Host Controller

After signal processing, the next most important factor in reducing test time is a fast bus for data movement. For short bursts of data, the difference between a fast bus and a slower one is not very evident. However, as acquisition sizes grow for wideband signals such as LTE, bus speed begins to have a noticeable impact on test time.
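A back-of-the-envelope calculation shows why acquisition size matters. The sketch compares transfer times on two buses for a short capture and for a 10 ms LTE frame (a 20 MHz LTE signal is commonly sampled at 30.72 MS/s). The 100 MB/s and 1,000 MB/s throughputs are assumed values for illustration, not measured PXI figures. The same applies to the 4 bytes per I/Q sample (16-bit I plus 16-bit Q).

```python
def transfer_time_ms(n_samples: int, bytes_per_sample: int, bus_mb_per_s: float) -> float:
    """Time to move a capture across a bus with the given sustained throughput."""
    return n_samples * bytes_per_sample / (bus_mb_per_s * 1e6) * 1e3


SHORT_BURST = 1_000                  # hypothetical short capture, in I/Q samples
LTE_FRAME = round(30.72e6 * 10e-3)   # 10 ms at 30.72 MS/s = 307,200 samples

for name, n in (("short burst", SHORT_BURST), ("LTE frame", LTE_FRAME)):
    slow = transfer_time_ms(n, 4, 100)     # assumed slower bus
    fast = transfer_time_ms(n, 4, 1000)    # assumed faster bus
    print(f"{name}: {slow:.3f} ms vs {fast:.3f} ms")
```

Under these assumptions, the two buses differ by only a few hundredths of a millisecond for the short burst, but by roughly 11 ms for the LTE frame.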

PXI Instruments Provide Accuracy and Speed

Testing RF front-end components such as duplexers, PAs, and transceivers on cell phones requires high-fidelity test equipment. Typically, traditional boxed instruments are used in characterization because of their higher accuracy, but these instruments don’t provide the speed required in manufacturing test environments. Big iron testers are fast and capable of parallel test, but don’t have the accuracy and debugging capabilities of boxed instruments. PXI instruments provide the accuracy required in characterization labs while providing the speed needed by manufacturing test engineers. Since PXI instruments are modular, you can use multiple mixed-signal instruments such as RF analyzers, generators, digital generators/analyzers, and power supplies together. You can tightly synchronize these instruments to improve test speed and make accurate measurements. Plus, with the PCI technology used in PXI, you can share data between instruments without software limitations.