Developing Monitoring Applications with the LabVIEW DSC Module for Control Systems

Overview

This document provides an overview of the OPC specification, a description of the LabVIEW Datalogging and Supervisory Control module, a summary of other National Instruments products that you can use to implement high-channel count systems, and instructions and tips on how to successfully develop such systems.

Contents

- The OPC Specification

- LabVIEW Datalogging and Supervisory Control Module Overview

- National Instruments OPC-Connective Equipment

- Building a High-Channel Count Application with LabVIEW

- Tag Engine Historical Logging Benchmarks

- Conclusion

- Appendix -- Benchmark Test Results

The OPC Specification

The following sections provide a basic overview of the OPC standard’s purpose, motivation, and goals, as well as a brief explanation of OLE, COM, and ActiveX.

OPC Introduction -- OLE for Process Control

OPC (OLE for Process Control) is a standard interface between numerous data sources, including devices on a factory floor, laboratory equipment, test system fixtures, and databases in a control room. To alleviate duplication of effort in developing device drivers, eliminate inconsistencies between drivers, provide support for hardware feature changes, and avoid access conflicts in industrial control systems, the OPC Foundation defined a set of standard interfaces that allow any client to access any OPC-compatible device. Most suppliers of industrial data acquisition and control devices work with the OPC Foundation standard.

OPC allows device-side server and application software -- two separate processes -- to communicate with each other through a standard Microsoft COM interface.

OPC was designed to be a layer of abstraction between the specific device and the program that needs to get information or control that device. The OPC standard specifies the behavior that the interfaces are expected to provide to the clients who use them, and the client receives the data from the interface using standard function calls and methods. Consequently, any computer analysis or data acquisition program can communicate with any industrial device so long as that program contains an OPC client protocol and the device driver has an OPC interface associated with it.

COM/ActiveX

The underlying layer of the OPC specification is based on Microsoft's COM/DCOM technology, which is also known as Object Linking and Embedding (OLE) or more commonly as ActiveX. COM (Component Object Model) and DCOM (Distributed COM) act as interfaces between the client and other system components. In modern operating systems, processes are shielded from each other, and a client that needs to talk to a component in another process cannot do so directly. COM provides an interface communication layer that allows local and remote procedure calls to be made between processes. DCOM is the natural extension of COM to support communication among objects on different computers -- on a LAN, WAN, or the Internet. COM is referred to as OLE when it is used to embed documents of one type inside a document of another type. One example of OLE is the ability to create and edit Microsoft Excel spreadsheets within a Microsoft Word document. COM is commonly known as ActiveX when referring to its Internet capabilities. An example of ActiveX is the ability to embed multimedia players within pages on the Web. OPC uses the term OLE because that was the most commonly used term to describe COM when the OPC Specification was defined.

The communication between processes in COM supports three basic types of interaction:

- Properties--individual settings for a control

- Methods--functions called on a control to perform a specific action

- Events--messages that a control creates to alert the outside world of what is happening within the process

OPC Implementation

The OPC Specification defines a standard COM interface for use in industrial acquisition and control settings. The specification includes a protocol for defining objects, setting properties on those objects, and standardizing function calls and events. In doing so, OPC accommodates a wide variety of data sources. Device I/O includes data acquisition devices, valves, fieldbuses, and Programmable Logic Controllers (PLCs). The specification also includes a protocol for working with Distributed Control Systems (DCSs) and application databases, as well as online data access, alarm and event handling, and historical data access for all of these data sources.

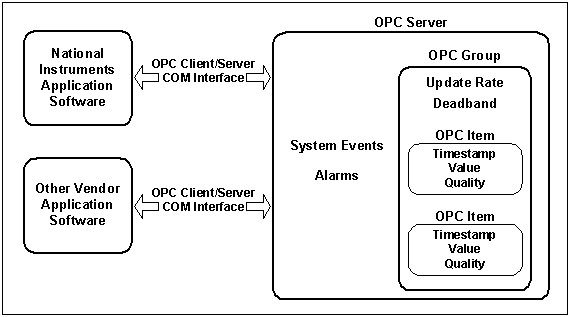

The data access server has three divisions:

- Server--contains all of the group objects

- Group--maintains information about itself and contains and organizes the OPC items

- Item--contains a unique identifier held within the group that acts as a reference for the individual data source, as well as value, quality, and timestamp information. The value is the data from the source, the quality status gives information about the device, and the timestamp is the time that the data was retrieved.

An OPC application accesses all items through the OPC group rather than through the item itself. The group also contains a specified update rate for the group, which tells the server at what rate to make data changes available to the OPC client. A deadband specified for each group tells the server to reject values if they have changed by less than the specified deadband percentage.
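The server, group, and item hierarchy can be pictured as nested containers. The following Python sketch is illustrative only: the class names, field names, and quality labels are assumptions made for this example and are not part of any OPC library or of the OPC interfaces themselves.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, Optional


@dataclass
class Item:
    """One data point: an identifier plus value, quality, and timestamp."""
    item_id: str
    value: Optional[float] = None
    quality: str = "BAD"  # hypothetical quality label
    timestamp: Optional[datetime] = None


@dataclass
class Group:
    """Organizes items and carries the shared update rate and deadband."""
    name: str
    update_rate_ms: int
    deadband_percent: float
    items: Dict[str, Item] = field(default_factory=dict)

    def add_item(self, item_id: str) -> Item:
        item = Item(item_id)
        self.items[item_id] = item
        return item

    def read(self, item_id: str) -> Item:
        # Clients reach an item through its group, not directly.
        return self.items[item_id]


@dataclass
class Server:
    """Top-level container for all group objects."""
    groups: Dict[str, Group] = field(default_factory=dict)

    def add_group(self, name: str, update_rate_ms: int,
                  deadband_percent: float) -> Group:
        group = Group(name, update_rate_ms, deadband_percent)
        self.groups[name] = group
        return group


server = Server()
mixer = server.add_group("Mixer", update_rate_ms=1000, deadband_percent=1.0)
temp = mixer.add_item("Mixer.Temperature")
temp.value, temp.quality = 72.5, "GOOD"
temp.timestamp = datetime.now(timezone.utc)
print(server.groups["Mixer"].read("Mixer.Temperature"))
```

Because the update rate and deadband live on the group, every item added to the group inherits the same settings.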

Figure 1. An example implementation of the OPC specification

The OPC server also provides alarm and event handling to clients. Within a server, an alarm is an abnormal condition of special significance to the client -- a condition associated with the state of the server or the state of a group or item within the server. For example, if a data source value that represents the real-world temperature of a mixer drops below a certain temperature, then the application can be sent an alarm so that it will be able to properly handle the low temperature. Events are detectable occurrences that are of importance to the server and client, such as system errors, configuration changes, and operator actions.

OPC also incorporates historical data access standards, which are a way to access the data stored by historical engines, including raw data storage servers and complex data storage and analysis servers. This feature of OPC allows interoperability of proprietary database systems.

OPC Ideal for High-Channel Count Applications

The OPC Foundation’s stated design, goals, and motivation for industry standardization of control systems has enabled the implementation of high-channel count systems that are efficient and user-friendly.

Client software developers and users of these applications have greater flexibility in implementing a solution that is tailored to their needs, because data is organized into groups and the naming, or tagging, of data points is determined by the client software. Grouping is beneficial in dealing with large sets of data sources because it provides greater organization of the data as well as easy reference to similar sets of data. In an OPC application, a tag gives a unique identifier to an I/O point. The OPC Specification leaves the responsibility for naming tags up to the client software, which can either name the tags programmatically or pass that task on to the user. In large systems, meaningful names for data sources improve usability by allowing the operator to choose easy-to-remember identifiers that specify the data source by function, hardware name, or another name at the operator’s discretion. This flexibility is a significant factor in the ability of client software to provide solutions that are tailored for high-channel count applications.

Client software also specifies the rate at which the server supplies new data to the client. The server is responsible for data publication, so the client becomes event-driven and can handle large sets of data much more efficiently, because it does not have to poll the data sources to get new data. Instead, the client software becomes a reactive object that waits for new data to arrive. The program does not need to perform time-consuming data polling, which frees up more time for analysis and datalogging.

The client also specifies deadbands on the server, which allows the client to determine what data is important and disregard data that is not significant. Deadband percentages reject values that do not change more than a certain percentage from the previous value recorded. By establishing moderate deadband values, a much greater number of channels can be monitored, because the client only receives information about channels that it deems essential, and it does not get flooded with superfluous information.
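The effect of a deadband on an event-driven client can be sketched in a few lines of Python. This is a minimal illustration, not the behavior of any particular OPC server: the class name, the callback, and the choice to apply the percentage to a full-scale range are assumptions made for this example.

```python
from typing import Callable, Optional


class DeadbandFilter:
    """Forwards a value only when it moves by more than the deadband."""

    def __init__(self, deadband_percent: float, range_low: float,
                 range_high: float, on_change: Callable[[float], None]) -> None:
        # Assumption: the percentage is applied to the full-scale range.
        self.threshold = (range_high - range_low) * deadband_percent / 100.0
        self.on_change = on_change
        self.last_sent: Optional[float] = None

    def update(self, value: float) -> bool:
        """Return True if the value was forwarded to the client."""
        if (self.last_sent is not None
                and abs(value - self.last_sent) <= self.threshold):
            return False  # change is inside the deadband: drop it
        self.last_sent = value
        self.on_change(value)  # the client reacts; it never polls
        return True


received = []
flt = DeadbandFilter(5.0, 0.0, 100.0, received.append)
for sample in [50.0, 51.0, 54.9, 55.1, 55.2, 49.0]:
    flt.update(sample)
print(received)  # [50.0, 55.1, 49.0]
```

With a 5% deadband on a 0 to 100 range, only three of the six samples reach the client, which is the reduction in traffic that makes larger channel counts manageable.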

The OPC specification enables high performance throughput, a necessity in high-channel count applications. The Performance and Throughput of OPC white paper on the OPC Foundation website discusses the results of testing a simulated OPC client. The paper shows that OPC technology allows for throughput higher than most client software packages can currently obtain. Throughput is highly dependent on the hardware configuration and the amount of data that can be obtained from the underlying data source. Therefore, OPC is not the bottleneck in throughput in most cases, which makes it able to handle the performance demands of high-channel count supervisory systems.

Because OPC is now an industry standard, client software can connect to almost every vendor device available. Client software now is compatible with many types of devices, so the software can be designed with large numbers and varieties of devices in mind. These are a few of the many characteristics of OPC that give development software a huge advantage when OPC connectivity is leveraged to implement high-channel count application software.

LabVIEW Datalogging and Supervisory Control Module Overview

NI leverages the flexibility and connectivity of OPC in many software products, including LabVIEW, Measurement Studio, connectivity packages for LabWindows/CVI, as well as the LabVIEW Datalogging and Supervisory Control module. In addition to client packages, NI provides OPC servers for DeviceNet hardware products.

LabVIEW Datalogging and Supervisory Control Module

The LabVIEW Datalogging and Supervisory Control module is an add-on to LabVIEW, designed to increase development productivity for engineers and scientists who are developing distributed datalogging and supervisory control applications. This module leverages the power of LabVIEW and uses OPC connectivity to create a graphical development environment that you can use to monitor and control any vendor’s data source, provided it has an OPC server. OPC provides a standard automation interface, while LabVIEW reduces development time. With automatic logging, alarm and event handling, and easily configured security features, the LabVIEW Datalogging and Supervisory Control module is the ideal programming environment to create high-channel count monitoring, control, and historical storage applications.

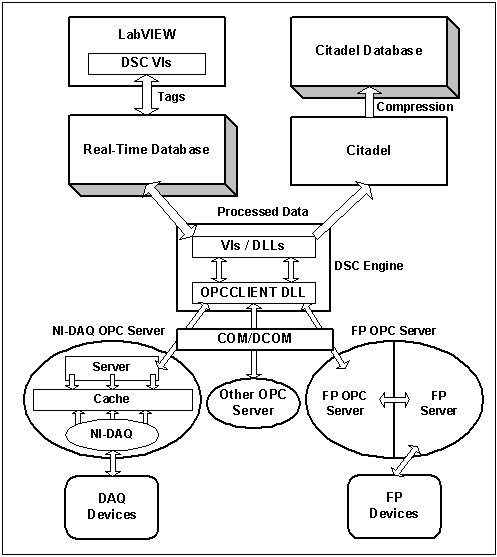

The main feature of the LabVIEW Datalogging and Supervisory Control module is the Tag Engine, which runs in a separate process from LabVIEW and is responsible for handling system events and alarms, receiving data from OPC servers, processing the data, updating the real-time database (RTDB), and logging that data to disk using Citadel. The engine maintains an event-driven architecture that waits for events or changes in data before taking any action, which allows for a greater efficiency when dealing with a high number of channels.

The lower end of the Tag Engine supports OPC server communication and Dynamic Data Exchange (DDE). The engine itself processes the data based on its current configuration and sends the information to the RTDB -- the shared memory used to communicate with LabVIEW. If specified, the engine also sends the information automatically to Citadel, the historical database. All of the engine settings are made using the Tag Configuration Editor, a utility that allows operators to create SCADA configuration files (SCF) that contain all of the settings for tags, alarms, and events.

Figure 2. Data Flow in the LabVIEW Datalogging and Supervisory Control Module

When the engine starts, it uses the active SCF to open connections to the tags by creating the specified groups and their sub-items in the server. Both an update rate and a deadband are associated with each group. After the engine creates the group objects, the server is responsible for supplying information about these tags (except for data within the deadband) at the specified update rates. The engine also extracts the alarm data from the configuration file and passes this information to the server, which maintains alarm statuses. These features of OPC allow the Tag Engine to become an efficient, event-driven program that does not have to poll data or spend resources determining whether a value is in an alarm state. The SCF also determines several settings for data processing that occurs within the engine. Tag Engine processing settings include scaling ranges for tags, an engine deadband, logging settings such as logging resolution and deadband, queue sizes, and system settings. Scaling and an engine deadband make sure that only significant, correctly scaled information is passed to LabVIEW applications and/or the historical database. A logging deadband allows the operator to log only the most significant data. The logging deadband setting, combined with the logging resolution setting, helps to control the database growth rate. Refer to the LabVIEW Datalogging and Supervisory Control Module Developer's Manual for more detailed explanations or instructions.
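The interaction of scaling, a logging deadband, and logging resolution can be illustrated with a short Python sketch. It is not the Tag Engine's implementation: the function names are hypothetical, the scaling is assumed to be linear, and the deadband is assumed to be a percentage of the engineering range.

```python
from typing import Iterable, List, Optional, Tuple


def scale(raw: float, raw_min: float, raw_max: float,
          eng_min: float, eng_max: float) -> float:
    """Linearly map a raw reading onto an engineering range (assumed linear)."""
    fraction = (raw - raw_min) / (raw_max - raw_min)
    return eng_min + fraction * (eng_max - eng_min)


def quantize(value: float, resolution: float) -> float:
    """Round a value to the nearest multiple of the logging resolution."""
    return round(round(value / resolution) * resolution, 10)


def select_for_logging(raw_samples: Iterable[float],
                       log_deadband_percent: float,
                       resolution: float,
                       raw_range: Tuple[float, float] = (0.0, 10.0),
                       eng_range: Tuple[float, float] = (0.0, 100.0)) -> List[float]:
    """Scale each sample, skip changes inside the deadband, round the rest."""
    threshold = (eng_range[1] - eng_range[0]) * log_deadband_percent / 100.0
    logged: List[float] = []
    last: Optional[float] = None
    for raw in raw_samples:
        value = scale(raw, raw_range[0], raw_range[1], eng_range[0], eng_range[1])
        if last is None or abs(value - last) > threshold:
            last = value
            logged.append(quantize(value, resolution))
    return logged


# Raw 0-10 V readings mapped to 0-100 engineering units.
samples = [2.500, 2.504, 2.560, 2.561, 3.100]
logged = select_for_logging(samples, log_deadband_percent=0.5, resolution=0.1)
print(logged)
```

In this example three of the five samples are kept. Widening the deadband reduces how many points are written, while a coarser resolution reduces the precision stored for each point; together they govern how quickly the database grows.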

The LabVIEW Datalogging and Supervisory Control module also includes a networking technology called Logos, which is a proprietary mechanism developed by National Instruments for inter-process communication between the company's industrial automation products. Logos enhances existing TCP/IP for multi-client/server applications, allowing a system to provide, subscribe, and publish data over a network to all registered computers. This technology allows National Instruments software to adapt to the needs of high performance applications such as the LabVIEW Datalogging and Supervisory Control module.

These architectural highlights help to demonstrate how the LabVIEW Datalogging and Supervisory Control module and its tag-processing engine are designed to handle large channel counts. The connectivity that OPC provides is a large factor, but if the software could not handle the amount of data coming in, that connectivity would be useless. The LabVIEW Datalogging and Supervisory Control module enables connections to a large number of data sources because it is able to effectively eliminate data that is deemed unimportant by controlling deadbands and update rates. The engine can devote the bulk of its resources to processing data and events, because the engine is a separate process from LabVIEW, and the data polling and initial deadbands are handled in the server, not the engine.

The Tag Configuration Editor makes the configuration of tags more efficient, and the ability to export the entire file to a spreadsheet enables easy alteration of all of the tags in the system. Through the configuration file, the Tag Engine can begin monitoring all of the tags at once, eliminating any need for you to go through the laborious process of configuring every tag each time the engine is launched. Instead, configuration files enable the engine to restore settings from a previous session and quickly launch archived configurations.

The development VIs installed with the module further enable you to develop custom applications specially suited to the needs of your system. You can set almost all engine functions and supervisory settings dynamically, including a large portion of the individual tag attributes. This capability gives you tremendous flexibility in developing an application that is highly tailored to your individual application. You also have the option of using HMI wizards that generate typical monitoring and control code automatically, allowing for quick and easy deployment of large-scale applications.

The availability of OPC connectivity, combined with LabVIEW and additional security, monitoring, and other features, allows for the immediate and efficient deployment of large-scale industrial-grade applications. By minimizing development time, LabVIEW enables you to concentrate on the solutions, not the implementation of those solutions.

National Instruments OPC-Connective Equipment

The LabVIEW Datalogging and Supervisory Control module is OPC-oriented and can connect to any device that has an OPC server, making these programs ideal for any industrial monitoring and control setting. NI DeviceNet products are used with OPC in data acquisition, industrial automation, data control systems, and lab settings. NI also offers highly integrated motion and machine vision products, which are another important aspect to many of these systems.

DeviceNet

DeviceNet is a low-level network designed to connect industrial devices (sensors and actuators) to higher-level controllers such as PCs, PLCs, and embedded controllers. National Instruments produces DeviceNet interfaces and drivers for all of the major programming environments. DeviceNet focuses on the interchangeability of devices from different vendors most often used in manufacturing applications and enjoys the advantages of a producer-consumer network. National Instruments DeviceNet interfaces can be used to prototype a DeviceNet system or application such as PC-based control, embedded control, or PC-Based HMI.

NI DeviceNet products include modules that work with various system types:

Building a High-Channel Count Application with LabVIEW

After selecting the new hardware products you need to create and/or improve your system and deciding on the best-suited software package, you are ready to build your application. National Instruments software makes this process straightforward by providing a highly integrated product line and intuitive, easy-to-use software. The following sections describe how to build a high-channel count application with the LabVIEW Datalogging and Supervisory Control module. This document does not describe how to configure new DeviceNet devices. If this is a requirement of your application, refer to your user documentation to get started with the applicable protocol and then start the building process at the Configure Tag Settings section of this document. This guide outlines only the software process. For detailed instructions on external signal connection or intricacies of the configuration or development software, refer to the device or software documentation.

Configure DAQ Devices

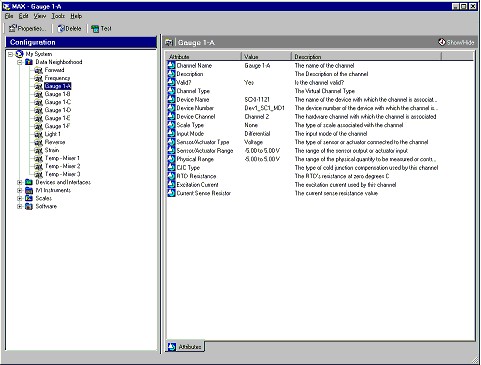

Use National Instruments Measurement & Automation Explorer to configure the DAQ devices in your system. Measurement & Automation Explorer lets you define virtual channels for your devices. A virtual channel gives a data source a descriptive name and can optionally scale the channel data to a real-world value. The OPC server uses these virtual channels when connecting to the data sources on the hardware.

Figure 3. Virtual Channels in Measurement & Automation Explorer

Right-click the Data Neighborhood icon in Measurement & Automation Explorer and select Create New from the shortcut menu. Follow the steps of the Channel Wizard to create a new virtual channel. The wizard will ask for the name for the virtual channel, the data source, and the scaling factor.

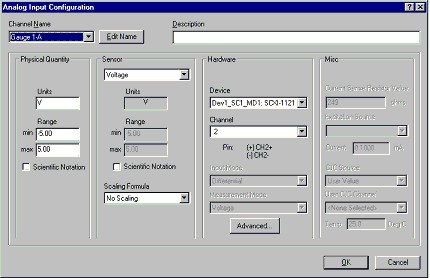

Figure 4. Configuring a Virtual Channel in Measurement & Automation Explorer

The following are a number of tips for creating virtual channels with the Channel Wizard:

- Multiple DAQ configurations can be saved using the save configuration feature of Measurement & Automation Explorer. Measurement & Automation Explorer saves files in a somewhat counter-intuitive manner in that you must save a new configuration before you modify it because any changes you make to the configuration are immediately saved to the current file.

- Virtual channels can be duplicated with their channel reference and name incremented with each successive duplicate. Take advantage of this feature of virtual channels to configure channels swiftly.

- Remember to set the scales for a channel before you duplicate it.

- Choose a naming convention for the tags so that you can easily remember and reference them.

- Remember that the more channels you create, the more work the drivers must do. If the program does not seem to be responding, be patient.

- Be careful not to reference two virtual channels to the same I/O point. This will cause errors in the OPC server.

- Many of the SCXI modules have software settings and/or hardware jumpers that change the behavior of the module. Be aware of these settings and, if necessary, change them both in Measurement & Automation Explorer and on the physical module.

- Remember that in datalogging and supervisory control applications, the emphasis is on monitoring nearly constant values. The Tag Engine is not intended for high-end waveform analysis, but to supervise relatively slowly changing signals. There is a trade-off between the number of channels and the sampling rate per channel that can be achieved.

After you set your channels, you can proceed to the next steps of the process.

Configure Tag Settings in Tag Configuration Editor

After you configure the hardware properly, launch LabVIEW. Open the Tag Configuration Editor (TCE) and begin to edit the SCF.

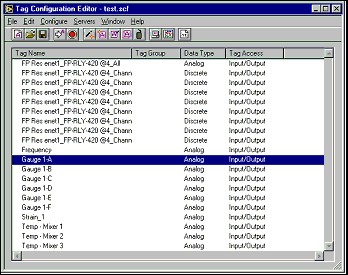

Figure 5. Tag Configuration Editor

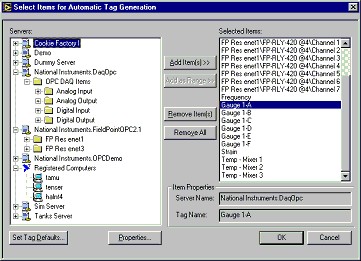

Use the Tag Wizard to connect to the available OPC servers on the system or to servers available on remote systems.

Figure 6. Tag Wizard

After you add the channels you selected, they appear in the list on the panel of the TCE. You can edit each of these tags from their default values, and all of the settings mentioned earlier in this document can be applied. Remember that the engine settings and historical settings are available to edit as well. Larger queue sizes for the engine can help to deal with larger amounts of data or a greater number of system events or alarms.

Below are a number of tips for using the Tag Wizard:

- You can use a separate piece of software called Server Explorer bundled with the LabVIEW Datalogging and Supervisory Control module to browse local and remote OPC servers in order to ensure that the channels are configured correctly.

- In the Tag Wizard, you can add groups of items all at once by clicking the folder icon and selecting Add Items.

- The I/O group settings apply to every item in the group. So, to create multiple update rate settings, create a new group for every set of tags with a certain update rate.

- For large numbers of tags, use the tag export command to export tags to a spreadsheet. Any spreadsheet program will allow you to easily manipulate the tag values that must be configured, including I/O group, update rates, alarms, deadbands, scaling, and so on. You can save variations of the files if you want to test different settings by creating new files in the TCE, importing these back into the editor, and testing the changes.

- When logging is enabled in the SCF, the data the engine receives is logged automatically as soon as you turn logging on. Alarm and event generation is also automatic and is easy to configure.

- The higher the number of channels, the lower the update rates must be in order to prevent engine overflow. If you are getting queue overflows, try lowering the update rate and/or increasing the queue size.

- There are four deadband settings: I/O Group deadband, Engine deadband, logging deadband, and alarm deadband.

- Be sure to update the scales for each tag according to the scales set in the tag’s hardware configuration program.

- Keep channels configured in Measurement & Automation Explorer and the TCE equal. The greater the number of virtual channels, the slower the performance of the OPC server, no matter how many channels are added to the TCE.

Run Engine

After the SCF is configured and saved, you can start the Tag Engine. Security settings can be applied as needed. If the application is intended for logging only, then you are finished, because all logging is done automatically. However, in many cases, a more customized application is needed, which brings us to dealing with LabVIEW VIs and application code.

Build Application Code

Use the HMI wizard whenever possible to automatically generate the code to monitor and update tag values. This greatly reduces development time and works well for simple monitoring and control applications. However, if you need a specialized application, the VIs provided with the module simplify tag manipulation and fit in with the other VIs provided with LabVIEW, including any advanced data analysis, manipulation, or testing that must be performed on the tag values.

The LabVIEW Datalogging and Supervisory Control module VIs enable you to provide applications that can do almost anything programmatically. For example, you can control the starting and stopping of the engine using VIs, you can create security settings and user accounts on-the-fly, you can access historical databases, and you can open and close the Historical Trend Viewer programmatically.

Below are a number of tips for building applications in LabVIEW:

- Accessing the historical database programmatically should be done with caution. The larger the database, the longer it will take to access it.

- Avoid using historical data access VIs if you can. Many times, the same purpose can be achieved by using tag attribute VIs that are much faster. For example, if you need to access the database with the current set of tags, use the Get Tags VI, not the Get Historical Tags VI.

- When using the tag read/write VIs, remember that every time a value is written, it is queued in the engine. Avoid inefficient programming that asserts a value into a write tag every time through a loop if not necessary. If deadbands are not set, this can lead to engine output overflows.

- When starting or stopping the engine programmatically, be aware that the calling VI can return before the engine is entirely finished starting or stopping. If data or file operations are engine dependent, use the engine status VI to determine when the engine is in the desired state before continuing the program flow.

- Many tag attributes are dynamic so keep this in mind before creating perhaps unnecessary SCFs, but also remember that programmatic changes to tags are not saved when the program exits.

- Use the timestamp along with the tag value to determine the exact time the value was retrieved. This knowledge can help determine the value’s relative importance in time to other tags.

- Remember, building a large-scale application is an iterative process; all of the steps in this document will most likely have to be done multiple times before the application is complete.
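Two of the tips above, writing a tag only when its value changes and waiting for the engine to reach a known state, follow patterns that are easy to show in text form. LabVIEW code is graphical, so the Python below is only a sketch of the logic: `ChangeOnlyWriter`, `wait_for_state`, and the state strings are hypothetical names, and the callables stand in for the tag write and engine status VIs.

```python
import time
from typing import Callable, Optional


class ChangeOnlyWriter:
    """Forwards a write only when the value differs from the last one sent."""

    def __init__(self, write: Callable[[float], None]) -> None:
        self._write = write
        self._last: Optional[float] = None

    def set(self, value: float) -> bool:
        if self._last is not None and value == self._last:
            return False  # unchanged: do not queue another write
        self._write(value)
        self._last = value
        return True


def wait_for_state(get_state: Callable[[], str], wanted: str,
                   timeout_s: float = 30.0, poll_s: float = 0.1) -> bool:
    """Poll get_state until it returns wanted or the timeout expires."""
    deadline = time.monotonic() + timeout_s
    while time.monotonic() < deadline:
        if get_state() == wanted:
            return True
        time.sleep(poll_s)
    return get_state() == wanted


# Stand-ins for the real tag write and engine status calls (hypothetical).
queued = []
writer = ChangeOnlyWriter(queued.append)
for setpoint in [10.0, 10.0, 10.0, 12.5, 12.5]:
    writer.set(setpoint)
print(queued)  # [10.0, 12.5]

states = iter(["starting", "starting", "running"])
print(wait_for_state(lambda: next(states), "running", timeout_s=5.0, poll_s=0.01))
```

Five loop iterations produce only two queued writes, and the caller proceeds only after the status check reports the desired state or the timeout expires.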

Successful programming in LabVIEW for connectivity and control of SCXI, FieldPoint, and other OPC tags, including those from third-party vendors, depends on installing and configuring the hardware for the specific application, and then configuring the devices in software (FieldPoint Explorer, Measurement & Automation Explorer, and the TCE) in collaboration with program development in LabVIEW using the Datalogging and Supervisory Control module.

Tag Engine Historical Logging Benchmarks

To gauge the performance of the Tag Engine in a real-world setting, a benchmarking test was developed using the DAQ OPC server and voltage inputs from several SCXI-1102C modules. The purpose of the tests was to determine the data throughput capabilities of the engine when streaming data to disk. The tests were also run to determine database size with respect to logging resolution. This information is valuable to customers who need to know the performance of the datalogging feature to successfully plan and develop their applications and is also useful to National Instruments R&D engineers who can use the benchmarking information to gauge and improve the product in the future.

System Specifications:

- PXI-8170 Controller

- 700 MHz Pentium III Processor

- 256 MB RAM

- 4200 RPM hard drive

- Input signals coming through PXI-6040E and SCXI-1001 Chassis

- Windows NT 4.0 Service Pack 6

- LabVIEW 6.0 Datalogging and Supervisory Control module

- NI-DAQ 6.9 RC7

The appendix contains all of the results of benchmark testing for 50, 100, and 200 channels. The other test variables are input frequency, alarms, and deadband. The input frequency is varied because of the asynchronous nature of OPC systems, and this variation sometimes affects the results. Alarms also affect throughput, and, in the case of these tests, alarms were dynamically enabled and disabled for a LO alarm value that was rarely breached. The deadband was also dynamically altered to test a 0% and a 5% deadband.

Results

The tests showed that the maximum throughput to disk from the DAQ OPC server was around 6750 pts/s for all channel settings with alarms disabled and deadband at 0%. The value is the average throughput for 60 seconds of logging. Each test setting was tested 5 times for error calculations. The throughput, of course, decreases when alarms are enabled and a higher deadband setting is used. When examining these results, keep in mind that throughput is also hardware dependent as mentioned in the OPC Performance white paper, so the results may be slightly higher or lower for different types of hardware. With that said, these tests do give a good idea of what kind of results can be expected in a real world system.

Tests also were run to determine logging size with respect to logging resolution, which determines the precision of data to log to disk. For example, a logging resolution of 0.1 will round all logged data to the nearest tenth. The following table gives an example situation and the size of the database created for that situation. The data was obtained using 100 SCXI channels logging for about 20 minutes with zero deadband and no alarms. The average points per second for the test runs was approximately 6700.

| Resolution | Size of database (MB) |
| --- | --- |
| 0.1 | 35.4 |
| 0.01 | 36.4 |
| 0.001 | 40.5 |
| 0.0001 | 43.5 |
| 0.00001 | 46.5 |

Each data point has a timestamp and a value, and in LabVIEW both are doubles (8 bytes each). The same number of data points logged to a raw database without compression would therefore yield a size of about 122 MB ((6700 pt/s x 20 min x 60 s/min x 16 bytes/point)/2^20 bytes/MB). By comparison, even with a precision of five decimal places, the compression is over 60%.
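The size estimate and the resulting compression can be checked with a few lines of Python. The figures are taken from the test description and the table above; the variable and function names are chosen for this example only.

```python
POINTS_PER_SECOND = 6700      # approximate average from the test runs
DURATION_S = 20 * 60          # about 20 minutes of logging
BYTES_PER_POINT = 16          # 8-byte timestamp + 8-byte value
BYTES_PER_MB = 2 ** 20

raw_mb = POINTS_PER_SECOND * DURATION_S * BYTES_PER_POINT / BYTES_PER_MB

# Database sizes in MB, keyed by logging resolution (from the table above).
logged_mb = {0.1: 35.4, 0.01: 36.4, 0.001: 40.5, 0.0001: 43.5, 0.00001: 46.5}


def reduction_percent(size_mb: float, raw: float = raw_mb) -> float:
    """Size reduction relative to the uncompressed estimate, in percent."""
    return (1.0 - size_mb / raw) * 100.0


print(f"raw estimate: {raw_mb:.1f} MB")
for resolution, size in logged_mb.items():
    print(f"resolution {resolution:g}: {size} MB, "
          f"{reduction_percent(size):.1f}% smaller")
```

The raw estimate comes out between 122 MB and 123 MB, and the largest database in the table, at a resolution of 0.00001, is still more than 60% smaller than that estimate.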

Analysis of the Results

The results for engine throughput as shown in the appendix are evidence that the throughput is not extremely deterministic. In fact, some cases show that the throughput is significantly higher or lower than other similar cases. This result can be expected from OPC-based systems because of the asynchronous nature of OPC. Also, modern operating systems often perform maintenance operations behind the scenes that can take disk access or CPU time away from the logging process. Of course, all of these tests are dependent on the processor, memory, and hard disk speed as well. To achieve maximum throughput, all other open programs should be stopped and closed. The CPU usage for the high performance throughput attained with these tests was nearly 100% for all of the tests run. The reason is that the tests were designed to push the computer to the maximum in order to find the limits of the logging engine. In most applications, this kind of throughput will not be necessary, so the CPU usage will be less.

The tests also showed that the server never actually achieves the stated update rate, although throughput generally increases as the update rate is increased. However, the maximum throughput is not achieved when the update rate is set to the highest level. At the 1 kHz update rate, the throughput actually went down in some cases.

The tests clearly demonstrate the trade-off between channel count and throughput per channel: the maximum throughput does not scale up with the number of channels, but is instead divided among them, so per-channel throughput falls as channels are added.
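The per-channel figures in the appendix are the total throughput divided by the channel count. The sketch below, in Python, illustrates this using three appendix rows (15 Hz input, zero deadband, alarms disabled, 100 Hz update rate). It assumes that the channel count corresponds to the numeric prefix of each configuration file name (050, 100, 200); the names in the code are illustrative.

```python
# Mean total throughput (pts/s) from the appendix for 15 Hz input, zero deadband,
# alarms disabled, 100 Hz update rate. Channel counts are inferred from the
# configuration file names (an assumption, not stated explicitly in the text).
total_pts_per_s = {50: 4800.1, 100: 7463.66, 200: 6322.027}


def per_channel(total, channels):
    """Mean throughput available to each channel, in points per second."""
    return total / channels


for channels, total in total_pts_per_s.items():
    rate = per_channel(total, channels)
    print(f"{channels:>3} channels: {total:8.1f} pts/s total, {rate:6.2f} pts/s per channel")
```

The results match the Pts/s/ch mean column in the corresponding appendix tables to within rounding.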

The logging resolution tests show that the database remains small relative to the amount of data logged, even at higher precision. The database grows more quickly at finer logging resolutions, so precision must be traded off against disk space.

The performance benchmarking tests of the automated logging feature of the LabVIEW Datalogging and Supervisory Control module show that it holds up under real-world conditions. The Tag Engine is able to sustain substantial throughput when logging data to disk, making the module an ideal solution for logging and monitoring applications.

Conclusion

The LabVIEW Datalogging and Supervisory Control module leverages the ease of use, functionality, and performance of LabVIEW, as well as the connectivity of OPC technology, to create a development environment that is ideal for high-channel count applications that need high-performance data logging and supervisory control. With National Instruments technology, developers can create efficient high-channel count systems easily and cost-effectively.

Appendix -- Benchmark Test Results

050analogSCXItags_0100HzUpdate_LOALARM.scf

| Input Frequency | Deadband | Alarms enabled | Pts/s mean | Pts/s stddev | Pts/s/ch mean | Pts/s/ch stddev |
| --- | --- | --- | --- | --- | --- | --- |
| 15 | 0 | 0 | 4800.1 | 181.406 | 96.002 | 3.628 |
| 15 | 0 | 1 | 4313.283 | 93.814 | 86.266 | 1.876 |
| 15 | 5 | 0 | 2488.394 | 301.402 | 49.768 | 6.028 |
| 15 | 5 | 1 | 2646.096 | 152.176 | 52.922 | 3.044 |
| 25 | 0 | 0 | 3892.207 | 122.138 | 77.844 | 2.443 |
| 25 | 0 | 1 | 4811.086 | 238.165 | 96.222 | 4.763 |
| 25 | 5 | 0 | 3276.118 | 277.999 | 65.522 | 5.56 |
| 25 | 5 | 1 | 3485.961 | 393.089 | 69.719 | 7.862 |
| 50 | 0 | 0 | 4307.054 | 145.055 | 86.141 | 2.901 |
| 50 | 0 | 1 | 4192.754 | 417.688 | 83.855 | 8.354 |
| 50 | 5 | 0 | 3534.843 | 353.108 | 70.697 | 7.062 |
| 50 | 5 | 1 | 3539.955 | 434.527 | 70.799 | 8.691 |

050analogSCXItags_0125HzUpdate_LOALARM.scf

| Input Frequency | Deadband | Alarms enabled | Pts/s mean | Pts/s stddev | Pts/s/ch mean | Pts/s/ch stddev |
| --- | --- | --- | --- | --- | --- | --- |
| 15 | 0 | 0 | 3621.118 | 387.88 | 72.422 | 7.758 |
| 15 | 0 | 1 | 1678.229 | 166.295 | 33.565 | 3.326 |
| 15 | 5 | 0 | 2565.209 | 258.11 | 51.304 | 5.162 |
| 15 | 5 | 1 | 1387.894 | 114.027 | 27.758 | 2.281 |
| 25 | 0 | 0 | 3881.511 | 209.72 | 77.63 | 4.194 |
| 25 | 0 | 1 | 1459.635 | 142.915 | 29.193 | 2.858 |
| 25 | 5 | 0 | 3315.87 | 255.096 | 66.317 | 5.102 |
| 25 | 5 | 1 | 1283.698 | 156.628 | 25.674 | 3.133 |
| 50 | 0 | 0 | 3909.971 | 332.397 | 78.199 | 6.648 |
| 50 | 0 | 1 | 1089.724 | 87.199 | 21.794 | 1.744 |
| 50 | 5 | 0 | 3642.293 | 264.865 | 72.846 | 5.297 |
| 50 | 5 | 1 | 924.865 | 85.49 | 18.497 | 1.71 |

050analogSCXItags_0150HzUpdate_LOALARM.scf

| Input Frequency | Deadband | Alarms enabled | Pts/s mean | Pts/s stddev | Pts/s/ch mean | Pts/s/ch stddev |
| --- | --- | --- | --- | --- | --- | --- |
| 15 | 0 | 0 | 2403.835 | 1967.152 | 48.077 | 39.343 |
| 15 | 0 | 1 | 4714.412 | 228.959 | 94.288 | 4.579 |
| 15 | 5 | 0 | 2603.851 | 254.252 | 52.077 | 5.085 |
| 15 | 5 | 1 | 2446.882 | 127.68 | 48.938 | 2.554 |
| 25 | 0 | 0 | 4655.863 | 319.131 | 93.117 | 6.383 |
| 25 | 0 | 1 | 4366.951 | 432.64 | 87.339 | 8.653 |
| 25 | 5 | 0 | 3315.511 | 414.233 | 66.31 | 8.285 |
| 25 | 5 | 1 | 3305.434 | 130.629 | 66.109 | 2.613 |
| 50 | 0 | 0 | 4631.007 | 264.975 | 92.62 | 5.299 |
| 50 | 0 | 1 | 4105.943 | 165.052 | 82.119 | 3.301 |
| 50 | 5 | 0 | 3532.189 | 458.423 | 70.644 | 9.168 |
| 50 | 5 | 1 | 3584.048 | 239.633 | 71.681 | 4.793 |

050analogSCXItags_0200HzUpdate_LOALARM.scf

| Input Frequency | Deadband | Alarms enabled | Pts/s mean | Pts/s stddev | Pts/s/ch mean | Pts/s/ch stddev |
| --- | --- | --- | --- | --- | --- | --- |
| 15 | 0 | 0 | 3632.944 | 1830.548 | 72.659 | 36.611 |
| 15 | 0 | 1 | 4338.982 | 461.076 | 86.78 | 9.222 |
| 15 | 5 | 0 | 2526.8 | 173.374 | 50.536 | 3.467 |
| 15 | 5 | 1 | 2628.534 | 249.331 | 52.571 | 4.987 |
| 25 | 0 | 0 | 4514.146 | 282.624 | 90.283 | 5.652 |
| 25 | 0 | 1 | 4482.441 | 430.199 | 89.649 | 8.604 |
| 25 | 5 | 0 | 3300.829 | 320.97 | 66.017 | 6.419 |
| 25 | 5 | 1 | 3255.685 | 367.861 | 65.114 | 7.357 |
| 50 | 0 | 0 | 4468.608 | 351.904 | 89.372 | 7.038 |
| 50 | 0 | 1 | 4591.961 | 358.127 | 91.839 | 7.163 |
| 50 | 5 | 0 | 3548.49 | 401.293 | 70.97 | 8.026 |
| 50 | 5 | 1 | 3559.784 | 208.303 | 71.196 | 4.166 |

050analogSCXItags_0500HzUpdate_LOALARM.scf

| Input Frequency | Deadband | Alarms enabled | Pts/s mean | Pts/s stddev | Pts/s/ch mean | Pts/s/ch stddev |
| --- | --- | --- | --- | --- | --- | --- |
| 15 | 0 | 0 | 4451.515 | 533.117 | 89.03 | 10.662 |
| 15 | 0 | 1 | 4259.575 | 261.326 | 85.191 | 5.227 |
| 15 | 5 | 0 | 2594.083 | 198.928 | 51.882 | 3.979 |
| 15 | 5 | 1 | 2566.057 | 224.291 | 51.321 | 4.486 |
| 25 | 0 | 0 | 4539.462 | 262.199 | 90.789 | 5.244 |
| 25 | 0 | 1 | 4494.468 | 406.503 | 89.889 | 8.13 |
| 25 | 5 | 0 | 3378.337 | 347.759 | 67.567 | 6.955 |
| 25 | 5 | 1 | 3290.424 | 196.739 | 65.808 | 3.935 |
| 50 | 0 | 0 | 4320.343 | 348.712 | 86.407 | 6.974 |
| 50 | 0 | 1 | 4621.962 | 285.354 | 92.439 | 5.707 |
| 50 | 5 | 0 | 3611.462 | 338.493 | 72.229 | 6.77 |
| 50 | 5 | 1 | 3514.111 | 270.896 | 70.282 | 5.418 |

050analogSCXItags_1000HzUpdate_LOALARM.scf

| Input Frequency | Deadband | Alarms enabled | Pts/s mean | Pts/s stddev | Pts/s/ch mean | Pts/s/ch stddev |
| --- | --- | --- | --- | --- | --- | --- |
| 15 | 0 | 0 | 4434.014 | 353.981 | 88.68 | 7.08 |
| 15 | 0 | 1 | 4560.524 | 431.711 | 91.21 | 8.634 |
| 15 | 5 | 0 | 2620.912 | 252.76 | 52.418 | 5.055 |
| 15 | 5 | 1 | 2597.776 | 226.095 | 51.956 | 4.522 |
| 25 | 0 | 0 | 4318.662 | 238.88 | 86.373 | 4.778 |
| 25 | 0 | 1 | 4590.248 | 330.939 | 91.805 | 6.619 |
| 25 | 5 | 0 | 3423.218 | 392.37 | 68.464 | 7.847 |
| 25 | 5 | 1 | 3380.163 | 311.12 | 67.603 | 6.222 |
| 50 | 0 | 0 | 4395.995 | 405.85 | 87.92 | 8.117 |
| 50 | 0 | 1 | 4276.581 | 323.391 | 85.532 | 6.468 |
| 50 | 5 | 0 | 3594.784 | 350.723 | 71.896 | 7.014 |
| 50 | 5 | 1 | 3493.525 | 322.097 | 69.871 | 6.442 |

100analogSCXItags_0100HzUpdate_LOALARM.scf

| Input Frequency | Deadband | Alarms enabled | Pts/s mean | Pts/s stddev | Pts/s/ch mean | Pts/s/ch stddev |
| --- | --- | --- | --- | --- | --- | --- |
| 15 | 0 | 0 | 7463.66 | 3845.574 | 74.637 | 38.456 |
| 15 | 0 | 1 | 7650.781 | 1641.545 | 76.508 | 16.415 |
| 15 | 5 | 0 | 4607.99 | 110.599 | 46.08 | 1.106 |
| 15 | 5 | 1 | 5209.85 | 151.395 | 52.098 | 1.514 |
| 25 | 0 | 0 | 5849.719 | 458.987 | 58.497 | 4.59 |
| 25 | 0 | 1 | 5708.465 | 459.889 | 57.085 | 4.599 |
| 25 | 5 | 0 | 5492.563 | 91.576 | 54.926 | 0.916 |
| 25 | 5 | 1 | 4883.407 | 155.671 | 48.834 | 1.557 |
| 50 | 0 | 0 | 6092.36 | 536.441 | 60.924 | 5.364 |
| 50 | 0 | 1 | 5657.667 | 428.484 | 56.577 | 4.285 |
| 50 | 5 | 0 | 4227.418 | 156.351 | 42.274 | 1.564 |
| 50 | 5 | 1 | 4139.345 | 344.015 | 41.393 | 3.44 |

100analogSCXItags_0125HzUpdate_LOALARM.scf

| Input Frequency | Deadband | Alarms enabled | Pts/s mean | Pts/s stddev | Pts/s/ch mean | Pts/s/ch stddev |
| --- | --- | --- | --- | --- | --- | --- |
| 15 | 0 | 0 | 5968.615 | 392.273 | 59.686 | 3.923 |
| 15 | 0 | 1 | 5740.173 | 499.663 | 57.402 | 4.997 |
| 15 | 5 | 0 | 4961.676 | 369.695 | 49.617 | 3.697 |
| 15 | 5 | 1 | 5190.204 | 86.355 | 51.902 | 0.864 |
| 25 | 0 | 0 | 5898.159 | 319.774 | 58.982 | 3.198 |
| 25 | 0 | 1 | 5906.751 | 439.68 | 59.068 | 4.397 |
| 25 | 5 | 0 | 5678.625 | 157.648 | 56.786 | 1.576 |
| 25 | 5 | 1 | 5567.471 | 93.557 | 55.675 | 0.936 |
| 50 | 0 | 0 | 6032.038 | 321.237 | 60.32 | 3.212 |
| 50 | 0 | 1 | 5788.724 | 224.128 | 57.887 | 2.241 |
| 50 | 5 | 0 | 4463.355 | 190.631 | 44.634 | 1.906 |
| 50 | 5 | 1 | 4062.016 | 115.455 | 40.62 | 1.155 |

100analogSCXItags_0150HzUpdate_LOALARM.scf

| Input Frequency | Deadband | Alarms enabled | Pts/s mean | Pts/s stddev | Pts/s/ch mean | Pts/s/ch stddev |
| --- | --- | --- | --- | --- | --- | --- |
| 15 | 0 | 0 | 6054.68 | 287.2 | 60.547 | 2.872 |
| 15 | 0 | 1 | 5781.489 | 113.042 | 57.815 | 1.13 |
| 15 | 5 | 0 | 4925.773 | 267.445 | 49.258 | 2.674 |
| 15 | 5 | 1 | 4952.848 | 211.224 | 49.528 | 2.112 |
| 25 | 0 | 0 | 6081.609 | 294.17 | 60.816 | 2.942 |
| 25 | 0 | 1 | 5874.128 | 287.422 | 58.741 | 2.874 |
| 25 | 5 | 0 | 5695.319 | 60.093 | 56.953 | 0.601 |
| 25 | 5 | 1 | 5569.718 | 84.763 | 55.697 | 0.848 |
| 50 | 0 | 0 | 6036.44 | 262.928 | 60.364 | 2.629 |
| 50 | 0 | 1 | 5835.608 | 240.926 | 58.356 | 2.409 |
| 50 | 5 | 0 | 4427.575 | 194.343 | 44.276 | 1.943 |
| 50 | 5 | 1 | 4179.701 | 146.522 | 41.797 | 1.465 |

100analogSCXItags_0200HzUpdate_LOALARM.scf

| Input Frequency | Deadband | Alarms enabled | Pts/s mean | Pts/s stddev | Pts/s/ch mean | Pts/s/ch stddev |
| --- | --- | --- | --- | --- | --- | --- |
| 15 | 0 | 0 | 5988.292 | 241.234 | 59.883 | 2.412 |
| 15 | 0 | 1 | 5900.519 | 264.001 | 59.005 | 2.64 |
| 15 | 5 | 0 | 4902.237 | 191.96 | 49.022 | 1.92 |
| 15 | 5 | 1 | 4911.521 | 259.654 | 49.115 | 2.597 |
| 25 | 0 | 0 | 6100.575 | 268.391 | 61.006 | 2.684 |
| 25 | 0 | 1 | 5835.394 | 268.223 | 58.354 | 2.682 |
| 25 | 5 | 0 | 5667.482 | 224.279 | 56.675 | 2.243 |
| 25 | 5 | 1 | 5113.705 | 166.457 | 51.137 | 1.665 |
| 50 | 0 | 0 | 6226.679 | 331.814 | 62.267 | 3.318 |
| 50 | 0 | 1 | 5920.765 | 269.403 | 59.208 | 2.694 |
| 50 | 5 | 0 | 4542.556 | 268.931 | 45.426 | 2.689 |
| 50 | 5 | 1 | 4274.89 | 328.33 | 42.749 | 3.283 |

200analogSCXItags_0100HzUpdate_LOALARM.scf

| Input Frequency | Deadband | Alarms enabled | Pts/s mean | Pts/s stddev | Pts/s/ch mean | Pts/s/ch stddev |
| --- | --- | --- | --- | --- | --- | --- |
| 15 | 0 | 0 | 6322.027 | 215.49 | 31.61 | 1.077 |
| 15 | 0 | 1 | 6227.543 | 129.925 | 31.138 | 0.65 |
| 15 | 5 | 0 | 3490.774 | 76.655 | 17.454 | 0.383 |
| 15 | 5 | 1 | 3299.065 | 142.25 | 16.495 | 0.711 |
| 25 | 0 | 0 | 6371.248 | 156.929 | 31.856 | 0.785 |
| 25 | 0 | 1 | 6263.195 | 155.702 | 31.316 | 0.779 |
| 25 | 5 | 0 | 4561.997 | 161.501 | 22.81 | 0.808 |
| 25 | 5 | 1 | 4491.155 | 90.42 | 22.456 | 0.452 |
| 50 | 0 | 0 | 6295.517 | 144.514 | 31.478 | 0.723 |
| 50 | 0 | 1 | 6289.58 | 130.29 | 31.448 | 0.651 |
| 50 | 5 | 0 | 2969.311 | 131.26 | 14.847 | 0.656 |
| 50 | 5 | 1 | 2810.879 | 128.148 | 14.054 | 0.641 |

200analogSCXItags_0125HzUpdate_LOALARM.scf

| Input Frequency | Deadband | Alarms enabled | Pts/s mean | Pts/s stddev | Pts/s/ch mean | Pts/s/ch stddev |
| --- | --- | --- | --- | --- | --- | --- |
| 15 | 0 | 0 | 6433.531 | 191.547 | 32.168 | 0.958 |
| 15 | 0 | 1 | 5876.709 | 211.066 | 29.384 | 1.055 |
| 15 | 5 | 0 | 5813.431 | 97.534 | 29.067 | 0.488 |
| 15 | 5 | 1 | 5436.672 | 91.529 | 27.183 | 0.458 |
| 25 | 0 | 0 | 6489.729 | 153.256 | 32.449 | 0.766 |
| 25 | 0 | 1 | 6042.608 | 168.946 | 30.213 | 0.845 |
| 25 | 5 | 0 | 5639.661 | 159.308 | 28.198 | 0.797 |
| 25 | 5 | 1 | 4888.349 | 179.72 | 24.442 | 0.899 |
| 50 | 0 | 0 | 6521.272 | 189.717 | 32.606 | 0.949 |
| 50 | 0 | 1 | 6103.931 | 159.198 | 30.52 | 0.796 |
| 50 | 5 | 0 | 4655.249 | 113.396 | 23.276 | 0.567 |
| 50 | 5 | 1 | 4474.253 | 138.998 | 22.371 | 0.695 |

200analogSCXItags_0150HzUpdate_LOALARM.scf

| Input Frequency | Deadband | Alarms enabled | Pts/s mean | Pts/s stddev | Pts/s/ch mean | Pts/s/ch stddev |
| --- | --- | --- | --- | --- | --- | --- |
| 15 | 0 | 0 | 6709.838 | 220.322 | 33.549 | 1.102 |
| 15 | 0 | 1 | 6162.232 | 172.924 | 30.811 | 0.865 |
| 15 | 5 | 0 | 5967.062 | 136.638 | 29.835 | 0.683 |
| 15 | 5 | 1 | 5347.471 | 113.218 | 26.737 | 0.566 |
| 25 | 0 | 0 | 6809.53 | 228.546 | 34.048 | 1.143 |
| 25 | 0 | 1 | 6410.144 | 143.07 | 32.051 | 0.715 |
| 25 | 5 | 0 | 5700.943 | 123.596 | 28.505 | 0.618 |
| 25 | 5 | 1 | 5308.383 | 75.813 | 26.542 | 0.379 |
| 50 | 0 | 0 | 6662.945 | 153.075 | 33.315 | 0.765 |
| 50 | 0 | 1 | 6275.631 | 123.868 | 31.378 | 0.619 |
| 50 | 5 | 0 | 4714.997 | 190.198 | 23.575 | 0.951 |
| 50 | 5 | 1 | 4484.56 | 168.535 | 22.423 | 0.843 |

200analogSCXItags_0200HzUpdate_LOALARM.scf

| Input Frequency | Deadband | Alarms enabled | Pts/s mean | Pts/s stddev | Pts/s/ch mean | Pts/s/ch stddev |
| --- | --- | --- | --- | --- | --- | --- |
| 15 | 0 | 0 | 5265.609 | 2634.163 | 26.328 | 13.171 |
| 15 | 0 | 1 | 5776.386 | 44.464 | 28.882 | 0.222 |
| 15 | 5 | 0 | 5777.333 | 156.227 | 28.887 | 0.781 |
| 15 | 5 | 1 | 5456.876 | 49.807 | 27.284 | 0.249 |
| 25 | 0 | 0 | 6397.569 | 168.105 | 31.988 | 0.841 |
| 25 | 0 | 1 | 6230.659 | 160.448 | 31.153 | 0.802 |
| 25 | 5 | 0 | 5625.919 | 171.145 | 28.13 | 0.856 |
| 25 | 5 | 1 | 4957.417 | 114.627 | 24.787 | 0.573 |
| 50 | 0 | 0 | 6399.063 | 164.431 | 31.995 | 0.822 |
| 50 | 0 | 1 | 6172.433 | 146.667 | 30.862 | 0.733 |
| 50 | 5 | 0 | 4794.329 | 98.903 | 23.972 | 0.495 |
| 50 | 5 | 1 | 4414.021 | 147.198 | 22.07 | 0.736 |

200analogSCXItags_0500HzUpdate_LOALARM.scf

| Input Frequency | Deadband | Alarms enabled | Pts/s mean | Pts/s stddev | Pts/s/ch mean | Pts/s/ch stddev |
| --- | --- | --- | --- | --- | --- | --- |
| 15 | 0 | 0 | 5286.239 | 2645.82 | 26.431 | 13.229 |
| 15 | 0 | 1 | 5880.349 | 26.005 | 29.402 | 0.13 |
| 15 | 5 | 0 | 5755.582 | 167.262 | 28.778 | 0.836 |
| 15 | 5 | 1 | 5378.095 | 78.136 | 26.89 | 0.391 |
| 25 | 0 | 0 | 6595.741 | 51.079 | 32.979 | 0.255 |
| 25 | 0 | 1 | 5991.145 | 206.783 | 29.956 | 1.034 |
| 25 | 5 | 0 | 5551.91 | 60.45 | 27.76 | 0.302 |
| 25 | 5 | 1 | 5073.992 | 108.607 | 25.37 | 0.543 |
| 50 | 0 | 0 | 6608.111 | 152.714 | 33.041 | 0.764 |
| 50 | 0 | 1 | 6145.35 | 169.217 | 30.727 | 0.846 |
| 50 | 5 | 0 | 4650.088 | 101.806 | 23.25 | 0.509 |
| 50 | 5 | 1 | 4463.691 | 207.123 | 22.318 | 1.036 |

200analogSCXItags_1000HzUpdate_LOALARM.scf

| Input Frequency | Deadband | Alarms enabled | Pts/s mean | Pts/s stddev | Pts/s/ch mean | Pts/s/ch stddev |
| --- | --- | --- | --- | --- | --- | --- |
| 15 | 0 | 0 | 4069.409 | 3321.474 | 20.347 | 16.607 |
| 15 | 0 | 1 | 6076.087 | 108.873 | 30.38 | 0.544 |
| 15 | 5 | 0 | 5922.881 | 121.426 | 29.614 | 0.607 |
| 15 | 5 | 1 | 5404.863 | 48.868 | 27.024 | 0.244 |
| 25 | 0 | 0 | 6769.941 | 108.65 | 33.85 | 0.543 |
| 25 | 0 | 1 | 6078.44 | 130.52 | 30.392 | 0.653 |
| 25 | 5 | 0 | 5661.183 | 138.065 | 28.306 | 0.69 |
| 25 | 5 | 1 | 5102.062 | 78.425 | 25.51 | 0.392 |
| 50 | 0 | 0 | 6774.692 | 196.357 | 33.873 | 0.982 |
| 50 | 0 | 1 | 6286.237 | 146.107 | 31.431 | 0.731 |
| 50 | 5 | 0 | 4824.092 | 186.237 | 24.12 | 0.931 |
| 50 | 5 | 1 | 4411.179 | 72.96 | 22.056 | 0.365 |