Minimize Schedule Risk With Off-the-Shelf Technology for LRU Testing

Overview

The lifecycles and operation of line-replaceable unit (LRU) test systems are governed by aerospace program cycles. Countless aerospace LRU testers are still in service because programs did not include the budget or time to update and extend the capability of deployed systems. When a test architecture can’t meet all test requirements, it can be difficult to propose changes to the status quo solution because the program must balance the schedule and cost impact of making alterations. This leads to decades-old test systems with few technology updates still in operation. Almost universally across the industry, deferring test infrastructure upgrades results in the accumulation of technical risk because each deferment increases the cost and risk associated with an upgrade on a subsequent program. This lack of technological readiness can limit an aerospace program’s options for meeting its test and quality requirements and certainly will hinder its ability to innovate and be competitive.

NI and our ecosystem of partner companies are focused on accelerating the process of building an aerospace LRU test system, so you can focus on what matters more: using your unique expertise to produce an optimized product.

Contents

- The Inner-Workings of a Test Architecture

- Commonalities of LRU Test Systems

- Freedom to Use Your Expertise

- The Benefits of NI HIL Simulators

The Inner-Workings of a Test Architecture

Aerospace program officers are primarily concerned with meeting customer requirements and preventing quality escapes, rather than with the inner workings of their test architecture. At the enterprise level, improving quality testing involves better model-based design, greater test automation, the ability to share common architectures between phases of the life cycle, and requirements tracking. But these process improvements typically require modernizing the underlying test infrastructure, so they are sacrificed to ensure that the program’s basic elements, like having a pin to test on, are completed on schedule.

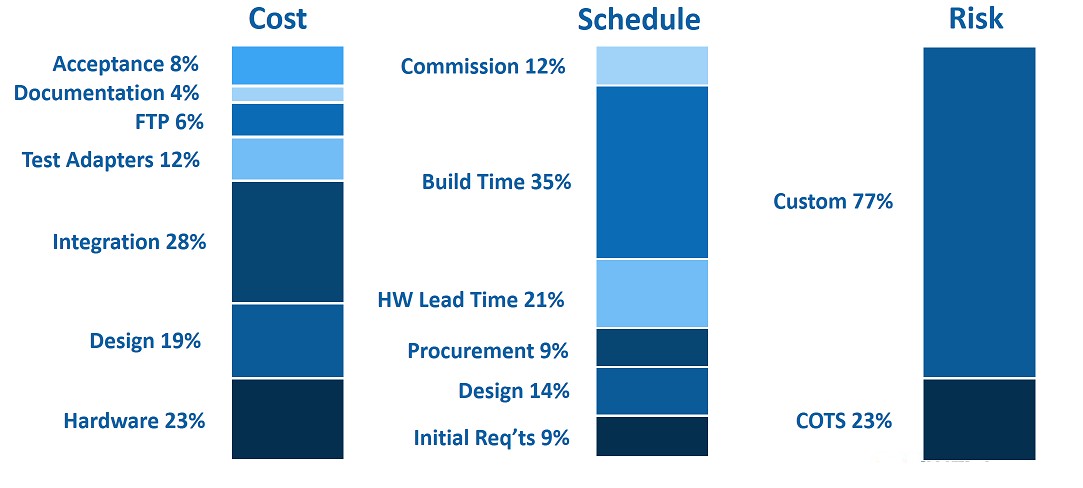

To keep attention focused on product quality, a test architecture needs to be flexible enough to allow for continuous evolution from program to program. Paradoxically, the migration to this type of architecture must occur within a single program. Capital budgets outside a program are rare, and the need for an upgrade typically arises in the middle of a program, when you’re most risk-averse. Any path forward requires a clear understanding of a program’s primary cost, risk, and schedule drivers. Tasks like designing the test system, point-to-point wiring, and building test adapters are essential to create a functioning test system, but they don’t necessarily contribute to increased product quality. The percentages shown in Figure 1 are typical of many aerospace companies.

Figure 1: Architecting and deploying a new LRU test system involves trade-offs in up-front cost, development time, and acceptance of risk. The typical LRU tester deployed today is highly custom and has a long build time, both of which add significant risk to a program schedule.

Hardware typically accounts for less than a quarter of the total cost, whereas design and build labor has the greatest impact on budget and schedule. Based on typical data, you can estimate $800 to $1,000 per I/O pin with an 8- to 12-month schedule, depending on the size of the system. To make an impact, you must address both cost and time.
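As a rough illustration, the per-pin rule of thumb can be turned into a quick budget range for a given system size. This is a minimal sketch: the function name `estimate_system_cost` is hypothetical, and the 600-pin count is only an example.

```python
def estimate_system_cost(pin_count: int,
                         low_per_pin: float = 800.0,
                         high_per_pin: float = 1000.0) -> tuple[float, float]:
    """Return (low, high) total cost estimates for an LRU tester.

    Uses the rule of thumb of $800 to $1,000 per I/O pin, which covers
    hardware plus design and build labor.
    """
    if pin_count < 0:
        raise ValueError("pin_count must be non-negative")
    return pin_count * low_per_pin, pin_count * high_per_pin


low, high = estimate_system_cost(600)
print(f"600-pin system: ${low:,.0f} to ${high:,.0f}")
# 600-pin system: $480,000 to $600,000
```

Because the estimate scales linearly with pin count, reducing the cost per pin matters as much as reducing the number of pins.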

There is a large technology overlap between LRU test systems across companies. If you off-load these common system components to off-the-shelf products, you are free to work on the niche pieces of the test system that only you can build and that most enhance your testing.

Commonalities of LRU Test Systems

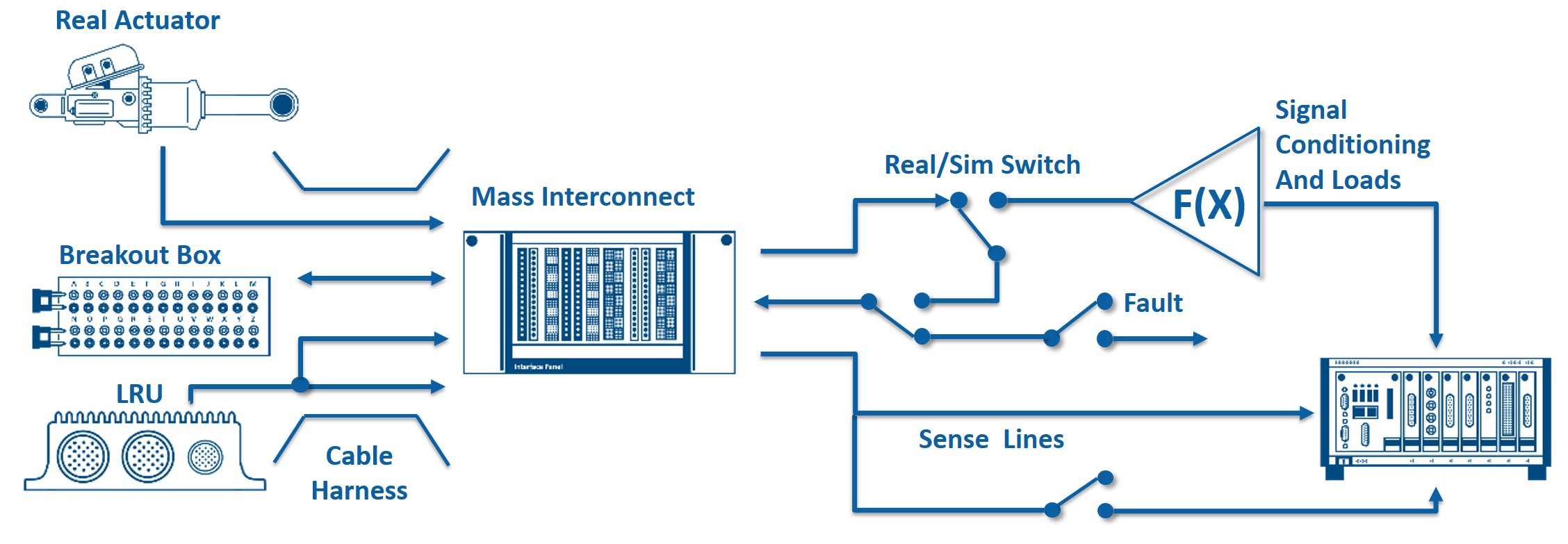

A basic LRU test system consists of a unit under test (UUT) interfaced to a mass interconnect, which connects to simulation I/O driven by a test executive running the aircraft simulation. You can customize this basic setup by adding signal conditioning for sensor simulation, the specific loads that the LRU needs to drive, and fault insertion for software testing. Integration lab testing involves connecting to real devices, both the devices being controlled and other control LRUs, which introduces the need to switch between real and simulated versions of those devices. Additional customizations can include a breakout box for manual faulting, signal injection, and rerouting, as well as sense lines that show exactly what the LRU is seeing during all phases of testing. Sense lines may require instrument-grade measurements.

Figure 2: A typical LRU test system includes I/O instrumentation; signal conditioning; fault insertion, sense, and switching lines; real and simulated stimulus signals; a mass interconnect; a breakout box and cable harnesses; real actuators; and the LRU under test.
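To make the inline functions concrete, here is a minimal Python model of the signal path for each UUT pin: whether it is driven by simulation or by the real device, whether a fault is inserted, and whether a sense line is routed. This is a hypothetical sketch, not an NI API. The class names, pin labels such as `J1-12`, and the fault types (open circuit, short to ground, short to supply) are illustrative assumptions.

```python
from dataclasses import dataclass
from enum import Enum


class Source(Enum):
    """Where a UUT input is connected (hypothetical model)."""
    SIMULATED = "simulated"  # driven by simulation I/O
    REAL = "real"            # switched to the real device in the integration lab


class Fault(Enum):
    """Example faults a fault insertion unit might apply in line with a signal."""
    NONE = "none"
    OPEN = "open"                  # break the signal path
    SHORT_TO_GROUND = "short_gnd"
    SHORT_TO_SUPPLY = "short_supply"


@dataclass
class Channel:
    """One UUT pin and the inline functions applied to it."""
    uut_pin: str
    source: Source = Source.SIMULATED
    fault: Fault = Fault.NONE
    sense: bool = True  # measure what the LRU actually sees on this pin

    def describe(self) -> str:
        path = f"{self.uut_pin} <- {self.source.value}"
        if self.fault is not Fault.NONE:
            path += f" [fault: {self.fault.value}]"
        if self.sense:
            path += " (sensed)"
        return path


# Switch one input to the real device and open-circuit another
# to check how the LRU software responds to a broken wire.
channels = [
    Channel("J1-12"),
    Channel("J1-13", source=Source.REAL),
    Channel("J1-14", fault=Fault.OPEN),
]
for ch in channels:
    print(ch.describe())
# J1-12 <- simulated (sensed)
# J1-13 <- real (sensed)
# J1-14 <- simulated [fault: open] (sensed)
```

Treating each pin as a configurable path, rather than a custom wiring change, is the idea behind moving these functions into standard hardware.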

Traditionally, NI has helped customers by consolidating the measurement and simulation components of this setup into one measurement and computing platform. However, this does not address the signal routing components, which are the major cost and schedule drivers. Take the industry-standard metrics of three minutes per wire termination and $5,000 per week per full-time equivalent (FTE) to cover a technician’s labor rate, facilities, and oversight. That works out to about $125 per hour, and at roughly one technician-hour of wiring per I/O pin, about $125 per pin. A full 600-pin system would take around 15 weeks and cost around $75,000, and that is without any design changes. In reality, the cost will likely be much higher.
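The wiring estimate can be checked with simple arithmetic. The sketch below reproduces it under two stated assumptions: a 40-hour work week, and about 20 terminations per pin. The second figure is the ratio implied by the 600-pin, 15-week result (one technician-hour per pin at three minutes per termination). The function name `wiring_labor_estimate` is hypothetical.

```python
def wiring_labor_estimate(pin_count: int,
                          terminations_per_pin: float = 20.0,  # assumed, implied by 1 h/pin
                          minutes_per_termination: float = 3.0,
                          weekly_fte_cost: float = 5000.0,
                          hours_per_week: float = 40.0) -> dict[str, float]:
    """Estimate technician time and cost for point-to-point wiring."""
    if pin_count <= 0:
        raise ValueError("pin_count must be positive")
    hours = pin_count * terminations_per_pin * minutes_per_termination / 60.0
    weeks = hours / hours_per_week
    cost = weeks * weekly_fte_cost
    return {
        "hourly_rate": weekly_fte_cost / hours_per_week,
        "hours": hours,
        "weeks": weeks,
        "cost": cost,
        "cost_per_pin": cost / pin_count,
    }


est = wiring_labor_estimate(600)
print(f"{est['weeks']:.0f} weeks, ${est['cost']:,.0f}, ${est['cost_per_pin']:.0f} per pin")
# 15 weeks, $75,000, $125 per pin
```

Changing `terminations_per_pin` shows how sensitive the estimate is to the wiring density of a particular design.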

Every LRU test system uses some variation of this basic setup. So why is there so much custom design and wiring for a system that is used industry-wide? Maybe this is the cost of doing business. But what if it didn’t have to be this way?

Freedom to Use Your Expertise

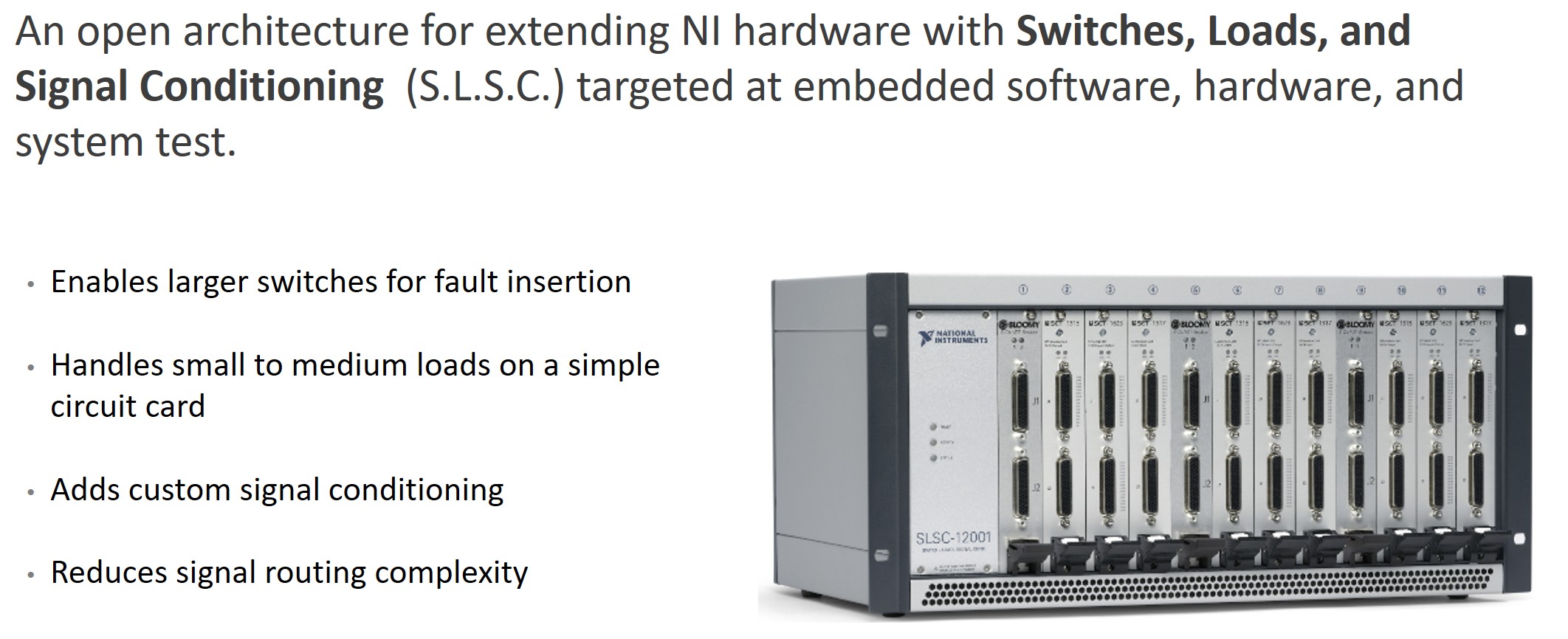

NI is challenging the status quo in industries where signal handling follows established patterns, as it does in LRU testing. With the introduction of NI’s Switch Load Signal Conditioning (SLSC) add-on for the PXI and CompactRIO measurement platforms, you can take standard analog and digital I/O types and transform and manipulate the signal paths to implement the kinds of inline functions that form the core of an LRU validation architecture.

Figure 3: The NI Switch Load Signal Conditioning platform extends the PXI and CompactRIO instrumentation platforms to complete more of the LRU test system. The NI SLSC platform includes signal conditioning, fault insertion, sense, and switching lines that then pass signals to I/O instrumentation.

To help eliminate the need for customization, NI offers solutions for many of the most common signal types. Highlights include high-voltage digital waveform signals, resistive sensor simulation, and ARINC 429 and MIL-STD-1553 cards. Many of these cards come from partner companies with direct expertise in this field, namely Bloomy Controls and SET. The intent is for these cards to cover most I/O needs. However, no vendor can know all of your test requirements, so some customization may be needed. With NI’s open and flexible platform, you can design your own SLSC cards based on NI’s module development kit, which provides all the details you need to design unique circuitry that’s compatible with the rest of the SLSC ecosystem. Alternatively, an NI partner can create the custom card for you. Once the card is complete, you effectively have an off-the-shelf product that works with the rest of the SLSC ecosystem. All SLSC cards use the same 44-pin D-SUB connector with the same pinout, which reduces the need for point-to-point wiring between terminal blocks. Terminal blocks can be replaced with standard interface panels that connect signals to actuators, cable harnesses, and the LRU.
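Because every card shares one connector and pinout, the mapping from card pins to interface panel positions can be described by a rule rather than drawn wire by wire. The sketch below is purely illustrative. `panel_position`, `wiring_list`, the sequential panel numbering, and the 12-slot chassis are assumptions, not NI’s actual pinout or panel layout.

```python
CONNECTOR_PINS = 44  # same 44-pin D-SUB and pinout on every card (per the text above)


def panel_position(slot: int, pin: int) -> int:
    """Map (card slot, connector pin) to a sequential interface panel position.

    Hypothetical numbering: positions are assigned slot by slot, so the
    mapping is a fixed formula instead of a hand-drawn wiring list.
    """
    if slot < 1:
        raise ValueError("slots are numbered from 1")
    if not 1 <= pin <= CONNECTOR_PINS:
        raise ValueError(f"pin must be between 1 and {CONNECTOR_PINS}")
    return (slot - 1) * CONNECTOR_PINS + pin


def wiring_list(num_slots: int) -> list[tuple[int, int, int]]:
    """Generate (slot, pin, panel_position) rows for a whole chassis."""
    return [(s, p, panel_position(s, p))
            for s in range(1, num_slots + 1)
            for p in range(1, CONNECTOR_PINS + 1)]


rows = wiring_list(12)  # 12 slots assumed for illustration
print(len(rows))  # 528
print(rows[44])   # (2, 1, 45)
```

Adding or swapping a card changes only which rows are used, not the cabling rule itself, which is the practical benefit of a common pinout.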

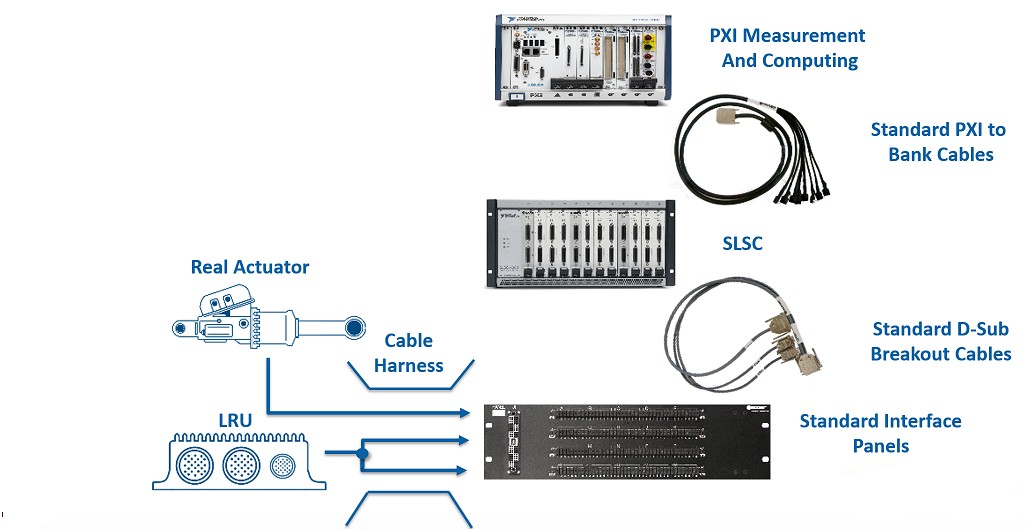

Figure 4: With the NI SLSC and PXI platforms, standardized cabling and interface panels, and common test rack components, NI can deliver an off-the-shelf test system that replaces legacy or customized LRU test system components.

With this approach, you can replace custom design with configuration of off-the-shelf components. This may not cover every signal in the system, but it removes the time, cost, and risk of building a custom solution for the majority of them. NI partners, such as Bloomy Controls, can provide ready-to-use racks. LRU testers can be delivered ready for your customization or tailored to your specifications, with a software starting point preconfigured by our partners. Because they require a minimum of custom design and nonrecurring engineering (NRE), these ready-to-use test architectures reduce lead time. They are still part of NI’s open and flexible platform, so you can modify your system and are not locked into a black-box solution.

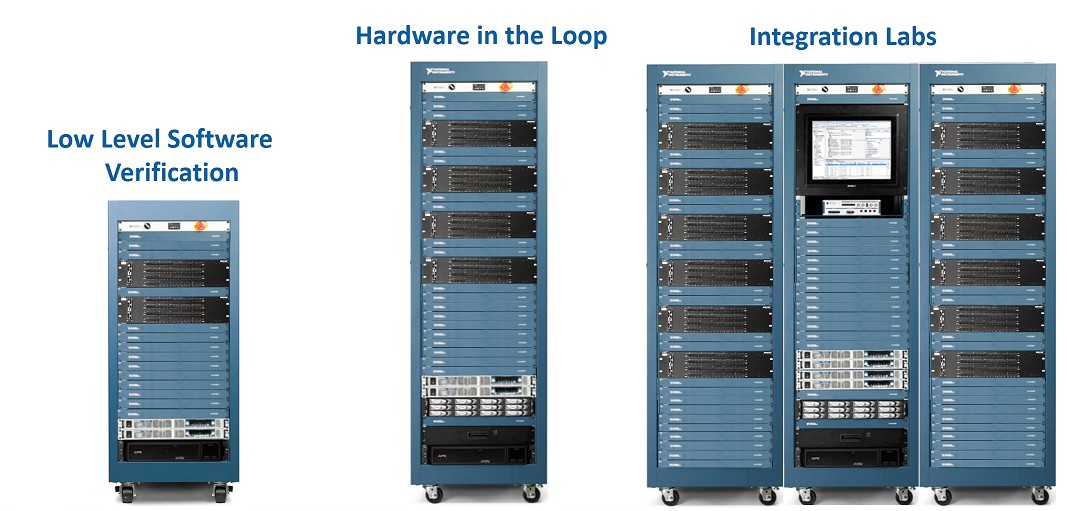

Figure 5: NI HIL Simulators are integrated using commercial off-the-shelf (COTS) rack components, from the programmable power supplies and power infrastructure to the HMIs and the 19-inch rack.

The Benefits of NI HIL Simulators

A program can typically invest in LRU test system enhancements only if these conditions are met:

- All changes must occur within one program cycle

- NRE costs must decrease or remain constant

- Point-to-point wiring must be moved to test adapters and/or remain unchanged

- All change costs must be minimized and the cost associated with commissioning the system must be justifiable

By replacing custom engineering solutions with off-the-shelf components, you can

- Reduce cost by as much as 23 percent, resulting in $600 to $700 per I/O pin with a higher percentage of COTS components

- Move point-to-point wiring to test adapters, so the wiring effort itself does not change

- Decrease the risk of schedule impacts by 48 percent, resulting in a four- to six-month timeline

- Off-load maintenance burdens to a third party
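The schedule figure is consistent with the baseline given earlier. Applying a 48 percent reduction to the 8- to 12-month schedule gives roughly 4.2 to 6.2 months, in line with the four- to six-month timeline. A minimal sketch of that arithmetic follows. The helper name `reduced_range` is hypothetical.

```python
def reduced_range(low: float, high: float, reduction: float) -> tuple[float, float]:
    """Apply a fractional reduction (0.48 means 48 percent) to a (low, high) range."""
    if not 0.0 <= reduction < 1.0:
        raise ValueError("reduction must be in [0, 1)")
    factor = 1.0 - reduction
    return low * factor, high * factor


# Baseline schedule of 8 to 12 months with a 48 percent reduction
months_low, months_high = reduced_range(8, 12, 0.48)
print(f"{months_low:.1f} to {months_high:.1f} months")
# 4.2 to 6.2 months
```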

With this approach, you can focus on areas that demand your unique expertise.