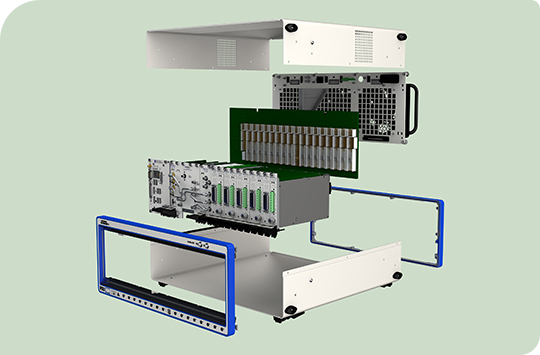

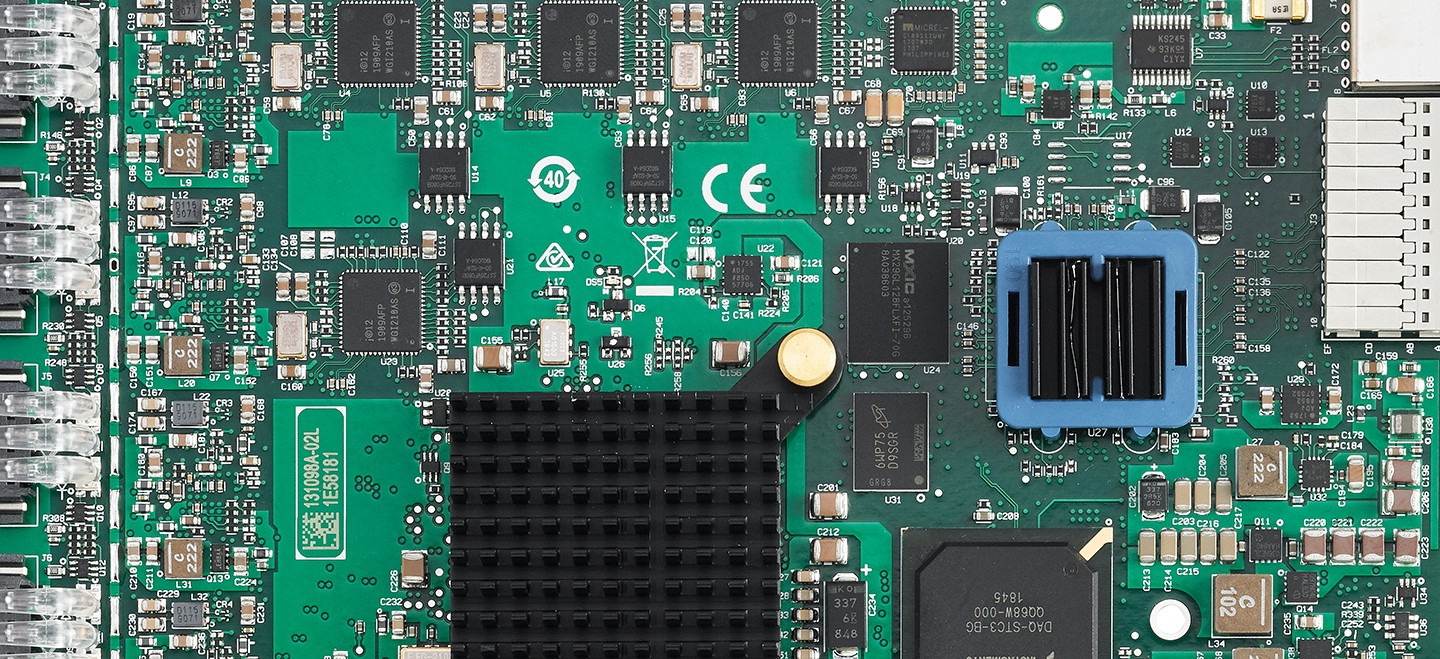

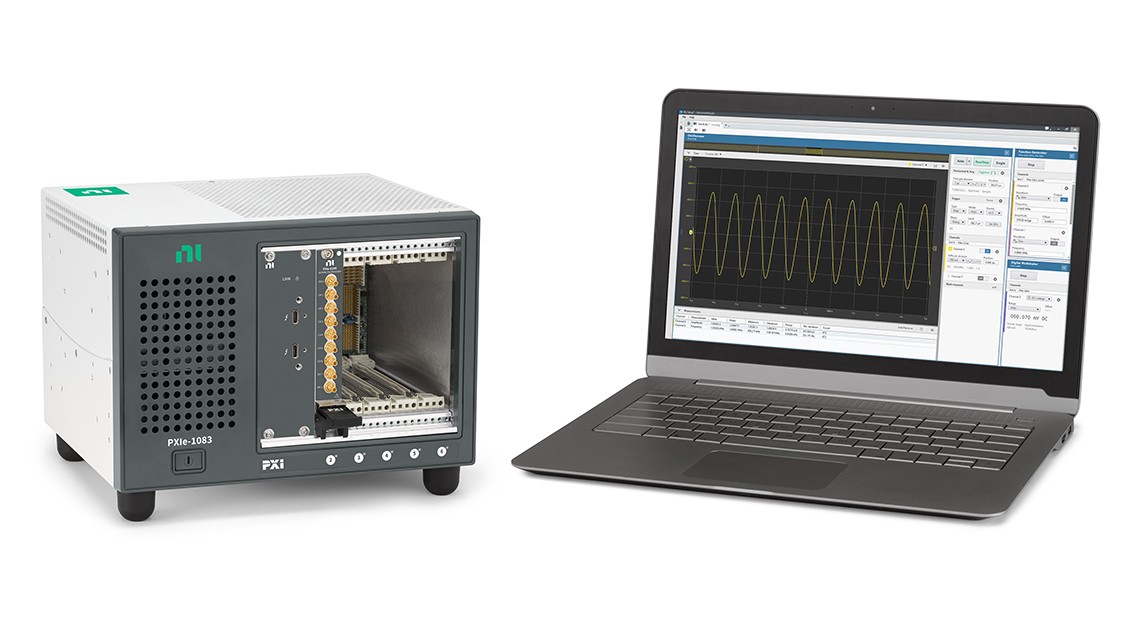

Engineers choose NI PXI for its high measurement performance and proven history of reducing test time and lowering the overall cost of test. The combination of PXI’s high performance, modularity, and software connectivity makes it ideal for test applications that require high accuracy, high channel counts, and mixed measurements.

Best for:

- Production test systems for electronic devices

- Automated validation test

- High-channel-count, high-speed test applications

- Combining instrument, sensor, and electrical measurements