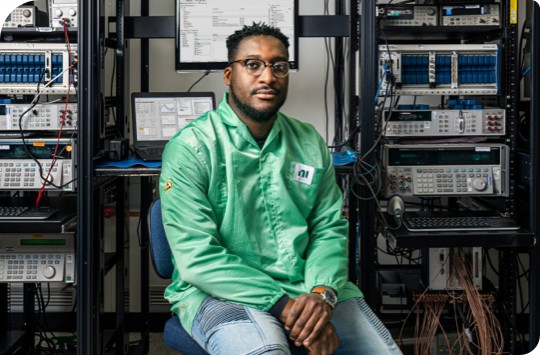

NI’s Kyle Voosen interviews Chris Robinson of Medtronic about what it means to engineer ambitiously. Chris describes the challenges his team faces in the automated production testing of pacemakers and shares insights into the innovations they have implemented to become a best-in-class global manufacturer.