From Friday, April 19th (11:00 PM CDT) through Saturday, April 20th (2:00 PM CDT), 2024, ni.com will undergo system upgrades that may result in temporary service interruption.

We appreciate your patience as we improve our online experience.

From Friday, April 19th (11:00 PM CDT) through Saturday, April 20th (2:00 PM CDT), 2024, ni.com will undergo system upgrades that may result in temporary service interruption.

We appreciate your patience as we improve our online experience.

LabVIEW has been used by millions of engineers and scientists to develop sophisticated test, measurement, and control applications. While LabVIEW provides a variety of features and tools ranging from interactive assistants to configurable user-defined interfaces, it is differentiated by its graphical, general-purpose programming language (known as G) along with an associated integrated compiler, a linker, and debugging tools.

To better understand the major value propositions of LabVIEW graphical programming, it is helpful to review some background on the first higher-level programming language. At the dawn of the modern computer age in the mid-1950s, a small team at IBM decided to create a more practical alternative to programming the enormous IBM 704 mainframe (a supercomputer in its day) in low-level assembly language, the most modern language available at the time. The result was FORTRAN, a more human-readable programming language whose purpose was to speed up the development process.

The engineering community was initially skeptical that this new method could outperform programs hand-crafted in assembly, but soon it was shown that FORTRAN-generated programs ran nearly as efficiently as those written in assembly. At the same time, FORTRAN reduced the number of programming statements necessary in a program by a factor of 20, which is why it is often considered the first higher-level programming language. Not surprisingly, FORTRAN quickly gained acceptance in the scientific community and remains influential.

Fifty years later, there are still important lessons in this anecdote. First, for more than 50 years, engineers have sought easier and faster ways to solve problems through computer programming. Second, the programming languages chosen by engineers to translate their tasks have trended toward higher levels of abstraction. These lessons help explain the immense popularity and widespread adoption of G since its inception in 1986; G represents an extremely high-level programming language whose purpose is to increase the productivity of its users while executing at nearly the same speeds as lower-level languages like FORTRAN, C, and C++.

LabVIEW is different from most other general-purpose programming languages in two major ways. First, G programming is performed by wiring together graphical icons on a diagram, which is then compiled directly to machine code so the computer processors can execute it. While represented graphically instead of with text, G contains the same programming concepts found in most traditional languages. For example, G includes all the standard constructs, such as data types, loops, event handling, variables, recursion, and object-oriented programming.

The second main differentiator is that G code developed with LabVIEW executes according to the rules of data flow instead of the more traditional procedural approach (in other words, a sequential series of commands to be carried out) found in most text-based programming languages like C and C++. Dataflow languages like G (as well as Agilent VEE, Microsoft Visual Programming Language, and Apple Quartz Composer) promote data as the main concept behind any program. Dataflow execution is data-driven, or data-dependent. The flow of data between nodes in the program, not sequential lines of text, determines the execution order.

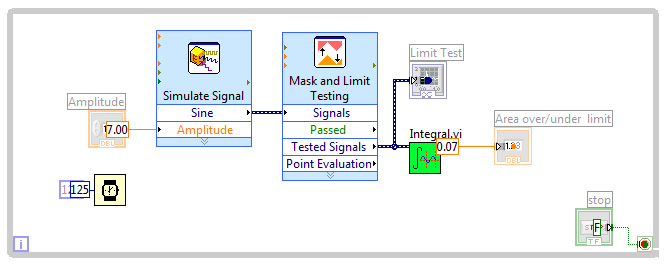

This distinction may seem minor at first, but the impact is extraordinary because it renders the data paths between parts of the program to be the developer’s main focus. Nodes in a LabVIEW program (in other words, functions, structures such as loops, subroutines, and so on) have inputs, process data, and produce outputs. Once all of a given node’s inputs contain valid data, that node executes its logic, produces output data, and passes that data to the next node in the dataflow path. A node that receives data from another node can execute only after the other node completes execution.

Like most people, engineers and scientists learn by seeing and processing images without any need for conscious contemplation. Many engineers and scientists can also be characterized as “visual thinkers,” meaning that they are especially adept at using visual processing to organize information. In other words, they think best in pictures. This is often reinforced in colleges and universities, where students are encouraged to model solutions to problems as process diagrams. However, most general-purpose programming languages require you to spend significant time learning the specific text-based syntax associated with that language and then map the structure of the language to the problem being solved. Graphical programming with G provides a more intuitive experience.

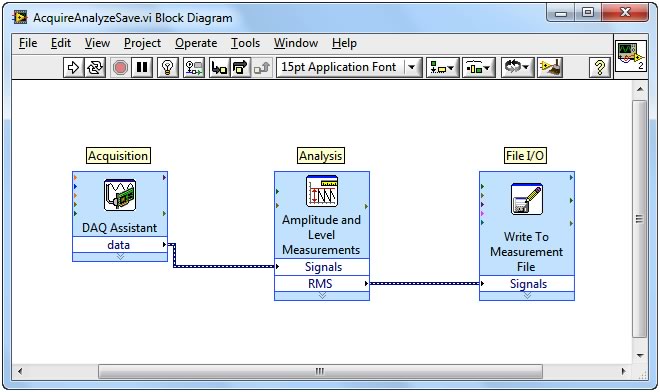

G code is typically easier for engineers and scientists to quickly understand because they are largely familiar with visualizing and even diagrammatically modeling processes and tasks in terms of block diagrams and flowcharts (which also follow the rules of data flow). In addition, because dataflow languages require you to base the structure of the program around the flow of data, you are encouraged to think in terms of the problem you need to solve. For example, a typical G program might first acquire several channels of temperature data, then pass the data to an analysis function, and, finally, write the analyzed data to disk. Overall, the flow of data and steps involved in this program are easy to understand within a LabVIEW diagram.

Because LabVIEW graphical G code is easy to comprehend, common programming tasks, like debugging, become more intuitive as well. For example, LabVIEW provides unique debugging tools that you can use to watch as data interactively moves through the wires of a LabVIEW program and see the data values as they pass from one function to another along the wires (known within LabVIEW as execution highlighting).

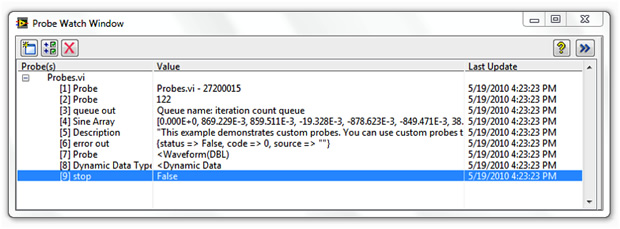

LabVIEW also offers debugging features for G comparable to those found in traditional programming tools. These features, accessible as part of the toolbar for a diagram, include probes, breakpoints, and step over/into/out of.

With the G debugging tools, you can probe data on many parts of the program simultaneously, pause execution, and step into a subroutine without complex programming. While this is possible in other programming languages, it is easier to visualize the state of the program and the relationships between parallel parts of the code (which are common in G because of its graphical nature).

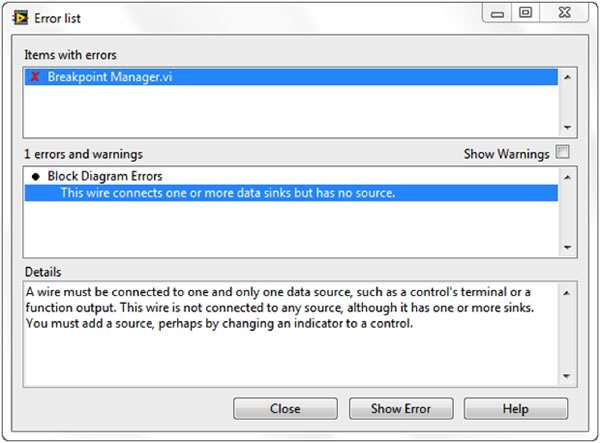

One of the most common debugging features used in LabVIEW is the always-on compiler. While you are developing a program, the compiler continuously checks for errors and provides semantic and syntactic feedback on the application. If an error exists, you cannot run the program – you see only a broken Run button in the toolbar.

Pressing the broken Run button opens a list of problems that you must address. Once you have addressed these issues the LabVIEW compiler can compile your program to machine code. Once compiled, the performance of G programs is comparable to that of more traditional text-based languages like C.

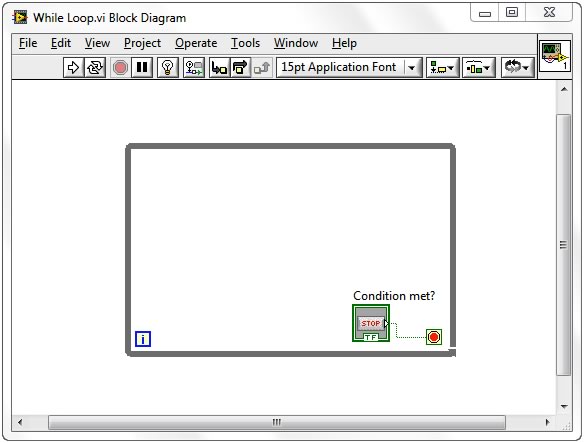

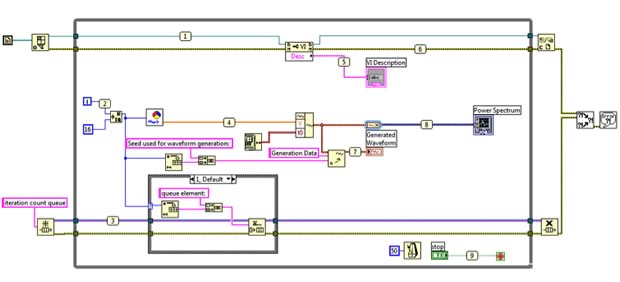

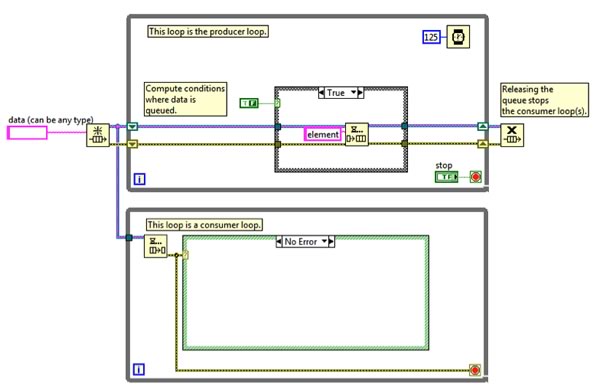

Dataflow languages like LabVIEW allow for automatic parallelization. In contrast to sequential languages like C and C++, graphical programs inherently contain information about which parts of the code should execute in parallel. For example, a common G design pattern is the Producer/Consumer Design Pattern, in which two separate While Loops execute independently: the first loop is responsible for producing data and the second loop processes data. Despite executing in parallel (possibly at different rates), data is passed between the two loops using queues, which are standard data structures in general-purpose programming languages.

Parallelism is important in computer programs because it can unlock performance gains relative to purely sequential programs due to recent changes in computer processor designs. For more than 40 years, computer chip manufacturers increased processor clock speed to increase chip performance. Today, however, increasing clock speeds for performance gains is no longer viable because of power consumption and heat dissipation constraints. As a result, chip vendors have instead moved to new chip architectures with multiple processor cores on a single chip.

To take advantage of the performance available in multicore processors, you must be able to use multithreading within your applications (in other words, break up applications into discrete sections that can be executed independently). If you use traditional text-based languages, you must explicitly create and manage threads to implement parallelism, a major challenge for nonexpert programmers.

In contrast, the parallel nature of G code makes multitasking and multithreading simple to implement. The built-in compiler continually works in the background to identify parallel sections of code. Whenever G code has a branch in a wire, or a parallel sequence of nodes on the diagram, the compiler tries to execute the code in parallel within a set of threads that LabVIEW manages automatically. In computer science terms, this is called “implicit parallelism” because you do not have to specifically write code with the purpose of running it in parallel; the G language takes care of parallelism on its own.

Beyond multithreading on a multicore system, G can provide even greater parallel execution by extending graphical programming to field-programmable gate arrays (FPGAs). FPGAs are reprogrammable silicon chips that are massively parallel – with each independent processing task assigned to a dedicated section of the chip – but they are not limited by the number of processing cores available. As a result, the performance of one part of the application is not adversely affected when more processing is added.

Historically, FPGA programming was the province of only a specially trained expert with a deep understanding of digital hardware design languages. Increasingly, engineers without FPGA expertise want to use FPGA-based custom hardware for unique timing and triggering routines, ultrahigh-speed control, interfacing to digital protocols, digital signal processing (DSP), RF and communications, and many other applications requiring high-speed hardware reliability, customization, and tight determinism. G is particularly suited for FPGA programming because it clearly represents parallelism and data flow and is quickly growing in popularity as a tool of choice for developers seeking parallel processing and deterministic execution.

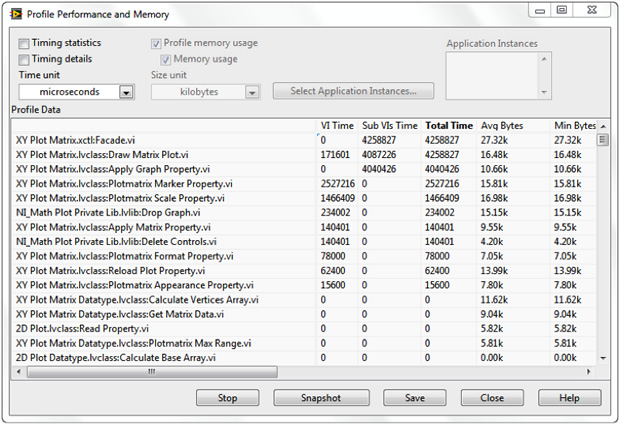

When G code exhibits unusual or unexpected behavior that you cannot easily resolve using the previously mentioned debugging tools, you can use more advanced debugging features with the LabVIEW Desktop Execution Trace Toolkit. This toolkit is designed for more advanced users who want to perform dynamic code analysis for the following:

Detecting memory and reference leaks

Isolating the source of a specific event or undesired behavior

Screening applications for areas where performance can be improved

Identifying the last call before an error

Ensuring the execution of an application is the same on different targets

While G code provides an excellent representation for parallelism and removes the requirement on developers to understand and manage computer memory, it is not necessarily ideal for every task. In particular, mathematical formulas and equations can often be more succinctly represented with text. For that reason, you can use LabVIEW to combine graphical programming with several forms of text-based programming. Working within LabVIEW, you can choose a textual approach, a graphical approach, or a combination of the two.

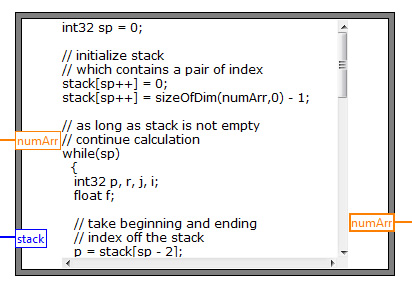

For example, LabVIEW contains the concept of the Formula Node, which evaluates textual mathematical formulas and expressions similar to C on the block diagram. These mathematical formulas can execute side by side and integrate with graphical LabVIEW code.

LabVIEW and its graphical, dataflow programming language provides a better way for you to solve problems than traditional, lower-level alternatives, and the proof is in its longevity. The key differentiators for programming in G are the intuitive graphical code that you can create and the data-driven rules that govern its execution combine to offer a programming experience that expresses the thought processes of its users more closely than other languages. Despite G being a higher-level language, you can still achieve performance comparable to that of languages like C because of the built-in LabVIEW compiler.